Complexity: A Guided Tour - Melanie Mitchell (2009)

Part I. Background and History

Science has explored the microcosmos and the macrocosmos; we have a good sense of the lay of the land. The great unexplored frontier is complexity.

—Heinz Pagels, The Dreams of Reason

Chapter 1. What Is Complexity?

Ideas thus made up of several simple ones put together, I call Complex; such as are Beauty, Gratitude, a Man, an Army, the Universe.

—John Locke, An Essay Concerning Human Understanding

Brazil: The Amazon rain forest. Half a million army ants are on the march. No one is in charge of this army; it has no commander. Each individual ant is nearly blind and minimally intelligent, but the marching ants together create a coherent fan-shaped mass of movement that swarms over, kills, and efficiently devours all prey in its path. What cannot be devoured right away is carried with the swarm. After a day of raiding and destroying the edible life over a dense forest the size of a football field, the ants build their nighttime shelter—a chain-mail ball a yard across made up of the workers’ linked bodies, sheltering the young larvae and mother queen at the center. When dawn arrives, the living ball melts away ant by ant as the colony members once again take their places for the day’s march.

Nigel Franks, a biologist specializing in ant behavior, has written, “The solitary army ant is behaviorally one of the least sophisticated animals imaginable,” and, “If 100 army ants are placed on a flat surface, they will walk around and around in never decreasing circles until they die of exhaustion.” Yet put half a million of them together, and the group as a whole becomes what some have called a “superorganism” with “collective intelligence.”

How does this come about? Although many things are known about ant colony behavior, scientists still do not fully understand all the mechanisms underlying a colony’s collective intelligence. As Franks comments further, “I have studied E. burchelli [a common species of army ant] for many years, and for me the mysteries of its social organization still multiply faster than the rate at which its social structure can be explored.”

The mysteries of army ants are a microcosm for the mysteries of many natural and social systems that we think of as “complex.” No one knows exactly how any community of social organisms (ants, termites, humans) comes together to collectively build the elaborate structures that increase the survival probability of the community as a whole. Similarly mysterious is how the intricate machinery of the immune system fights disease; how a group of cells organizes itself to be an eye or a brain; how independent members of an economy, each working chiefly for its own gain, produce complex but structured global markets; or, most mysteriously, how the phenomena we call “intelligence” and “consciousness” emerge from nonintelligent, nonconscious material substrates.

Such questions are the topics of complex systems, an interdisciplinary field of research that seeks to explain how large numbers of relatively simple entities organize themselves, without the benefit of any central controller, into a collective whole that creates patterns, uses information, and, in some cases, evolves and learns. The word complex comes from the Latin root plectere: to weave, entwine. In complex systems, many simple parts are irreducibly entwined, and the field of complexity is itself an entwining of many different fields.

Complex systems researchers assert that different complex systems in nature, such as insect colonies, immune systems, brains, and economies, have much in common. Let’s look more closely.

Insect Colonies

Colonies of social insects provide some of the richest and most mysterious examples of complex systems in nature. An ant colony, for instance, can consist of hundreds to millions of individual ants, each one a rather simple creature that obeys its genetic imperatives to seek out food, respond in simple ways to the chemical signals of other ants in its colony, fight intruders, and so forth. However, as any casual observer of the outdoors can attest, the ants in a colony, each performing its own relatively simple actions, work together to build astoundingly complex structures that are clearly of great importance for the survival of the colony as a whole. Consider, for example, their use of soil, leaves, and twigs to construct huge nests of great strength and stability, with large networks of underground passages and dry, warm, brooding chambers whose temperatures are carefully controlled by decaying nest materials and the ants’ own bodies. Consider also the long bridges certain species of ants build with their own bodies to allow emigration from one nest site to another via tree branches separated by great distances (to an ant, that is) (figure 1.1). Although much is now understood about ants and their social structures, scientists still can fully explain neither their individual nor group behavior.

The Brain

The cognitive scientist Douglas Hofstadter, in his book Gödel, Escher, Bach, makes an extended analogy between ant colonies and brains, both being complex systems in which relatively simple components with only limited communication among themselves collectively give rise to complicated and sophisticated system-wide (“global”) behavior. In the brain, the simple components are cells called neurons. The brain is made up of many different types of cells in addition to neurons, but most brain scientists believe that the actions of neurons and the patterns of connections among groups of neurons are what cause perception, thought, feelings, consciousness, and the other important large-scale brain activities.

FIGURE 1.1. Ants build a bridge with their bodies to allow the colony to take the shortest path across a gap. (Photograph courtesy of Carl Rettenmeyer.)

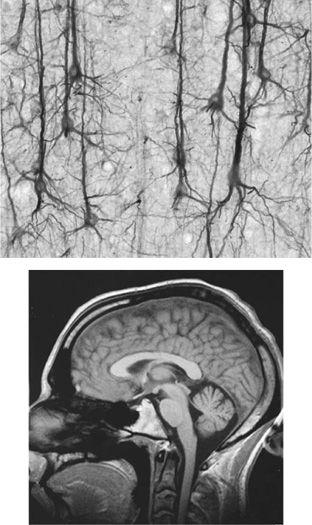

Neurons are pictured in figure 1.2 (top). A neuron consists of three main parts: the cell body (soma), the branches that transmit the cell’s input from other neurons (dendrites), and the single trunk transmitting the cell’s output to other neurons (axon). Very roughly, a neuron can be either in an active state (firing) or an inactive state (not firing). A neuron fires when it receives enough signals from other neurons through its dendrites. Firing consists of sending an electric pulse through the axon, which is then converted into a chemical signal via chemicals called neurotransmitters. This chemical signal in turn activates other neurons through their dendrites. The firing frequency and the resulting chemical output signals of a neuron can vary over time according to both its input and how much it has been firing recently.
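The "fires when it receives enough signals" rule can be illustrated with a very rough sketch. The code below is not a model from the text: the names `step`, `weights`, and `threshold` are hypothetical, each neuron is reduced to a 0/1 state, and the dependence of firing on recent activity mentioned above is deliberately ignored.

```python
def step(states, weights, threshold):
    """Advance a toy network of on/off neurons by one time step.

    states[j] is 1 if neuron j is currently firing, else 0.
    weights[i][j] is the (assumed) strength of the signal that
    neuron j sends to neuron i when j fires.
    A neuron fires next step if its summed input reaches the threshold.
    """
    new_states = []
    for i in range(len(states)):
        total_input = sum(weights[i][j] * states[j] for j in range(len(states)))
        new_states.append(1 if total_input >= threshold else 0)
    return new_states


# Neurons 0 and 1 both signal neuron 2; neither signal alone is enough.
weights = [
    [0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0],
    [0.6, 0.6, 0.0],
]
print(step([1, 1, 0], weights, threshold=1.0))  # [0, 0, 1]
print(step([1, 0, 0], weights, threshold=1.0))  # [0, 0, 0]
```

Even in this stripped-down form, whether neuron 2 fires depends on the combined activity of the others, which is the feature the comparison with ant colonies relies on.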

These actions recall those of ants in a colony: individuals (neurons or ants) perceive signals from other individuals, and a sufficient summed strength of these signals causes the individuals to act in certain ways that produce additional signals. The overall effects can be very complex. We saw that an explanation of ants and their social structures is still incomplete; similarly, scientists don’t yet understand how the actions of individual or dense networks of neurons give rise to the large-scale behavior of the brain (figure 1.2, bottom). They don’t understand what the neuronal signals mean, how large numbers of neurons work together to produce global cognitive behavior, or how exactly they cause the brain to think thoughts and learn new things. And again, perhaps most puzzling is how such an elaborate signaling system with such powerful collective abilities ever arose through evolution.

The Immune System

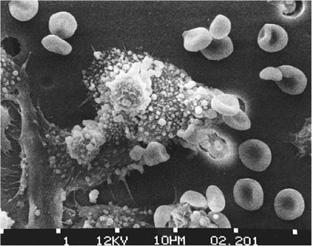

The immune system is another example of a system in which relatively simple components collectively give rise to very complex behavior involving signaling and control, and in which adaptation occurs over time. A photograph illustrating the immune system’s complexity is given in figure 1.3.

FIGURE 1.2. Top: microscopic view of neurons, visible via staining. Bottom: a human brain. How does the behavior at one level give rise to that of the next level? (Neuron photograph from brainmaps.org [http://brainmaps.org/smi32-pic.jpg], licensed under Creative Commons [http://creativecommons.org/licenses/by/3.0/]. Brain photograph courtesy of Christian R. Linder.)

FIGURE 1.3. Immune system cells attacking a cancer cell. (Photograph by Susan Arnold, from National Cancer Institute Visuals Online [http://visualsonline.cancer.gov/details.cfm?imageid=2370].)

The immune system, like the brain, differs in sophistication in different animals, but the overall principles are the same across many species. The immune system consists of many different types of cells distributed over the entire body (in blood, bone marrow, lymph nodes, and other organs). This collection of cells works together in an effective and efficient way without any central control.

The star players of the immune system are white blood cells, otherwise known as lymphocytes. Each lymphocyte can recognize, via receptors on its cell body, molecules corresponding to certain possible invaders (e.g., bacteria). Some one trillion of these patrolling sentries circulate in the blood at a given time, each ready to sound the alarm if it is activated—that is, if its particular receptors encounter, by chance, a matching invader. When a lymphocyte is activated, it secretes large numbers of molecules—antibodies—that can identify similar invaders. These antibodies go out on a seek-and-destroy mission throughout the body. An activated lymphocyte also divides at an increased rate, creating daughter lymphocytes that will help hunt out invaders and secrete antibodies against them. It also creates daughter lymphocytes that will hang around and remember the particular invader that was seen, thus giving the body immunity to pathogens that have been previously encountered.

One class of lymphocytes, called B cells (the B indicates that they develop in the bone marrow), has a remarkable property: the better the match between a B cell and an invader, the more antibody-secreting daughter cells the B cell creates. The daughter cells each differ slightly from the mother cell in random ways via mutations, and these daughter cells go on to create their own daughter cells in direct proportion to how well they match the invader. The result is a kind of Darwinian natural selection process, in which the match between B cells and invaders gradually gets better and better, until the antibodies being produced are extremely efficient at seeking and destroying the culprit microorganisms.
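The selection process described here can be sketched as a toy simulation. Everything in it is an illustrative assumption rather than immunology: receptors and invaders are short lists of bits, the "match" is the number of positions that agree, the population size is held fixed, and the function names and the mutation rate are hypothetical.

```python
import random


def match_score(receptor, invader):
    """Count the positions where receptor and invader agree."""
    return sum(r == v for r, v in zip(receptor, invader))


def mutate(receptor, rate, rng):
    """Copy a receptor, flipping each bit independently with probability rate."""
    return [1 - bit if rng.random() < rate else bit for bit in receptor]


def next_generation(population, invader, rate, rng):
    """Pick parents in proportion to match score, then mutate the daughters."""
    scores = [match_score(cell, invader) for cell in population]
    if sum(scores) == 0:
        # No cell matches at all: fall back to choosing parents uniformly.
        scores = [1] * len(population)
    parents = rng.choices(population, weights=scores, k=len(population))
    return [mutate(parent, rate, rng) for parent in parents]


def evolve(population, invader, generations, rate=0.02, seed=0):
    """Run the select-and-mutate loop for a number of generations."""
    rng = random.Random(seed)
    for _ in range(generations):
        population = next_generation(population, invader, rate, rng)
    return population


invader = [1, 0, 1, 1, 0, 0, 1, 0]
start = [[0] * 8 for _ in range(20)]
end = evolve(start, invader, generations=30)
print(sum(match_score(c, invader) for c in start) / len(start))
print(sum(match_score(c, invader) for c in end) / len(end))
```

The two printed numbers are the average match before and after. Because better-matching cells leave more daughters, the average tends to rise over generations, though in a run this small chance still plays a visible role.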

Many other types of cells participate in the orchestration of the immune response. T cells (which develop in the thymus) play a key role in regulating the response of B cells. Macrophages roam around looking for substances that have been tagged by antibodies, and they do the actual work of destroying the invaders. Other types of cells help effect longer-term immunity. Still other parts of the system guard against attacking the cells of one’s own body.

Like that of the brain and ant colonies, the immune system’s behavior arises from the independent actions of myriad simple players with no one actually in charge. The actions of the simple players—B cells, T cells, macrophages, and the like—can be viewed as a kind of chemical signal-processing network in which the recognition of an invader by one cell triggers a cascade of signals among cells that put into play the elaborate complex response. As yet many crucial aspects of this signal-processing system are not well understood. For example, it is still to be learned what, precisely, are the relevant signals, their specific functions, and how they work together to allow the system as a whole to “learn” what threats are present in the environment and to produce long-term immunity to those threats. We do not yet know precisely how the system avoids attacking the body; or what gives rise to flaws in the system, such as autoimmune diseases, in which the system does attack the body; or the detailed strategies of the human immunodeficiency virus (HIV), which is able to get by the defenses by attacking the immune system itself. Once again, a key question is how such an effective complex system arose in the first place in living creatures through biological evolution.

Economies

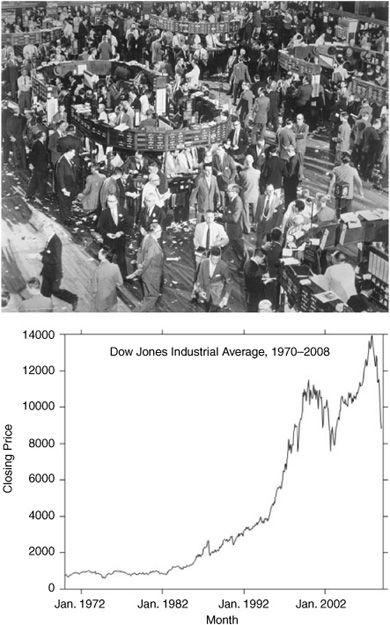

Economies are complex systems in which the “simple, microscopic” components consist of people (or companies) buying and selling goods, and the collective behavior is the complex, hard-to-predict behavior of markets as a whole, such as changes in the price of housing in different areas of the country or fluctuations in stock prices (figure 1.4). Economies are thought by some economists to be adaptive on both the microscopic and macroscopic level. At the microscopic level, individuals, companies, and markets try to increase their profitability by learning about the behavior of other individuals and companies. This microscopic self-interest has historically been thought to push markets as a whole—on the macroscopic level—toward an equilibrium state in which the prices of goods are set so there is no way to change production or consumption patterns to make everyone better off. In terms of profitability or consumer satisfaction, if someone is made better off, someone else will be made worse off. The process by which markets obtain this equilibrium is called market efficiency. The eighteenth-century economist Adam Smith called this self-organizing behavior of markets the “invisible hand”: it arises from the myriad microscopic actions of individual buyers and sellers.
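The equilibrium condition above (no change can make someone better off without making someone else worse off) can be stated as a small check. This is only a sketch under simplifying assumptions: each outcome is a tuple giving one number per participant (profit or satisfaction), the set of possible outcomes is a short explicit list, and the function names are hypothetical.

```python
def dominates(a, b):
    """True if outcome a leaves no one worse off than b and someone better off."""
    no_one_worse = all(x >= y for x, y in zip(a, b))
    someone_better = any(x > y for x, y in zip(a, b))
    return no_one_worse and someone_better


def is_efficient(outcome, alternatives):
    """True if no alternative improves on outcome without hurting anyone."""
    return not any(dominates(alt, outcome) for alt in alternatives)


# Three possible outcomes for two participants.
outcomes = [(3, 3), (4, 2), (2, 2)]
print(is_efficient((3, 3), outcomes))  # True
print(is_efficient((4, 2), outcomes))  # True: moving to (3, 3) hurts participant 1
print(is_efficient((2, 2), outcomes))  # False: (3, 3) is better for both
```

Note that more than one outcome can pass the check; the condition says nothing about which efficient outcome a market actually reaches.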

Economists are interested in how markets become efficient, and conversely, what makes efficiency fail, as it does in real-world markets. More recently, economists involved in the field of complex systems have tried to explain market behavior in terms similar to those used previously in the descriptions of other complex systems: dynamic hard-to-predict patterns in global behavior, such as patterns of market bubbles and crashes; processing of signals and information, such as the decision-making processes of individual buyers and sellers, and the resulting “information processing” ability of the market as a whole to “calculate” efficient prices; and adaptation and learning, such as individual sellers adjusting their production to adapt to changes in buyers’ needs, and the market as a whole adjusting global prices.

The World Wide Web

The World Wide Web came on the world scene in the early 1990s and has experienced exponential growth ever since. Like the systems described above, the Web can be thought of as a self-organizing social system: individuals, with little or no central oversight, perform simple tasks: posting Web pages and linking to other Web pages. However, complex systems scientists have discovered that the network as a whole has many unexpected large-scale properties involving its overall structure, the way in which it grows, how information propagates over its links, and the coevolutionary relationships between the behavior of search engines and the Web’s link structure, all of which lead to what could be called “adaptive” behavior for the system as a whole. The complex behavior emerging from simple rules in the World Wide Web is currently a hot area of study in complex systems. Figure 1.5 illustrates the structure of one collection of Web pages and their links. It seems that much of the Web looks very similar; the question is, why?

FIGURE 1.4. Individual actions on a trading floor give rise to the hard-to-predict large-scale behavior of financial markets. Top: New York Stock Exchange (photograph from Milstein Division of US History, Local History and Genealogy, The New York Public Library, Astor, Lenox, and Tilden Foundations, used by permission). Bottom: Dow Jones Industrial Average closing price, plotted monthly 1970-2008.

FIGURE 1.5. Network structure of a section of the World Wide Web. (Reprinted with permission from M.E.J. Newman and M. Girvan, Physical Review E, 69, 026113, 2004. Copyright 2004 by the American Physical Society.)

Common Properties of Complex Systems

When looked at in detail, these various systems are quite different, but viewed at an abstract level they have some intriguing properties in common:

1. Complex collective behavior: All the systems I described above consist of large networks of individual components (ants, B cells, neurons, stock-buyers, Website creators), each typically following relatively simple rules with no central control or leader. It is the collective actions of vast numbers of components that give rise to the complex, hard-to-predict, and changing patterns of behavior that fascinate us.

2. Signaling and information processing: All these systems produce and use information and signals from both their internal and external environments.

3. Adaptation: All these systems adapt—that is, change their behavior to improve their chances of survival or success—through learning or evolutionary processes.

Now I can propose a definition of the term complex system: a system in which large networks of components with no central control and simple rules of operation give rise to complex collective behavior, sophisticated information processing, and adaptation via learning or evolution. (Sometimes a differentiation is made between complex adaptive systems, in which adaptation plays a large role, and nonadaptive complex systems, such as a hurricane or a turbulent rushing river. In this book, as most of the systems I do discuss are adaptive, I do not make this distinction.)

Systems in which organized behavior arises without an internal or external controller or leader are sometimes called self-organizing. Since simple rules produce complex behavior in hard-to-predict ways, the macroscopic behavior of such systems is sometimes called emergent. Here is an alternative definition of a complex system: a system that exhibits nontrivial emergent and self-organizing behaviors. The central question of the sciences of complexity is how this emergent self-organized behavior comes about. In this book I try to make sense of these hard-to-pin-down notions in different contexts.

How Can Complexity Be Measured?

In the paragraphs above I have sketched some qualitative common properties of complex systems. But more quantitative questions remain: Just how complex is a particular complex system? That is, how do we measure complexity? Is there any way to say precisely how much more complex one system is than another?

These are key questions, but they have not yet been answered to anyone’s satisfaction and remain the source of many scientific arguments in the field. As I describe in chapter 7, many different measures of complexity have been proposed; however, none has been universally accepted by scientists. Several of these measures and their usefulness are described in various chapters of this book.

But how can there be a science of complexity when there is no agreed-on quantitative definition of complexity?

I have two answers to this question. First, neither a single science of complexity nor a single complexity theory exists yet, in spite of the many articles and books that have used these terms. Second, as I describe in many parts of this book, an essential feature of forming a new science is a struggle to define its central terms. Examples can be seen in the struggles to define such core concepts as information, computation, order, and life. In this book I detail these struggles, both historical and current, and tie them in with our struggles to understand the many facets of complexity. This book is about cutting-edge science, but it is also about the history of core concepts underlying this cutting-edge science. The next four chapters provide this history and background on the concepts that are used throughout the book.