BioBuilder: Synthetic Biology in the Lab (2015)

Chapter 4. Fundamentals of Bioethics

The field of ethics can provide individuals with tools to decide what it means to behave in moral and socially valued ways. By looking at behaviors through the lens of morals, ethics helps us to decide between conflicting choices. In this chapter we describe some recent illustrative examples in which ethical questions have come to bear on the field of synthetic biology, and then we connect the topics raised by these questions to several BioBuilder activities.

What Makes “Good Work”?

Imagine a researcher hard at work at a laboratory bench. In your imagination, that researcher might be wearing a bright-white lab coat and have a pipet in hand. The lab itself might be filled with glassware and equipment for mixing or separating or growing or measuring things. Maybe there are groups of people talking together in this imaginary lab, or maybe one researcher is working by a brightly lit window all alone.

From this imagined laboratory environment, can you tell if the researcher is doing “good work”? Despite the impressive level of detail you might have conjured in this imaginary environment, the superficial clues can’t tell you much about the intangible qualities of the effort. You can’t determine if it’s good work just because the imagined researcher is smiling or because there are awards hung on the laboratory walls and a pile of published articles on a desk. Those clues might reveal that a happy, accredited, and informed person is doing the work, but these clues alone can’t reflect the value of the work itself. So, what can guarantee that work is good work? The question is surprisingly complex and challenging to answer.

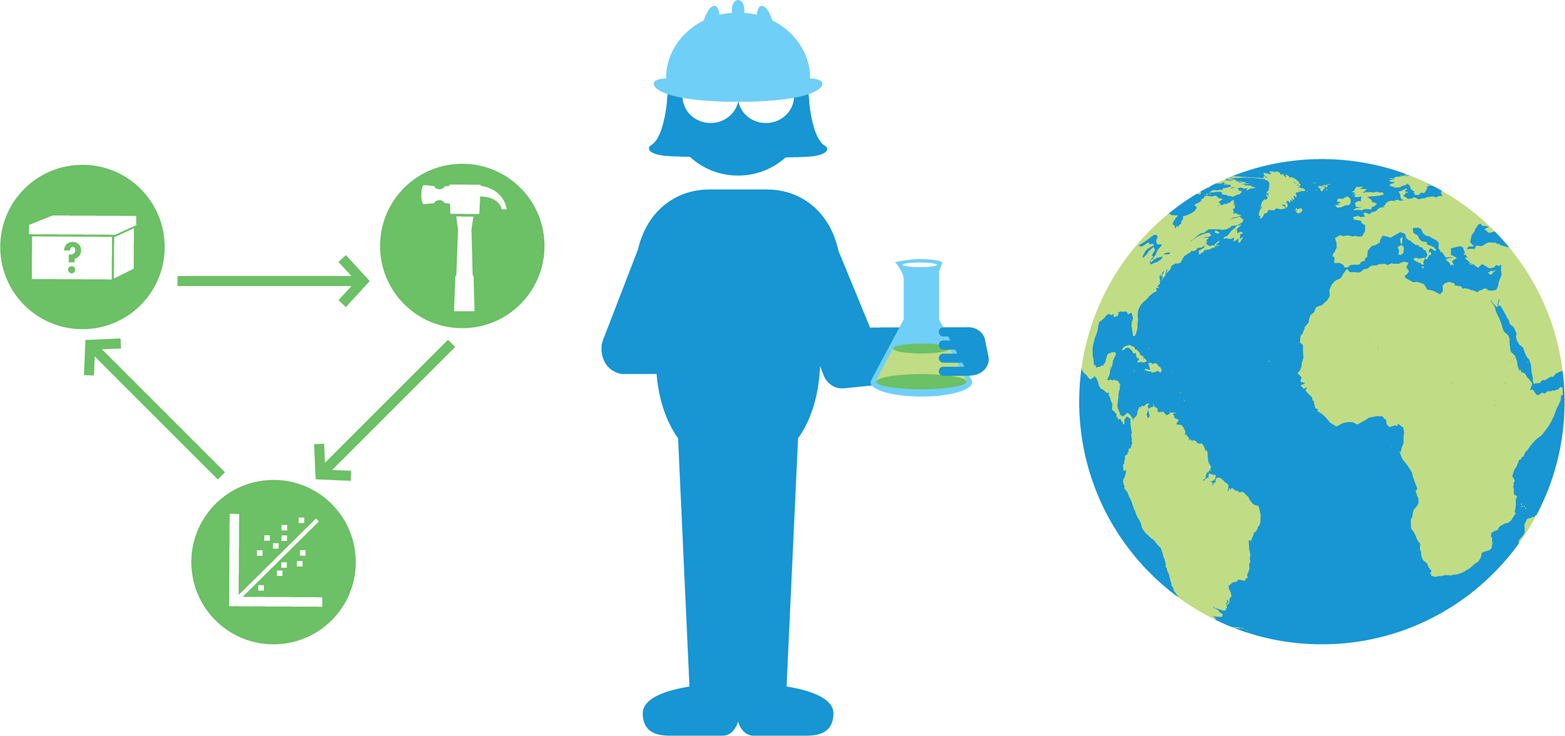

The opportunity to pursue personal commitments and concerns is one of the most satisfying things in life, says MIT’s Emeritus Professor of Linguistics Noam Chomsky. He suggests that satisfying work is found in creating something you value and in solving a difficult problem, and that this formula is as true for a research scientist as it is for a carpenter. One aspect of “good work” then might be having a satisfying job that offers the resources and opportunities to pursue challenging and creative solutions to problems that matter. But, even if you find a creative job that lets you think independently about a challenging question, you probably haven’t guaranteed yourself complete job satisfaction. For most people, good work includes coming up with high-quality answers to the challenges they face. Few of us would find it very satisfying to work on lousy solutions for excellent problems—even if we are paid well to do it.

Good work, then, must be engaging and excellent; it must also be ethical. Ethics guides our determination of what is right and wrong as a basis for making decisions about how to act, but not everyone agrees upon what constitutes ethical behavior. Even more, a single person’s definition of ethical behavior can change over time. For these reasons, society grapples with ethics in an ongoing and dynamic way. The field of bioethics has a long and rich history, which provides a conceptual foundation for current bioethical questions. Technologies change, but certain core principles of bioethics tend to remain relevant. For example, ethical decisions demonstrate respect for individuals and responsible stewardship, minimize harm while maximizing benefit, and consider issues of freedom, fairness, and justice. Later in this chapter, we explore these core principles in the context of several real-world examples. As you will see, ethics is an essential element of the definition of good work in science and engineering, where the outcomes don’t end at the laboratory bench.

Synthetic biology uses science and engineering to address real-world challenges. Inevitably, these technological solutions will be considered by a society filled with conflicting ideas and priorities. The individuals conducting synthetic biology research will also be faced with complex, and sometimes conflicting, obligations. Engineers, for example, have obligations to society, to their clients, and to their profession.

Many of the concerns that arise today around synthetic biology also arose in the 1970s with the advent of recombinant DNA (rDNA) technologies. To provide context for our discussion of ethics in synthetic biology, we will first consider a historical example from 1976, when rDNA techniques were first being used in labs at Harvard and MIT in Cambridge, Massachusetts. The residents of Cambridge and local elected officials held hearings to discuss the risks associated with this new genetic engineering technology. They ultimately placed a half-year moratorium on research while they assessed the risk. When the moratorium was lifted, research was allowed to continue under the guidance of a regulatory board that included both university officials and local citizens. The concerns raised in these hearings are echoed in many modern discussions about emerging technologies and continue to provide valuable insights.

In this chapter, we also consider some ethical questions that have arisen around specific projects in the still young field of synthetic biology. Without a doubt, there will be new examples to consider in the near future. Nonetheless, the examples listed here offer a starting point for some of the field’s ethical complexities. They reflect common ethical questions and reveal guiding ethical principles that apply to emerging technologies broadly. Finally, we spotlight a few of the ways that the BioBuilder activities and resources can be used to wrestle directly with these questions.

Regulating for Ethical Research

Synthetic biology has the potential to make lasting impacts on people’s lives and the planet as a whole. As with any emerging technology, though, it is not always easy to separate promise from peril. Each synthetic biology project idea must be considered for its merits and its risks. To a certain extent, scientists can choose what projects to work on based on personal preferences, but scientific research never exists in a vacuum. Funding, governmental regulations, and corporate interests all steer the direction of research: its goals, its applications, and its limits.

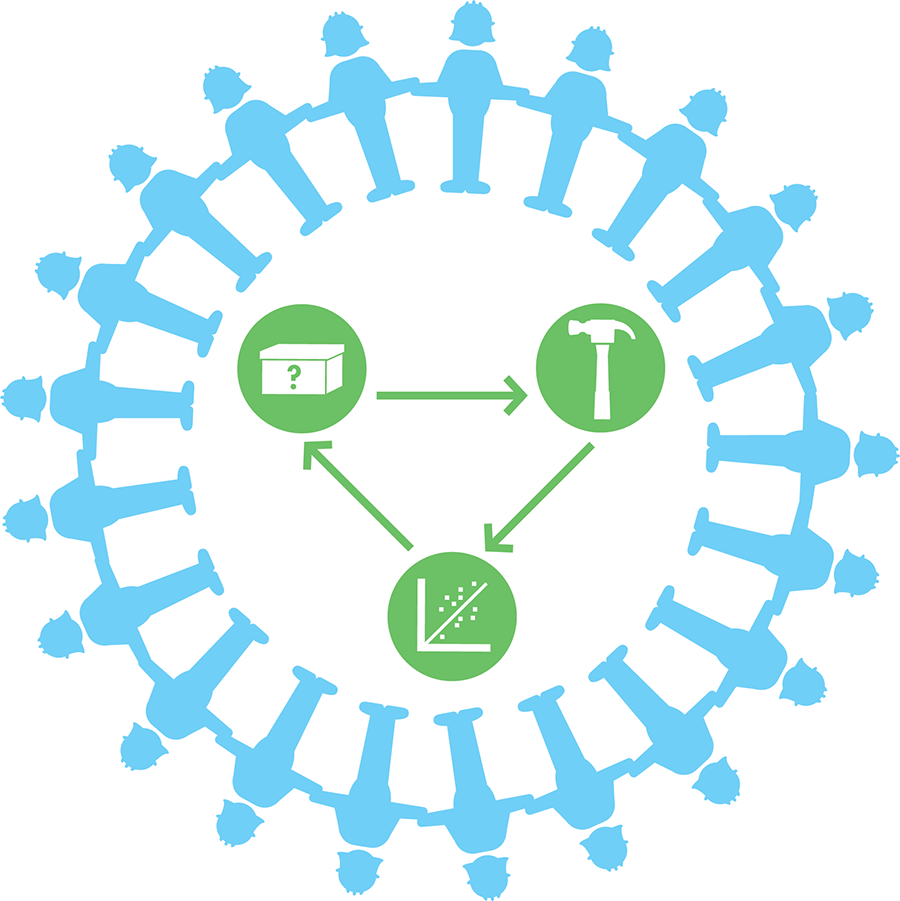

The future of synthetic biology depends upon meaningful conversation between researchers, government, and all the people whose lives stand to be affected.

One landmark in this conversation came in 2010 when United States President Barack Obama charged one of his advisory groups, the Presidential Commission for the Study of Bioethical Issues, to study and report on synthetic biology. This request came in response to the published claim by the J. Craig Venter Institute that they had “created life” by inserting a synthetically derived genome into a host cell, an example we detail more extensively a bit later. Although some news reports at the time equated Venter’s scientific advance with Frankenstein-like reanimation, the commission studied the field of synthetic biology more broadly and concluded that the capacity to make novel life forms from scratch was not in hand. Their analysis of the field led to 18 policy recommendations but no new regulations for synthetic biology research. The commission noted that the potential benefits of synthetic biology largely outweigh major risks. They did, however, caution that dangers could develop in the future and recommended “prudent vigilance” via ongoing federal oversight.

Synthetic biology is not the first biotechnology field to undergo governmental scrutiny. Similar discussions occurred in the 1970s as molecular biology became possible. The discussions at that time resulted in guidelines that are still applied to synthetic and rDNA work today. One important aspect of the development of these guidelines was that the scientists conducting the research initiated the conversation. For them, doing good and ethical work meant publicly asking difficult questions about research going on in their labs and the labs of their colleagues.

Scientific Advances Raise Ethical Questions

In the 1970s, several labs discovered ways to artificially combine DNA from different organisms and express those combinations in living cells. For example, Dr. Paul Berg at Stanford University discovered ways to use a modified monkey tumor virus to integrate new genes into bacteria. His success, however, raised some important concerns for himself and others. What if a scientist modified bacteria using these techniques and then accidentally ingested some of the transformed cells? Could the rDNA be transferred from one bacterial cell to other bacteria in the gut of a person? If the DNA were fully functional, could the monkey tumor virus cause an infection?

Until these risks and questions could be evaluated, the scientists working on rDNA in the 1970s voluntarily decided to suspend any further work that involved rDNA and animal viruses. This suspension of research activities was initiated through a letter of concern that was written to the National Academy of Sciences by a panel of scientists, including Dr. Maxine Singer, a researcher at the National Institutes of Health (NIH). The concerned scientists requested that the Academy establish a committee to consider the safety of ongoing research activities enabled by rDNA techniques. Berg, an early pioneer in these techniques, chaired the committee. The committee concluded that the technology’s “potential rather than demonstrated risk” was sufficient motivation to initiate a voluntary and temporary moratorium on rDNA experiments. They stopped experiments that involved making plasmids with novel antibiotic resistance genes, toxin-encoding genes, or animal virus DNA. Although the moratorium was voluntary, the request carried weight within the scientific community thanks to the authority of the committee members, among them Herbert Boyer and Stanley Cohen, who had pioneered rDNA cloning in bacteria, as well as James Watson, the co-discoverer of the DNA double-helix structure.

During the moratorium, scientists and policy makers worked together to identify risks and outline strategies to conduct research safely. Scientists famously convened at the Asilomar conference grounds outside Monterey, California, to prepare recommendations for the NIH, and these guidelines evolved into the biosafety levels that are still used today to describe and contain the riskiest experiments.

Public Response

Many researchers saw the outcome of the Asilomar meeting as a significant success that would help to ensure safe and responsible research. However, the attention that the moratorium attracted also had some unintended side effects, including raising fears both outside and within the scientific community about the research. At the time, most of the public’s attention to scientific research was directed toward military experiments and advances in physics that ushered in the Atomic Age. Until Asilomar, the risks associated with biology had not received much public attention. By spelling out their specific fears for rDNA research and advocating for a suspension of the work, biologists attracted quite a bit of attention and initiated a public debate.

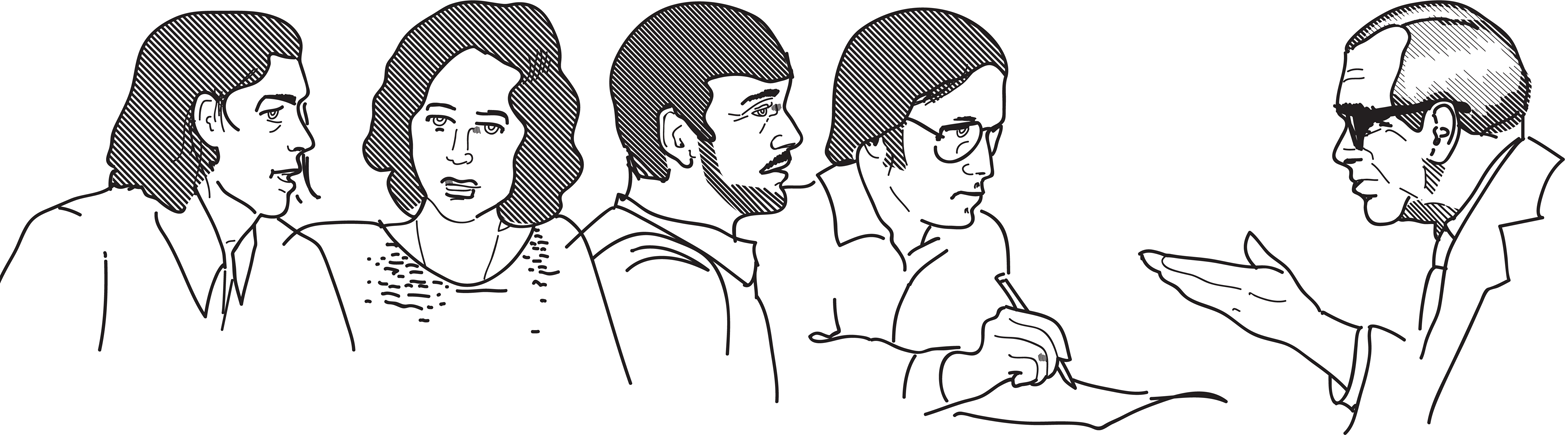

The 1976 Cambridge, Massachusetts City Council hearings offer a dramatic illustration of the public’s response to rDNA research. The mayor of Cambridge at that time, Alfred Vellucci, was upset to learn that Harvard University was planning to build a special lab for high-risk rDNA research right after the NIH released its guidelines for such research. Questioning what this lab could mean for his city and its constituents, Mayor Vellucci convened public hearings of the Cambridge City Council in which scientists were asked to testify about rDNA and its associated risks. The exchanges between public officials and scientists capture the apprehension and exasperation of those involved. Many of the questions are pertinent for any technology in its infancy, including synthetic biology. Three specific issues (risk assessment, self-regulation, and apprehension) are explored in the sections that follow, and the full video of the hearings documents other complex civic issues that arise when scientific capacities change.

Risk assessment: minimizing harm while maximizing good

Ethics guides us to weigh the risks of an action against its benefits, but one of the most complicated issues to arise during the Cambridge City Council hearings was the assessment of risk associated with rDNA research. Both the scientists and city officials tended toward conservative stances, but to different ends. The city officials wanted to minimize the potential harm to the citizens of Cambridge, which meant, at least for Mayor Vellucci, eliminating all risk. “Can you make an absolute 100 percent certain guarantee that there is no possible risk which might arise from this experimentation?” he asked the scientists. “Is there zero risk of danger?”

The testifying scientists, on the other hand, communicated their conservative views about the new technology by acknowledging that they could not make such absolute claims about risk. Because scientists are trained to speak in probabilities rather than absolutes, it was difficult for them to convey a level of certainty to the city council in the type of language that Mayor Vellucci sought. For example, Harvard University professor Mark Ptashne said, “I believe it to be the opinion of the overwhelming majority of microbiologists that there is in fact no significant risk involving experiments authorized to be done...” Professor Ptashne may have intended phrases like “overwhelming majority” and “no significant risk” to be strong statements of agreement, but they seem to have been heard as inconclusive by the mayor and the members of the city council.

“Now I know you don’t like to hear scientists telling you that there are risks involved, but they are extraordinarily low,” Professor Ptashne continued. “The risks are less than the typical kinds of risks you engage in every day, walking across the street.” In fact, nearly everything we do in our lives involves some kind of risk, and in making our decisions we weigh risks against the potential benefits while also doing what we can to minimize potential danger. Looking both ways before we step into the street is an example of protecting ourselves when conducting risky activities. By applying Mayor Vellucci’s original question, “Can you make an absolute 100 percent certain guarantee that there is no possible risk which might arise from this...?” to mundane activities such as going to work or eating dinner, the answer is never an unequivocal “yes.” Honest risk assessment, however, might not provide much comfort when facing a new and unknown set of potential dangers, such as those faced by the scientists and the public in the early days of rDNA experimentation.

Self-regulation versus government legislation: freedom, fairness, and justice

At the time of the Cambridge City Council hearings, not all scientists agreed that rDNA research was low risk. For some, the guidelines that came out of the Asilomar meeting were problematic. MIT professor Jonathan King and others did not trust that an agency such as the National Institutes of Health (NIH) could objectively evaluate the science it funded. “I’m personally super-upset that a person representing the National Institutes of Health came here and made their presentation on the side of going on,” he said. “I don’t think those guidelines were written by a set of people representing the public and all their interests ... They were the ones doing the experiments. There was no reason whatsoever to believe that it was a democratic representative of the people. Those guidelines are like having the tobacco industry write guidelines for tobacco safety.”

City council member David Clem echoed his concerns: “There is an important principle in this country that the people who have a vested self-interest in certain types of activity shouldn’t be the ones who are charged with not only promulgating it but regulating it. This country missed the boat with nuclear research and the atomic energy commission, and we’re going to find ourselves in one hell of a bind because we are allowing ourselves to allow one agency with a vested interest to initiate, fund, and encourage research, and yet we are assuming they are non-biased and have the ability to regulate that and, more importantly, to enforce their regulation.”

For others, though, the guidelines were sufficient to alleviate their concerns. “I have been concerned and I continue to be concerned,” said Dr. Maxine Singer, one of the authors of the original letter to the National Academy that spurred the moratorium. But, she continued, “I feel that these guidelines [for rDNA research] are a very responsible response to that concern. The whole deliberation within the NIH has been a very open process.”

The platforms for dialogue have changed dramatically since these conversations about rDNA research were held in the 1970s, but the tension between self-governance and legislation remains. Core values such as community health, personal security, and enhanced knowledge remain essential, but the rapid and widespread availability of information through the Internet has affected the dynamics of such conversations. Ideas and information can now be widely exchanged through websites, including blogs and Twitter feeds, in addition to Asilomar-like conferences and Cambridge City Council-like public hearings. Independent of venue, though, finding a balance between oversight that is prudent and oversight that is stifling remains central to ethical discussions around emerging technology and every community’s definition of “good work.”

Apprehension: respect for individuals

It is impossible to ignore the visceral feelings of unease many people have in response to new technologies, especially those involving life sciences. Tinkering with DNA and building novel organisms can call into question fundamental beliefs about the nature of life. The members of the Cambridge City Council did not explicitly state this concern, but several comments alluded to philosophical opposition. “I have made references to Frankenstein over the past week, and some people think this is all a big joke,” Mayor Vellucci said. “That was my way of describing what happens when beings are put together in a new way. This is a deadly serious matter... If worst comes to worst, we could have a major disaster on our hands.” Councilmember David Clem also conveyed his feelings about rDNA: “I don’t think you have any business doing it anyway. But that’s my personal opinion.”

This type of deep-seated apprehension about the very foundations of rDNA research then, and of synthetic biology research now, cannot be ignored. Scientists conducting the work might have concluded that there is nothing inherently wrong in modifying naturally occurring genetic material, but they must acknowledge that their work is influenced by and affects the society around them. Having unique expertise in a field comes with unique responsibilities, including continuous risk assessment and communication. Even when scientists are reasonably confident in their risk assessment, new data or circumstances could change that assessment over time. The synthetic biology case studies presented in the section that follows spotlight science in the context of society.

Three Synthetic Biology Case Studies

Laboratory policies for carrying out safe rDNA research are no longer hotly debated in public forums, but modern conversations around synthetic biology research echo some of the concerns raised in the past. Three projects that are associated with synthetic biology research are described in some detail here. Each has attracted public attention and scrutiny. The examples spotlight different bioethical concerns. Each example is well suited to the bioethics activities that are described later in this chapter.

Grow-Your-Own Glowing Plants

“What if we used trees to light our streets instead of electric streetlamps?” asked the introductory video for the Glowing Plant project when it was posted to the Kickstarter website in 2013. The developers of this project proposed to genetically engineer plants to generate light. Their project was inspired by other organisms, such as fireflies, jellyfish, and certain bacteria, that naturally generate light. The Glowing Plant developers chose to work with the gene that encodes luciferase, an enzyme from the firefly that produces a visible glow from its chemical substrate, luciferin. They also chose to work with the plant Arabidopsis thaliana, a common laboratory organism that is widely used for academic and industrial research. The introductory video that the developers made about the project describes their intention to insert luciferase into Arabidopsis as “a symbol of the future, a symbol of sustainability.” Many people agreed that the project was a worthy endeavor: the Glowing Plant development team obtained $484,013 of crowd-sourced funding from 8,433 backers through a fundraising campaign on the Kickstarter website.

Despite the lofty rhetoric and the project’s many supporters, detractors voiced their concerns about the safety and ethics of the project, publicly asking if it was being regulated appropriately. For decades, genetically modified crops have been controversial, with concerns raised about the safety of eating such products, the potential environmental and ecological impacts, and the restrictive business practices that sometimes surround the use of such crops. Protestors asked what might happen if the Glowing Plant seeds were to “escape” into the wild, potentially becoming an invasive species or otherwise disrupting naturally occurring ecosystems. To their credit, the researchers who were leading this project anticipated such concerns even in the very early stages of their project. For example, one of the researchers told the journal Nature that they were likely to use a version of the plant that requires a nutritional supplement, which would decrease the likelihood of uncontrolled spread. This assurance, however, was not sufficient to alleviate all the worries about the project. The What a Colorful World chapter considers chassis safety more extensively.

A second concern arose around the “do-it-yourself” ethos of the project. The glowing plant was being developed by individuals not affiliated with a university. The group was affiliated with a “biohacker” space, as opposed to a traditional and highly regulated academic or industrial research laboratory. Though at least one of the project leaders was a trained scientist, at least part of the take-away message from the Glowing Plant team seemed to be, “anyone can do this.” Indeed one of the stated goals of the project was to show how the promise of synthetic biology can be at anyone’s fingertips, leading to questions about how decisions should be made about which technologies should be developed and deployed. Their introductory video refers to their work as “a symbol to inspire others to create new living things.” Although synthetic biology’s potential for widespread engineering of new living things is viewed by some as empowering and full of opportunity, others see synthetic biology as providing some basic tools to do considerable harm, and the Glowing Plant project provided an early test case for the new capabilities in citizen science.

Because a bioengineering project such as the Glowing Plant project falls between the cracks of most of the established regulatory agencies, biohacker efforts have initiated important discussions about how to preserve the inviting and democratic nature of the field while also ensuring that appropriate precautions and regulatory oversight are maintained. Existing laws and regulations didn’t anticipate many people having the capacity to practice biotechnology. Even though it might be hard to imagine any serious harm from a glowing plant, the tools and goals of synthetic biology continue to push the boundaries of what is possible. It is therefore important for synthetic biology researchers to consider the ethical issues presented by early test cases such as Glowing Plants to anticipate future regulatory needs and make sure the field continues in a direction that promotes “good work.”

“Creating” Genomes

Thanks to improved DNA manipulation tools, synthetic biology labs can aim to construct new living systems such as the aforementioned glowing plant or a specialized microbe that produces a particular pharmaceutical compound or a biofuel. The improved tools also open up other possibilities, including new efforts to write entire genomes from scratch. With ever-increasing ease and speed, synthetic biologists can use digital sequence data rather than a physical template to synthesize the genomic DNA for any organism in a lab. The Fundamentals of Synthetic Biology chapter presents more details about sequencing and synthesis technologies.

The open sharing of DNA sequence data became commonplace in the late 1990s, as researchers began to collect massive amounts of genome sequencing data. It became clear that it would be inefficient and a waste of time and resources for researchers to hoard their sequence information. The solution was to create publicly available databases to house the information. That way anyone with an Internet connection could access sequence data for all kinds of organisms. DNA sequence information is openly available for many species of plants and animals, for commonly used drug resistance genes, and for human pathogens.

Based on all of this available genetic data, researchers can, in theory, build synthetically produced genomes that could encode new organisms from scratch. But, despite what Hollywood’s Jurassic Park might suggest, much more than genetic material is currently needed to successfully reconstitute a living organism. Nonetheless, the possibility of conjuring life from genetic data is both exciting and worrisome. The DNA sequence information for many organisms and pathogens is publicly available, and basic DNA synthesis technologies make it possible for anyone who can afford it to write genetic code. The potential to use this technology for good or evil is clear. Some fear that terrorists will release a devastating bioweapon by synthesizing a pathogen that has been eradicated or highly contained. Others see the potential for reanimating extinct species and enhancing our planet’s biodiversity. All of these possible applications raise a host of additional ethical, ecological, and sociological questions. For example, should access to digital sequence information or genome synthesizers be regulated in any way? Should researchers be required to obtain additional safety and ethical approval for certain experiments involving DNA synthesis? What might the ecological consequences be of resurrecting species that have been wiped out?

Although researchers are currently far from being able to generate complex organisms from genetic information alone—or, for that matter, even being able to stitch together the genomic DNA for such organisms—there are several instances of successfully synthesized virus genomes, enabled by the simpler genetics and architecture of these microscopic pathogens relative to those of free-living organisms. Viruses are essentially packets of genetic material surrounded by a protein coat. The viral genome operates by hijacking a host cell’s synthesis machinery, instructing it to generate more copies of the viral DNA and proteins. In other words, researchers can generate a functional virus just by chemically synthesizing DNA and passing the synthesized viral genome through a host cell.

An early example of this application came in 2002, when scientists at the State University of New York at Stony Brook reconstituted the genome for poliovirus from short, synthetically produced DNA fragments and showed that they could generate active virus. It required significant expertise to piece together the short fragments that had been purchased from a DNA synthesis company, but in the years since this project was completed, synthesis technology has improved, enabling production of longer DNA pieces and reducing the skill and effort necessary to complete this sort of work. The 2002 poliovirus work was conducted safely in a regulated, secure laboratory environment, but it is relatively easy to imagine how the technology could be dangerously applied by others working in different environments, with different aims.

Genome synthesis projects have also expanded beyond viruses to more complex, free-living bacterial cells. In 2010, researchers at the J. Craig Venter Institute showed they could synthesize a bacterial genome that, like a viral genome, could hijack the activities of a bacterial host cell. The Venter group chemically synthesized the Mycoplasma mycoides genome by ordering short snippets of DNA from a DNA synthesis company and then assembling the pieces into a full genome in the lab. They added a few detectable “watermarks” so that they could distinguish the synthesized DNA from the genome of the Mycoplasma capricolum host. The synthetic M. mycoides genome was successfully built and inserted into an M. capricolum host cell, eventually replacing the unique proteins and other components of the host species with those encoded by the synthetic genome. Importantly, the donor and host species both come from the same genus, Mycoplasma. This requirement for a close relation between the donor and the host illustrates one limitation on this type of work. Nonetheless, the researchers essentially converted M. capricolum into a different species, M. mycoides, with just DNA.

Even before Venter’s work, researchers and the public had been concerned about the public availability of DNA sequence data for many organisms and the increasing availability of DNA synthesis technology. The openness of public genetic information repositories also came under scrutiny when, in 2011, two labs developed a mutant strain of the H5N1 avian flu. The mutation they independently discovered allowed the virus to pass between mammals more easily than the naturally occurring strain can. When the research findings were announced, experts disagreed about whether the sequences of the mutant viruses should be published. Some believed that the genetic data could provide valuable insight into virus transmission and therefore should be shared. Others worried that bioterrorists or other bad actors could use this information to create a highly virulent, highly transmissible human flu. After much debate and a publication delay, the World Health Organization concluded that the sequences should be published. This sort of dilemma will undoubtedly surface again as physical DNA sequences can be made even more easily starting from digital and openly available information rather than any tangible starting material.

Another approach to limiting the possible synthesis of pathogens is to regulate the DNA synthesis companies themselves. The companies, however, are scattered all over the globe, making the authorization and enforcement of regulations a challenge. Instead, many of the DNA synthesis companies voluntarily participate in a safety protocol to screen all orders and question any requests to synthesize DNA similar to known pathogens before deciding whether to fulfill them.

The voluntary code of conduct followed by DNA synthesis companies might deter some malevolent individuals, but it is not foolproof. In 2006, British newspaper The Guardian illustrated a loophole in the system by ordering slightly modified DNA sequences for the highly regulated smallpox virus. The reporters from The Guardian found the complete DNA sequence of the virus on one of the many public repositories housing genetic sequence information and they ordered segments of the DNA to be synthesized and delivered to their newsroom. Thus, although the physical virus has been eradicated from all but two known and highly controlled laboratories, the reporters were able to obtain genetic material encoding the deadly smallpox virus. This news-making story raised awareness of the DNA synthesis industry but did not generate marked progress on the biosecurity questions. It remains challenging to strike the right balance to ensure that synthesis technologies and sequence information are secure enough to deter evil-doers but also accessible for legitimate research reasons, such as academic and industrial researchers ordering sequences from virulent pathogens to develop vaccines or other treatments against them.

Biosynthetic Drugs and the Economics of Public Benefit

One of the earliest successes of synthetic biology has been the microbial production of artemisinic acid, a valuable precursor to the antimalarial drug artemisinin, by the synthetic biology company Amyris. Since its antimalarial properties were discovered, artemisinin has been in high demand, but it is also somewhat difficult to produce. Historically it has been extracted from the Chinese wormwood plant, Artemisia annua, which naturally makes artemisinin, but farmers have struggled to provide a stable supply of leaves for this process, resulting in large fluctuations in supply and price. Believing that a reliable source of artemisinin would stabilize the drug’s market price and also better meet the demand, Professor Jay Keasling and his lab at the University of California, Berkeley worked to produce artemisinin synthetically. Using many of the foundational techniques in synthetic biology as well as established tools from the field of metabolic engineering, the lab was able to engineer bacterial and yeast strains that could produce the drug precursor. Finally, in 2013, ten years after the production company, Amyris, was founded, large-scale industrial production of synthetic artemisinin began.

Some have hailed this success as an example of the good that synthetic biology can do. Others, though, have expressed concern about the sociological and economic impacts of this work, principally regarding how it will affect the farmers who until now have produced the world’s supply of artemisinin. Despite the roller-coaster supply cycle the compound has experienced, some believe that the production environment might have stabilized in recent years and that the farmers might, in fact, be equipped to appropriately handle the growing demand for the drug. If true, the farmers could respond to shifts in supply and demand without causing huge fluctuations in the price of the drug, and the synthetic artemisinin could be considered an unnecessary threat to their livelihood.

Additional considerations are also debated. For example, some suggest that the farmers and land no longer needed to grow Chinese wormwood plants could be freed to grow much-needed food crops. Other questions persist about which artemisinin production process will ultimately yield the most inexpensive treatment, or whether expense is even the most important issue. Specifically, access to the treatment might be as important as, if not more important than, its cost, and barriers to access must be addressed with infrastructure and policy tools beyond producing the necessary compounds.

Despite its success and noble mission, the synthetic production of an antimalarial drug is not an open-and-shut case. There is continuing debate about which supply source will better serve both the patients in need of affordable, stable treatment and the farmers who need to make a living. Whereas the previous case studies focus primarily on issues of health, safety, and environmental soundness, this artemisinin case illustrates how synthetic biology applications can extend beyond science and medicine into complex world issues including culture and economics. The decision to pursue or support any project is a complex one, with researchers, funding agencies, and consumers all bearing some of the responsibility to determine what counts as “good work.”

Group Bioethics Activities

Bioethics and biodesign activities share many of the same challenges and opportunities. Neither one has a clearly “right” or “wrong” approach, and both are process driven rather than answer driven. In teaching bioethics, we want individuals to:

§ Recognize ethical dilemmas that arise from new advances.

§ Practice how to listen and assess differing viewpoints.

§ Propose and justify courses of action that resolve gaps in our policies or thinking.

Some resolutions will be obvious to everyone, and others will remain in dispute forever, but the sweet spot for teaching comes in that vast middle area.

Here, we offer one way to use synthetic biology to introduce bioethics. The BioBuilder content begins with modeling the difficult process of decision making using a video of the Cambridge City Council hearings on rDNA research from 1976. The activity, though, can be adapted for any of the case studies presented in this chapter or from other real-world examples you might find in the news. You can find additional activities in greater detail with the BioBuilder Bioethics online material, which includes learning objectives and assessment guides. Another excellent resource is the NIH’s curriculum supplement “Exploring Bioethics.” However you approach this process, we hope that the activities can encourage individuals to ask some complex questions and embrace their multiple roles as professionals and citizens.

Bioethics Involves a Decision-Making Process

The emergence of new biological technologies, like the rDNA capabilities in the 1970s and the synthetic biology tools of this era, makes it possible for us to alter living systems in ways we’ve never been able to do before. Whereas most of the BioBuilder activities address how to do synthetic biology, this section focuses on what we should do with synthetic biology. To answer “should” rather than “how” questions, we turn to the field of ethics, which provides a process for making decisions about morally complicated issues. In the following section, we outline one approach to asking the “should we” questions.

Identifying the ethical question

Ethics gives us a process to tackle difficult questions by carefully considering an issue from multiple perspectives. The first step in this process is to identify the ethical components of a particular scenario. “Should” questions are one hallmark. For instance, “Should scientists build novel organisms?” is clearly a bioethical question, whereas “Is it safe to build novel organisms?” is a scientific one. Other types of questions might be legal (“Will I be fined or jailed if I build novel organisms?”) or matters of personal preference (“What kind of research should I do?”). Distinguishing between questions of personal preference and ethical questions can become quite tricky because they can both include “should” questions. One key difference is how the decisions impact other people. When others have a stake in the outcome, the question becomes ethical rather than merely a matter of preference.

Identifying the stakeholders

After identifying the ethical questions central to an issue, the next step involves identifying who or what could be affected by the outcome. These “stakeholders” can include the government, academia, corporations, citizens, or nonpersons such as the environment. Considering an issue from many perspectives is a critical aspect of ethical decision making. It is not always possible to satisfy all stakeholders, so determining whose interests are protected is a major part of the process.

Connecting facts with guiding ethical principles

The next stage of the process involves outlining both the relevant facts and guiding ethical considerations. Both scientific and social facts are important for making bioethical decisions. When deciding whether scientists should build novel organisms, it is important to consider scientific facts about safety alongside social facts about whether particular groups of people might be disproportionately at risk. When information about an issue is incomplete, additional research might be required to make an informed decision. Alternatively, decisions are made based on the best available knowledge but incorporate enough flexibility for change when new information comes to light. Relevant facts are fairly specific to a particular situation, but guiding ethical considerations are often more widely applicable. They reflect the kind of society we live in or at least the one to which we aspire. Some common core principles guiding ethical decision-making include respect for individuals, minimizing harm while maximizing benefit, and fairness. We might choose to prioritize these core principles differently or include other relevant principles, but many ethical decisions incorporate one or more of these ideas.

Finally, it’s time to base a decision on the perspectives you’ve considered. In the real world, ethics are more than thought exercises. Decisions can affect public policies and laws. Such consequences are, after all, the point of the ethical deliberations. And although we rarely agree unanimously on ethical issues as a society, we can justify our own reasoning using this process.

The Cambridge City Council hearings: bioethics in action

When former Cambridge, Massachusetts, Mayor Alfred Vellucci told the assembled panel of scientists in the 1976 Cambridge City Council hearings to “refrain from using the alphabet,” he was asking the scientists to avoid using jargon, but he also provided a slogan for the divide between science and society. You can watch the 30-minute video of the hearings, “Hypothetical Risk: Cambridge City Council’s Hearings on Recombinant DNA Research,” from the MIT 150 website. The exchanges in the video highlight many relevant aspects of any emerging technology, not only rDNA.

Before watching the video, it’s helpful to review what is meant by “recombinant DNA,” which is DNA spliced together from different natural or synthetic sources (see the Fundamentals of Synthetic Biology and Fundamentals of DNA Engineering chapters for detailed explanations), and “biosafety levels,” which are laboratory designations that describe the procedures necessary to contain dangerous biological materials (for more information about this, read the following sidebar as well as additional resources on the NIH and the BioBuilder websites).

BIOSAFETY LEVELS

The National Institutes of Health (NIH) uses four biosafety levels to categorize labs containing hazardous biological materials. The levels that were called biohazard levels P1, P2, P3, and P4 in the 1970s are now called biosafety levels (BSL) 1, 2, 3, and 4. BSL1 (or BL1 and historically P1) denotes labs containing the least dangerous organisms and materials, whereas BL4 labs contain the most dangerous agents. Each level specifies the precautions and procedures required to minimize risk for that setting. For example, BL1 labs use only well-studied organisms that are not known to cause disease in healthy adults. BL1 labs include typical high school and undergraduate teaching labs. In these labs, scientists wear gloves, lab coats, and goggles, as necessary. BL2 labs are suitable for working with bacteria and viruses causing nonlethal diseases, such as Lyme disease or Salmonella infections. BL2 labs are also suitable for work with human cells and cell lines. These labs have specialized equipment to physically contain experiments and keep pathogens from spreading into “clean” zones. BL3 labs are required when scientists are working with treatable but dangerous pathogens, such as the bacterium that causes bubonic plague and the viruses that cause SARS and West Nile encephalitis. Scientists in BL3 labs are required to use highly specialized protective equipment and procedures. For example, air from the lab is filtered before being exhausted outdoors, and overall access to the lab is highly restricted. BL4 labs contain infectious agents with the highest risk for causing life-threatening illnesses. BL4 labs are places where research is conducted on the world’s most dangerous pathogens such as Ebola virus. BL4 labs are rare and are often housed in an isolated building to protect neighboring communities.

To set the stage for the activity described here, imagine that it is three years after the scientific community raised concern about the safety of recombinant DNA experiments. It is one month after the NIH issued guidelines to regulate recombinant DNA work. The Cambridge City Council is meeting with scientists from nearby institutions to discuss the consequences of these guidelines for the community surrounding the laboratories and to consider additional resolutions and actions that the city might take to ensure the safety of its citizens. The hearings were recorded at Cambridge City Hall in 1976, and though the audio and video quality have degraded, the recording provides an invaluable window into the dynamics of these debates, showing the human side of what can sometimes, in retrospect, look like merely academic debates or be oversimplified as over-emotional public reaction.

After watching the Cambridge City Council videotape, hand out copies of the transcript provided on the BioBuilder website and at the end of this chapter. Review the cast of characters as a group. Then, divide into smaller groups with each group assigned one of the major players. Each group should assign a notetaker to record how their character responded (or didn’t respond) to the following questions:

§ Can rDNA experiments produce dangerous organisms?

§ Should rDNA experiments be regulated by the NIH?

§ Should rDNA experiments be regulated by the Cambridge City Council?

§ Do “routine” experiments with rDNA endanger the researcher performing the experiments?

§ Do “routine” experiments with rDNA endanger the citizens of Cambridge?

§ Are there benefits to rDNA research?

§ Should rDNA research be allowed in Cambridge?

Record whether each character decided 1) that rDNA research should continue under the guidelines already established by the NIH, or 2) that a moratorium should be placed on all rDNA experiments in the city of Cambridge until further investigations occurred.

Introduce the idea of a stakeholder as a person who stands to be affected by the outcome of the decision. What kinds of stakeholders are featured in the video? Some examples might include scientist, citizen, elected government official, appointed government official, environmental advocate, academic, fisherman, and so on.

Assign each character his/her primary stakeholder role and then report out on the following questions:

§ What was your character’s primary stakeholder role?

§ Did you all agree immediately which stakeholder role to assign your character?

§ What were some of the secondary roles you considered?

As a group, review how the stakeholders’ roles align with the characters’ decisions. Do stakeholders from one group all share the same opinion? The discussion can be further facilitated using one or more of the following focus topics:

Risk assessment

How do the different stakeholders approach risk assessment of rDNA research?

Detection and surveillance of threats

How do the stakeholders’ background knowledge and experience affect the way they detect threats? How does direct access to and control of the potential threat impact the perception of that threat?

Emergency scenarios and preparedness

What is a reasonable level of preparedness for emergency scenarios? What can be done to agree on a reasonable level of preparation for rare but terrible possible situations? How can the trade-offs for such preparation be identified and discussed?

Discourse and rhetoric

How do differences in the language used by the scientists versus the city councilors reflect their differing points of view? Is it just a difference in language or are the meanings truly distinct?

Self-regulation versus government legislation

What are the pros and cons of self-regulation versus government legislation for the scientific community? Do they differ for local citizens?

The scientific process

How does the scientific process differ from legal or civic processes? In what ways are they similar? How does the iterative nature of the scientific process, wherein evidence builds but does not “prove” an idea, lead to differences in expectations? Would a better understanding of the scientific process help with the discussion? If possible, how could a common understanding be reached?

Stakeholder Activity

In this activity, groups or individuals put themselves in the stakeholders’ shoes to practice bioethical decision-making. It can accompany any of the case studies presented here or another of your choosing. Although the activity can stand alone, starting with the earlier section “The Cambridge City Council hearings: bioethics in action” introduces the concept of stakeholders and models the process of ethical decision-making.

Review the idea that bioethics is a process that can be framed by asking the following questions:

What is the ethical question?

One key feature of an ethical question is that its outcome stands to have a negative impact on an individual or group.

What are the relevant facts?

Consider that scientific, sociological, historical, and all other types of facts can be important.

Who or what could be affected by the way the question is resolved?

These are the stakeholders. Write each on a notecard to distribute so each person is assigned one.

What are the relevant ethical considerations?

Respect for persons, minimizing harms while maximizing benefits, and fairness are the core principles we discussed, but this list is by no means exhaustive.

Begin by choosing a case study from this chapter or from the news and then address the preceding key questions. Depending on your setting, it might be more appropriate to provide answers to one or all of the questions. With more advanced groups it might be more sensible to consider the questions together and then collectively arrive at a set of answers.

Next, divide into groups and assign each group a stakeholder role; for example, all government officials are in one group. Other stakeholder roles could be based on affiliation with science, industry, civil society, policy, education, art, or other areas. For the purposes of this activity, it is best to limit the number of stakeholders to four or five, because this will determine the number of small groups. This can be done by eliminating all but the most directly affected stakeholders or by creating broader categories; for example, grouping together elected and appointed government officials or combining consumers, investors, and children into one “citizen” category. In these groups, take time to discuss the ethical question from the perspective of that stakeholder. Groups should take into account the relevant facts and ethical considerations, such as respect for persons, minimizing harm/maximizing benefit, fairness, and any others that might come to mind. Each group should arrive at a collective decision for the stakeholder’s preferred course of action and supporting justifications.

Next, groups will shuffle such that every group has a representative from each stakeholder role; for example, one government official, one scientist, one citizen, and one corporate leader are all in a group. In this mixed group, the ethical questions should be discussed again, taking into account the relevant facts and ethical considerations from the point of view of their assigned stakeholder role. Each group should arrive at a decision for the preferred course of action, but reaching a decision this time might require a vote because each person will have distinct perspectives and interests to protect. Have representatives from each group share their decisions with the larger group. Did all the groups reach the same conclusions?
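For facilitators who keep a roster electronically, the reshuffling step above can be sketched in a few lines of Python. This is one possible way to form the mixed groups, not a prescribed part of the activity. The function name `mixed_groups` and the participant labels are hypothetical, and the choice to create as many mixed groups as the smallest stakeholder group has members is an assumption made so that every mixed group includes every role.

```python
from collections import defaultdict


def mixed_groups(assignments):
    """Regroup participants so each new group has every stakeholder role.

    assignments maps a participant's name to a stakeholder role.
    Returns a list of groups, each a list of (name, role) pairs.
    """
    by_role = defaultdict(list)
    for person, role in assignments.items():
        by_role[role].append(person)
    if not by_role:
        return []

    # The smallest stakeholder group limits how many mixed groups
    # can each receive a representative of every role.
    n_groups = min(len(members) for members in by_role.values())
    groups = [[] for _ in range(n_groups)]

    # Deal the members of each role out to the groups in turn;
    # any extra members simply double up in a group.
    for role, members in by_role.items():
        for i, person in enumerate(members):
            groups[i % n_groups].append((person, role))
    return groups


# Hypothetical roster: two participants in each of four roles.
roster = {
    "P1": "government official", "P2": "government official",
    "P3": "scientist", "P4": "scientist",
    "P5": "citizen", "P6": "citizen",
    "P7": "corporate leader", "P8": "corporate leader",
}
for group in mixed_groups(roster):
    print(group)
```

With uneven stakeholder groups, some mixed groups end up with two people sharing a role, which is usually acceptable for the discussion and for the vote that follows.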

Finally, to connect their personal thinking to that of the stakeholder groups, individuals can share or write a follow-up statement describing whether their courses of action or reasoning changed when moving from a uniform group of stakeholders to a group that was mixed. Is there a connection between their personal views and the views expressed by their stakeholder identity? Could they see their personal viewpoints changing if they ultimately found themselves in one of these stakeholder careers?

Additional Reading and Resources

§ The “Belmont Report”: National Commission for the Protection of Human Subjects of Biomedical and Behavioral Research. (1979) Ethical Principles and Guidelines for the Protection of Human Subjects of Research.

§ Berg, P., Singer, M.F. The recombinant DNA controversy: twenty years later. Proc Natl Acad Sci USA. 1995;92(20):9011-3.

§ Cello, J., Paul, A.V., Wimmer, E. Chemical Synthesis of Poliovirus cDNA: Generation of Infectious Virus in the Absence of Natural Template. Science 2002;297(5583):1016-8.

§ Gibson, D.G. et al. Creation of a bacterial cell controlled by a chemically synthesized genome. Science 2010;329(5987):52-6.

§ Randerson, J. Did anyone order smallpox? The Guardian, 2006. (http://bit.ly/order_smallpox).

§ Rogers, M. The Pandora’s box Congress. Rolling Stone 1975;18:19.

§ Website: DIYbio Code of Ethics (http://diybio.org/codes/).

§ Website: Glowing plant reference (http://www.glowingplant.com/).

§ Website: MIT 150 Website: “Hypothetical Risk: Cambridge City Council’s Hearings on Recombinant DNA Research” (http://bit.ly/hypothetical_risk).

§ Website: NIH’s “Exploring Bioethics” (http://bit.ly/exploring_bioethics).

§ Website: NIH Biosafety guidelines (http://bit.ly/biosafety_guidelines).