The Beginning of Infinity: Explanations That Transform the World - David Deutsch (2011)

Chapter 17. Unsustainable

Easter Island in the South Pacific is famous mainly - let’s face it, only - for the large stone statues that were built there many centuries ago by the islanders. The purpose of the statues is unknown, but is thought to be connected with an ancestor-worshipping religion. The first settlers may have arrived on the island as early as the fifth century CE. They developed a complex Stone Age civilization, which suddenly collapsed over a millennium later. By some accounts there was starvation, war and perhaps cannibalism. The population fell to a small fraction of what it had been, and their culture was lost.

The prevailing theory is that the Easter Islanders brought disaster upon themselves, in part by chopping down the forest which had originally covered most of the island. They eliminated the most useful species of tree altogether. This is not a wise thing to do if you rely on timber for shelter, or if fish form a large part of your diet and your boats and nets are made of wood. And there were knock-on effects such as soil erosion, precipitating the destruction of the environment on which the islanders had depended.

Some archaeologists dispute this theory. For example, Terry Hunt has concluded that the islanders arrived only in the thirteenth century, and that their civilization continued to function throughout the deforestation (which he attributes to rats, not tree-felling) until it was destroyed by epidemics, caused by contact with Europeans. However, I do not want to discuss whether the prevailing theory is accurate, but only to use it as an example of a common fallacy - an argument by analogy about issues far less parochial.

Easter Island is 2,000 kilometres from the nearest habitation, namely Pitcairn Island (where the Bounty’s crew took refuge after their famous mutiny). Both islands are far from anywhere, even by today’s standards. Nevertheless, in 1972 Jacob Bronowski made his way to Easter Island to film part of his magnificent television series The Ascent of Man. He and his film crew travelled by ship all the way from California, a round trip of some 14,000 kilometres. He was in poor health, and the crew had literally to carry him to the location for filming. But he persevered because those distinctive statues were the perfect setting for him to deliver the central message of his series - which is also a theme of this book - that our civilization is unique in history for its capacity to make progress. He wanted to celebrate its values and achievements, and to attribute the latter to the former, and to contrast our civilization with the alternative as epitomized by ancient Easter Island.

The Ascent of Man had been commissioned by the naturalist David Attenborough, then controller of the British television channel BBC2. A quarter of a century later Attenborough - who had by then become the doyen of natural-history film-making - led another film crew to Easter Island, to film another television series, The State of the Planet. He too chose those grim-faced statues as a backdrop, for his closing scene. Alas, his message was almost exactly the opposite of Bronowski’s.

The philosophical difference between these two great broadcasters - so alike in their infectious sense of wonder, their clarity of exposition, and their humanity - was immediately evident in their different attitudes towards those statues. Attenborough called them ‘astonishing stone sculptures … vivid evidence of the technological and artistic skills of the people who once lived here’. Now, I wonder whether Attenborough was really all that impressed by the islanders’ skills, which had been exceeded millennia earlier in other Stone Age societies. I expect he was being polite, for it is de rigueur in our culture to heap praise upon any achievement of a primitive society. But Bronowski refused to conform to that convention. He remarked, ‘People often ask about Easter Island, How did men come here? They came here by accident: that is not in question. The question is, Why could they not get off?’ And why, he might have added, did others not follow to trade with them (there was a great deal of trade among Polynesians other than Easter Islanders), or to rob them, or to learn from them? Because they did not know how.

As for the statues being ‘vivid evidence of … artistic skills’, Bronowski was having none of that either. To him they were vivid evidence of failure, not success:

The critical question about these statues is, Why were they all made alike? You see them sitting there, like Diogenes in their barrels, looking at the sky with empty eye-sockets, and watching the sun and the stars go overhead without ever trying to understand them. When the Dutch discovered this island on Easter Sunday in 1722, they said that it had the makings of an earthly paradise. But it did not. An earthly paradise is not made by this empty repetition … These frozen faces, these frozen frames in a film that is running down, mark a civilization which failed to take the first step on the ascent of rational knowledge.

The Ascent of Man (1973)

The statues were all made alike because Easter Island was a static society. It never took that first step in the ascent of man - the beginning of infinity.

Of the hundreds of statues on the island, built over the course of several centuries, fewer than half are at their intended destinations. The rest, including the largest, are in various stages of completion, with as many as 10 per cent already in transit on specially built roads. Again there are conflicting explanations, but, according to the prevailing theory, it is because there was a large increase in the rate of statue-building just before it stopped for ever. In other words, as disaster loomed, the islanders diverted ever more effort not into addressing the problem - for they did not know how to do that - but into making ever more and bigger (but very rarely better) monuments to their ancestors. And what were those roads made of? Trees.

When Bronowski made his documentary, there were as yet no detailed theories of how the Easter Island civilization fell. But, unlike Attenborough, he was not interested in that, because his whole purpose in going to Easter Island was to point out the profound difference between our civilization and civilizations like the one that built those statues. We are not like them was his message. We have taken the step that they did not. Attenborough’s argument rests on the opposite claim: we are like them and are following headlong in their footsteps. And so he drew an extended analogy between the Easter Island civilization and ours, feature for feature, and danger for danger:

A warning of what the future could hold can be seen on one of the remotest places on Earth … When the first Polynesian settlers landed here they found a miniature world that had ample resources to sustain them. They lived well …

The State of the Planet (BBC TV, 2000)

A miniature world: there, in three words, is Attenborough’s reason for travelling all the way to Easter Island and telling its story. He believed that it holds a warning for the world because Easter Island was itself a miniature world - a Spaceship Earth - that went wrong. It had ‘ample resources’ to sustain its population, just as the Earth has seemingly ample resources to sustain us. (Imagine how amazed Malthus would have been had he known that the Earth’s resources would still be called ‘ample’ by pessimists in the year 2000.) Its inhabitants ‘lived well’, just as we do. And yet they were doomed, just as we are doomed unless we change our ways. If we do not, here is ‘what the future could hold’:

The old culture that had sustained them was abandoned and the statues toppled. What had been a rich, fertile world in miniature had become a barren desert.

Again, Attenborough puts in a good word for the old culture: it ‘sustained’ the islanders (just as the ample resources did, until the islanders failed to use them sustainably). He uses the toppling of the statues to symbolize the fall of that culture, as if to warn of future disaster for ours, and he reiterates his world-in-miniature analogy between the society and technology of ancient Easter Island and that of our whole planet today.

Thus Attenborough’s Easter Island is a variant of Spaceship Earth: humans are sustained jointly by the ‘rich, fertile’ biosphere and the cultural knowledge of a static society. In this context, ‘sustain’ is an interestingly ambiguous word. It can mean providing someone with what they need. But it can also mean preventing things from changing - which can be almost the opposite meaning, for the suppression of change is seldom what human beings need.

The knowledge that currently sustains human life in Oxfordshire does so only in the first sense: it does not make us enact the same, traditional way of life in every generation. In fact it prevents us from doing so. For comparison: if your way of life merely makes you build a new, giant statue, you can continue to live afterwards exactly as you did before. That is sustainable. But if your way of life leads you to invent a more efficient method of farming, and to cure a disease that has been killing many children, that is unsustainable. The population grows because children who would have died survive; meanwhile, fewer of them are needed to work in the fields. And so there is no way to continue as before. You have to live the solution, and to set about solving the new problems that this creates. It is because of this unsustainability that the island of Britain, with a far less hospitable climate than the subtropical Easter Island, now hosts a civilization with at least three times the population density that Easter Island had at its zenith, and at an enormously higher standard of living. Appropriately enough, this civilization has knowledge of how to live well without the forests that once covered much of Britain.

The Easter Islanders’ culture sustained them in both senses. This is the hallmark of a functioning static society. It provided them with a way of life; but it also inhibited change: it sustained their determination to enact and re-enact the same behaviours for generations. It sustained the values that placed forests - literally - beneath statues. And it sustained the shapes of those statues, and the pointless project of building ever more of them.

Moreover, the portion of the culture that sustained them in the sense of providing for their needs was not especially impressive. Other Stone Age societies have managed to take fish from the sea and sow crops without wasting their efforts in endless monument-building. And, if the prevailing theory is true, the Easter Islanders started to starve before the fall of their civilization. In other words, even after it had stopped providing for them, it retained its fatal proficiency at sustaining a fixed pattern of behaviour. And so it remained effective at preventing them from addressing the problem by the only means that could possibly have been effective: creative thought and innovation. Attenborough regards the culture as having been very valuable and its fall as a tragedy. Bronowski’s view was closer to mine, which is that since the culture never improved, its survival for many centuries was a tragedy, like that of all static societies.

Attenborough is not alone in drawing frightening lessons from the history of Easter Island. It has become a widely adduced version of the Spaceship Earth metaphor. But what exactly is the analogy behind the lesson? The idea that civilization depends on good forest management has little reach. But the broader interpretation, that survival depends on good resource management, has almost no content: any physical object can be deemed a ‘resource’. And, since problems are soluble, all disasters are caused by ‘poor resource management’. The ancient Roman ruler Julius Caesar was stabbed to death, so one could summarize his mistake as ‘imprudent iron management, resulting in an excessive build-up of iron in his body’. It is true that if he had succeeded in keeping iron away from his body he would not have died in the (exact) way he did, yet, as an explanation of how and why he died, that ludicrously misses the point. The interesting question is not what he was stabbed with, but how it came about that other politicians plotted to remove him violently from office and that they succeeded. A Popperian analysis would focus on the fact that Caesar had taken vigorous steps to ensure that he could not be removed without violence. And then on the fact that his removal did not rectify, but actually entrenched, this progress-suppressing innovation. To understand such events and their wider significance, one has to understand the politics of the situation, the psychology, the philosophy, sometimes the theology. Not the cutlery. The Easter Islanders may or may not have suffered a forest-management fiasco. But, if they did, the explanation would not be about why they made mistakes - problems are inevitable - but why they failed to correct them.

I have argued that the laws of nature cannot possibly impose any bound on progress: by the argument of Chapters 1 and 3, denying this is tantamount to invoking the supernatural. In other words, progress is sustainable, indefinitely. But only by people who engage in a particular kind of thinking and behaviour - the problem-solving and problem-creating kind characteristic of the Enlightenment. And that requires the optimism of a dynamic society.

One of the consequences of optimism is that one expects to learn from failure - one’s own and others’. But the idea that our civilization has something to learn from the Easter Islanders’ alleged forestry failure is not derived from any structural resemblance between our situation and theirs. For they failed to make progress in practically every area. No one expects the Easter Islanders’ failures in, say, medicine to explain our difficulties in curing cancer, or their failure to understand the night sky to explain why a quantum theory of gravity is elusive to us. The Easter Islanders’ errors, both methodological and substantive, were simply too elementary to be relevant to us, and their imprudent forestry, if that is really what destroyed their civilization, would merely be typical of their lack of problem-solving ability across the board. We should do much better to study their many small successes than their entirely commonplace failures. If we could discover their rules of thumb (such as ‘stone mulching’ to help grow crops on poor soil), we might find valuable fragments of historical and ethnological knowledge, or perhaps even something of practical use. But one cannot draw general conclusions from rules of thumb. It would be astonishing if the details of a primitive, static society’s collapse had any relevance to hidden dangers that may be facing our open, dynamic and scientific society, let alone what we should do about them.

The knowledge that would have saved the Easter Islanders’ civilization has already been in our possession for centuries. A sextant would have allowed them to explore their ocean and bring back the seeds of new forests and of new ideas. Greater wealth, and a written culture, would have enabled them to recover after a devastating plague. But, most of all, they would have been better at solving problems of all kinds if they had known some of our ideas about how to do that, such as the rudiments of a scientific outlook. Such knowledge would not have guaranteed their welfare, any more than it guarantees ours. Nevertheless, the fact that their civilization failed for lack of what ours discovered long ago cannot be an ominous ‘warning of what the future could hold’ for us.

This knowledge-based approach to explaining human events follows from the general arguments of this book. We know that achieving arbitrary physical transformations that are not forbidden by the laws of physics (such as replanting a forest) can only be a matter of knowing how. We know that finding out how is a matter of seeking good explanations. We also know that whether a particular attempt to make progress will succeed or not is profoundly unpredictable. It can be understood in retrospect, but not in terms of factors that could have been known in advance. Thus we now understand why alchemists never succeeded at transmutation: because they would have had to understand some nuclear physics first. But this could not have been known at the time. And the progress that they did make - which led to the science of chemistry - depended strongly on how individual alchemists thought, and only peripherally on factors like which chemicals could be found nearby. The conditions for a beginning of infinity exist in almost every human habitation on Earth.

In his book Guns, Germs and Steel, the biogeographer Jared Diamond takes the opposite view. He proposes what he calls an ‘ultimate explanation’ of why human history was so different on different continents. In particular, he seeks to explain why it was Europeans who sailed out to conquer the Americas, Australasia and Africa and not vice versa. In Diamond’s view, the psychology and philosophy and politics of historical events are no more than ephemeral ripples on the great river of history. Its course is set by factors independent of human ideas and decisions. Specifically, he says, the continents on our planet had different natural resources - different geographies, plants, animals and micro-organisms - and, details aside, that is what explains the broad sweep of history, including which human ideas were created and what decisions were made, politics, philosophy, cutlery and all.

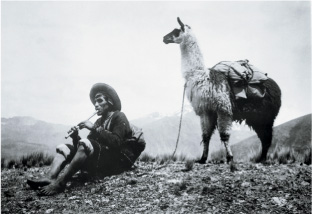

For example, part of his explanation of why the Americas never developed a technological civilization before the advent of Europeans is that there were no animals there suitable for domestication as beasts of burden.

Llamas are native to South America, and have been used as beasts of burden since prehistoric times, so Diamond points out that they are not native to the continent as a whole, but only to the Andes mountains. Why did no technological civilization arise in the Andes mountains? Why did the Incan Empire not have an Enlightenment? Diamond’s position is that other biogeographical factors were unfavourable.

The communist thinker Friedrich Engels proposed the same ultimate explanation of history, and made the same proviso about llamas, in 1884:

The Eastern Hemisphere … possessed nearly all the animals adaptable to domestication … The Western Hemisphere, America, had no mammals that could be domesticated except the llama, which, moreover, was only found in one part of South America … Owing to these differences in natural conditions, the population of each hemisphere now goes on its own way …

The Origin of the Family, Private Property and the State

(Friedrich Engels, based on notes by Karl Marx)

But why did llamas continue to be ‘only found in one part of South America’, if they could have been useful elsewhere? Engels did not address that issue. But Diamond realized that it ‘cries out for explanation’. Because, unless the reason that llamas were not exported was itself biogeographical, Diamond’s ‘ultimate explanation’ is false. So he proposed a biogeographical reason: he pointed out that a hot, lowland region, unsuitable for llamas, separates the Andes from the highlands of Central America where llamas would have been useful in agriculture.

But, again, why must such a region have been a barrier to the spread of domesticated llamas? Traders travelled between South and Central America for centuries, perhaps overland and certainly by sea. Where there are long-range traders, it is not necessary for an idea to be useful in an unbroken line of places for it to be able to spread. As I remarked in Chapter 11, knowledge has the unique ability to take aim at a distant target and utterly transform it while having scarcely any effect on the space between. So, what would it have taken for some of those traders to take some llamas north for sale? Only the idea: the leap of imagination to guess that if something is useful here, it might be useful there too. And the boldness to take the speculative and physical risk. Polynesian traders did exactly that. They ranged further, across a more formidable natural barrier, carrying goods including livestock. Why did none of the South American traders ever think of selling llamas to the Central Americans? We may never know - but why should it have had anything to do with geography? They may simply have been too set in their ways. Perhaps innovative uses for animals were taboo. Perhaps such a trade was attempted, but failed every time because of sheer bad luck. But, whatever the reason was, it cannot have been that the hot region constituted a physical barrier, for it did not.

Those are the parochial considerations. The bigger picture is that the spread of llamas can only have been prevented by people’s ideas and outlook. Had the Andeans had a Polynesian outlook instead, llamas might have spread all over the Americas. Had the ancient Polynesians not had that outlook, they might never have settled Polynesia in the first place, and biogeographical explanations would now be referring to the great ocean barrier as the ‘ultimate explanation’ for that. If the Polynesians had been even better at long-range trading, they might have managed to transport horses from Asia to their islands and thence to South America - a feat perhaps no more impressive than Hannibal’s transporting elephants across the Alps. If the ancient Greek enlightenment had continued, Athenians might have been the first to settle the Pacific islands and they would now be the ‘Polynesians’. Or, if the early Andeans had worked out how to breed giant war llamas and had ridden out to explore and conquer before anyone else had even thought of domesticating the horse, South American biogeographers might now be explaining that their ancestors colonized the world because no other continent had llamas.

Moreover, the Americas had not always lacked large quadrupeds. When the first humans arrived there, many species of ‘mega-fauna’ were common, including wild horses, mammoths, mastodons and other members of the elephant family. According to some theories, the humans hunted them to extinction. What would have happened if one of those hunters had had a different idea: to ride the beast before killing it? Generations later, the knock-on effects of that bold conjecture might have been tribes of warriors on horses and mammoths pouring back through Alaska and re-conquering the Old World. Their descendants would now be attributing this to the geographical distribution of mega-fauna. But the real cause would have been that one idea in the mind of that one hunter.

In early prehistory, populations were tiny, knowledge was parochial, and history-making ideas were millennia apart. In those days, a meme spread only when one person observed another enacting it nearby, and (because of the staticity of cultures) rarely even then. So at that time human behaviour resembled that of other animals, and much of what happened was indeed explained by biogeography. But developments such as abstract language, explanation, wealth above the level of subsistence, and long-range trade all had the potential to erode parochialism and hence to give causal power to ideas. By the time history began to be recorded, it had long since become the history of ideas far more than anything else - though unfortunately the ideas were still mainly of the self-disabling, anti-rational variety. As for subsequent history, it would take considerable dedication to insist that biogeographical explanations account for the broad sweep of events. Why, for instance, did the societies in North America and Western Europe, rather than Asia and Eastern Europe, win the Cold War? Analysing climate, minerals, flora, fauna and diseases can teach us nothing about that. The explanation is that the Soviet system lost because its ideology wasn’t true, and all the biogeography in the world cannot explain what was false about it.

Coincidentally, one of the things that was most false about the Soviet ideology was the very idea that there is an ultimate explanation of history in mechanical, non-human terms, as proposed by Marx, Engels and Diamond. Quite generally, mechanical reinterpretations of human affairs not only lack explanatory power, they are morally wrong as well, for in effect they deny the humanity of the participants, casting them and their ideas merely as side effects of the landscape. Diamond says that his main reason for writing Guns, Germs and Steel was that, unless people are convinced that the relative success of Europeans was caused by biogeography, they will for ever be tempted by racist explanations. Well, not readers of this book, I trust! Presumably Diamond can look at ancient Athens, the Renaissance, the Enlightenment - all of them the quintessence of causation through the power of abstract ideas - and see no way of attributing those events to ideas and to people; he just takes it for granted that the only alternative to one reductionist, dehumanizing reinterpretation of events is another.

In reality, the difference between Sparta and Athens, or between Savonarola and Lorenzo de’ Medici, had nothing to do with their genes; nor did the difference between the Easter Islanders and the imperial British. They were all people - universal explainers and constructors. But their ideas were different. Nor did landscape cause the Enlightenment. It would be much truer to say that the landscape we live in is the product of ideas. The primeval landscape, though packed with evidence and therefore opportunity, contained not a single idea. It is knowledge alone that converts landscapes into resources, and humans alone who are the authors of explanatory knowledge and hence of the uniquely human behaviour called ‘history’.

Physical resources such as plants, animals and minerals afford opportunities, which may inspire new ideas, but they can neither create ideas nor cause people to have particular ideas. They also cause problems, but they do not prevent people from finding ways to solve those problems. Some overwhelming natural event like a volcanic eruption might have wiped out an ancient civilization regardless of what the victims were thinking, but that sort of thing is exceptional. Usually, if there are human beings left alive to think, there are ways of thinking that can improve their situation, and then improve it further. Unfortunately, as I have explained, there are also ways of thinking that can prevent all improvement. Thus, since the beginning of civilization and before, both the principal opportunities for progress and the principal obstacles to progress have consisted of ideas alone. These are the determinants of the broad sweep of history. The primeval distribution of horses or llamas or flint or uranium can affect only the details, and then only after some human being has had an idea for how to use those things. The effects of ideas and decisions almost entirely determine which biogeographical factors have a bearing on the next chapter of human history, and what that effect will be. Marx, Engels and Diamond have it the wrong way round.

A thousand years is a long time for a static society to survive. We think of the great centralized empires of antiquity which lasted even longer; but that is a selection effect: we have no record of most static societies, and they must have been much shorter-lived. A natural guess is that most were destroyed by the first challenge that would have required the creation of a significantly new pattern of behaviour. The isolated location of Easter Island, and the relatively hospitable nature of its environment, might have given its static society a longer lifespan than it would have had if it had been exposed to more tests by nature and by other societies. But even those factors are still largely human, not biogeographical: if the islanders had known how to make long-range ocean voyages, the island would not have been ‘isolated’ in the relevant sense. Likewise, how ‘hospitable’ Easter Island is depends on what the inhabitants know. If its settlers had known as little about survival techniques as I do, then they would not have survived their first week on the island. And, on the other hand, today thousands of people live on Easter Island without starving and without a forest - though now they are planting one because they want to and know how.

The Easter Island civilization collapsed because no human situation is free of new problems, and static societies are inherently unstable in the face of new problems. Civilizations rose and collapsed on other South Pacific islands too - including Pitcairn Island. That was part of the broad sweep of history in the region. And, in the big picture, the cause was that they all had problems that they failed to solve. The Easter Islanders failed to navigate their way off the island, just as the Romans failed to solve the problem of how to change governments peacefully. If there was a forestry disaster on Easter Island, that was not what brought its inhabitants down: it was that they were chronically unable to solve the problem that this raised. If that problem had not dispatched their civilization, some other problem eventually would have. Sustaining their civilization in its static, statue-obsessed state was never an option. The only options were whether it would collapse suddenly and painfully, destroying most of what little knowledge they had, or change slowly and for the better. Perhaps they would have chosen the latter if only they had known how.

We do not know what horrors the Easter Island civilization perpetrated in the course of preventing progress. But apparently its fall did not improve anything. Indeed, the fall of tyranny is never enough. The sustained creation of knowledge depends also on the presence of certain kinds of idea, particularly optimism, and an associated tradition of criticism. There would have to be social and political institutions that incorporated and protected such traditions: a society in which some degree of dissent and deviation from the norm was tolerated, and whose educational practices did not entirely extinguish creativity. None of that is trivially achieved. Western civilization is the current consequence of achieving it - which is why, as I said, it already has what it takes to avoid an Easter Island disaster. If it really is facing a crisis, it must be some other crisis. If it ever collapses, it will be in some other way, and if it needs to be saved, it will have to be by its own, unique methods.

In 1971, while I was still at school, I attended a lecture for high-school students entitled ‘Population, Resources, Environment’. It was given by the population scientist Paul Ehrlich. I do not remember what I was expecting - I don’t think I had ever heard of ‘the environment’ before - but nothing had prepared me for such a bravura display of raw pessimism. Ehrlich starkly described to his young audience the living hell we would be inheriting. Half a dozen varieties of resource-management catastrophe were just around the corner, and it was already too late to avoid some of them. People would be starving to death by the billion in ten years, twenty at best. Raw materials were running out: the Vietnam War, then in progress, was a last-ditch struggle for the region’s tin, rubber and petroleum. (Notice how his biogeographical explanation blithely shrugged off the political disagreements that were in fact causing the conflict.) The troubles of the day in American inner cities, rising crime, mental illness - all were part of the same great catastrophe. All were linked by Ehrlich to overpopulation, pollution and the reckless overuse of finite resources: we had created too many power stations and factories, and mines, and intensive farms - too much economic growth, far more than the planet could sustain. And, worst of all, too many people - the ultimate source of all the other ills. In this respect, Ehrlich was following in the footsteps of Malthus, making the same error: setting predictions of one process against prophecies of another. Thus he calculated that, if the United States was to sustain even its 1971 standard of living, it would have to reduce its population by three-quarters, to 50 million - which was of course impossible in the time available. The planet as a whole was overpopulated by a factor of seven, he said. Even Australia was nearing its maximum sustainable population. And so on.

We had little basis for doubting what the professor was telling us about the field he was studying. Yet for some reason our conversation afterwards was not that of a group of students who had just had their futures stolen. I do not know about the others, but I can remember when I stopped worrying. At the end of the lecture a girl asked Ehrlich a question. I have forgotten the details, but it had the form ‘What if we solve [one of the problems that Ehrlich had described] within the next few years? Wouldn’t that affect your conclusion?’ Ehrlich’s reply was brisk. How could we possibly solve it? (She did not know.) And, even if we did, how could that do more than briefly delay the catastrophe? And what would we do then?

What a relief! Once I realized that Ehrlich’s prophecies amounted to saying, ‘If we stop solving problems, we are doomed,’ I no longer found them shocking, for how could it be otherwise? Quite possibly that girl went on to solve the very problem she asked about, and the one after it. At any rate, someone must have, because the catastrophe scheduled for 1991 has still not materialized. Nor have any of the others that Ehrlich foretold.

Ehrlich thought that he was investigating a planet’s physical resources and predicting their rate of decline. In fact he was prophesying the content of future knowledge. And, by envisaging a future in which only the best knowledge of 1971 was deployed, he was implicitly assuming that only a small and rapidly dwindling set of problems would ever be solved again. Furthermore, by casting problems in terms of ‘resource depletion’, and ignoring the human level of explanation, he missed all the important determinants of what he was trying to predict, namely: did the relevant people and institutions have what it takes to solve problems? And, more broadly, what does it take to solve problems?

A few years later, a graduate student in the then new subject of environmental science explained to me that colour television was a sign of the imminent collapse of our ‘consumer society’. Why? Because, first of all, he said, it served no useful purpose. All the useful functions of television could be performed just as well in monochrome. Adding colour, at several times the cost, was merely ‘conspicuous consumption’. That term had been coined by the economist Thorstein Veblen in 1902, a couple of decades before even monochrome television was invented; it meant wanting new possessions in order to show off to the neighbours. That we had now reached the physical limit of conspicuous consumption could be proved, said my colleague, by analysing the resource constraints scientifically. The cathode-ray tubes in colour televisions depended on the element europium to make the red phosphors on the screen. Europium is one of the rarest elements on Earth. The planet’s total known reserves were only enough to build a few hundred million more colour televisions. After that, it would be back to monochrome. But worse - think what this would mean. From then on there would be two kinds of people: those with colour televisions and those without. And the same would be true of everything else that was being consumed. It would be a world with permanent class distinction, in which the elites would hoard the last of the resources and live lives of gaudy display, while, to sustain that illusory state through its final years, everyone else would be labouring on in drab resentment. And so it went on, nightmare built upon nightmare.

I asked him how he knew that no new source of europium would be discovered. He asked how I knew that it would. And, even if it were, what would we do then? I asked how he knew that colour cathode-ray tubes could not be built without europium. He assured me that they could not: it was a miracle that there existed even one element with the necessary properties. After all, why should nature supply elements with properties to suit our convenience?

I had to concede the point. There aren’t that many elements, and each of them has only a few energy levels that could be used to emit light. No doubt they had all been assessed by physicists. If the bottom line was that there was no alternative to europium for making colour televisions, then there was no alternative.

Yet something deeply puzzled me about that ‘miracle’ of the red phosphor. If nature provides only one pair of suitable energy levels, why does it provide even one? I had not yet heard of the fine-tuning problem (it was new at the time), but this was puzzling for a similar reason. Transmitting accurate images in real time is a natural thing for people to want to do, like travelling fast. It would not have been puzzling if the laws of physics forbade it, just as they do forbid faster-than-light travel. For them to allow it, but only if one knew how, would be normal too. But for them only just to allow it would be a fine-tuning coincidence. Why would the laws of physics draw the line so close to a point that happened to have significance for human technology? It would be as if the centre of the Earth had turned out to be within a few kilometres of the centre of the universe. It seemed to violate the Principle of Mediocrity.

What made this even more puzzling was that, as with the real fine-tuning problem, my colleague was claiming that there were many such coincidences. His whole point was that the colour-television problem was just one representative instance of a phenomenon that was happening simultaneously in many areas of technology: the ultimate limits were being reached. Just as we were using up the last stocks of the rarest of rare-earth elements for the frivolous purpose of watching soap operas in colour, so everything that looked like progress was actually just an insane rush to exploit the last resources left on our planet. The 1970s were, he believed, a unique and terrible moment in history.

He was right in one respect: no alternative red phosphor has been discovered to this day. Yet, as I write this chapter, I see before me a superbly coloured computer display that contains not one atom of europium. Its pixels are liquid crystals consisting entirely of common elements, and it does not require a cathode-ray tube. Nor would it matter if it did, for by now enough europium has been mined to supply every human being on earth with a dozen europium-type screens, and the known reserves of the element comprise several times that amount.

Even while my pessimistic colleague was dismissing colour television technology as useless and doomed, optimistic people were discovering new ways of achieving it, and new uses for it - uses that he thought he had ruled out by considering for five minutes how well colour televisions could do the existing job of monochrome ones. But what stands out, for me, is not the failed prophecy and its underlying fallacy, nor relief that the nightmare never happened. It is the contrast between two different conceptions of what people are. In the pessimistic conception, they are wasters: they take precious resources and madly convert them into useless coloured pictures. This is true of static societies: those statues really were what my colleague thought colour televisions are - which is why comparing our society with the ‘old culture’ of Easter Island is exactly wrong. In the optimistic conception - the one that was unforeseeably vindicated by events - people are problem-solvers: creators of the unsustainable solution and hence also of the next problem. In the pessimistic conception, that distinctive ability of people is a disease for which sustainability is the cure. In the optimistic one, sustainability is the disease and people are the cure.

Since then, whole new industries have come into existence to harness great waves of innovation, and in many of those - from medical imaging to video games to desktop publishing to nature documentaries like Attenborough’s - colour television proved to be very useful after all. And, far from there being a permanent class distinction between monochrome- and colour-television users, the monochrome technology is now practically extinct, as are cathode-ray televisions. Colour displays are now so cheap that they are being given away free with magazines as advertising gimmicks. And all those technologies, far from being divisive, are inherently egalitarian, sweeping away many formerly entrenched barriers to people’s access to information, opinion, art and education.

Optimistic opponents of Malthusian arguments are often - rightly - keen to stress that all evils are due to lack of knowledge, and that problems are soluble. Prophecies of disaster such as the ones I have described do illustrate the fact that the prophetic mode of thinking, no matter how plausible it seems prospectively, is fallacious and inherently biased. However, to expect that problems will always be solved in time to avert disasters would be the same fallacy. And, indeed, the deeper and more dangerous mistake made by Malthusians is that they claim to have a way of averting resource-allocation disasters (namely, sustainability). Thus they also deny that other great truth that I suggested we engrave in stone: problems are inevitable.

A solution may be problem-free for a period, and in a parochial application, but there is no way of identifying in advance which problems will have such a solution. Hence there is no way, short of stasis, to avoid unforeseen problems arising from new solutions. But stasis is itself unsustainable, as witness every static society in history. Malthus could not have known that the obscure element uranium, which had just been discovered, would eventually become relevant to the survival of civilization, just as my colleague could not have known that, within his lifetime, colour televisions would be saving lives every day.

So there is no resource-management strategy that can prevent disasters, just as there is no political system that provides only good leaders and good policies, nor a scientific method that provides only true theories. But there are ideas that reliably cause disasters, and one of them is, notoriously, the idea that the future can be scientifically planned. The only rational policy, in all three cases, is to judge institutions, plans and ways of life according to how good they are at correcting mistakes: removing bad policies and leaders, superseding bad explanations, and recovering from disasters.

For example, one of the triumphs of twentieth-century progress was the discovery of antibiotics, which ended many of the plagues and endemic illnesses that had caused suffering and death since time immemorial. However, it has been pointed out almost from the outset by critics of ‘so-called progress’ that this triumph may only be temporary, because of the evolution of antibiotic-resistant pathogens. This is often held up as an indictment of - to give it its broad context - Enlightenment hubris. We need lose only one battle in this war of science against bacteria and their weapon, evolution (so the argument goes), to be doomed, because our other ‘so-called progress’ - such as cheap worldwide air travel, global trade, enormous cities - makes us more vulnerable than ever before to a global pandemic that could exceed the Black Death in destructiveness and even cause our extinction.

But all triumphs are temporary. So to use this fact to reinterpret progress as ‘so-called progress’ is bad philosophy. The fact that reliance on specific antibiotics is unsustainable is only an indictment from the point of view of someone who expects a sustainable lifestyle. But in reality there is no such thing. Only progress is sustainable.

The prophetic approach can see only what one might do to postpone disaster, namely improve sustainability: drastically reduce and disperse the population, make travel difficult, suppress contact between different geographical areas. A society which did this would not be able to afford the kind of scientific research that would lead to new antibiotics. Its members would hope that their lifestyle would protect them instead. But note that this lifestyle did not, when it was tried, prevent the Black Death. Nor would it cure cancer.

Prevention and delaying tactics are useful, but they can be no more than a minor part of a viable strategy for the future. Problems are inevitable, and sooner or later survival will depend on being able to cope when prevention and delaying tactics have failed. Obviously we need to work towards cures. But we can do that only for diseases that we already know about. So we need the capacity to deal with unforeseen, unforeseeable failures. For this we need a large and vibrant research community, interested in explanation and problem-solving. We need the wealth to fund it, and the technological capacity to implement what it discovers.

This is also true of the problem of climate change, about which there is currently great controversy. We face the prospect that carbon-dioxide emissions from technology will cause an increase in the average temperature of the atmosphere, with harmful effects such as droughts, sea-level rises, disruption to agriculture, and the extinctions of some species. These are forecast to outweigh the beneficial effects, such as an increase in crop yields, a general boost to plant life, and a reduction in the number of people dying of hypothermia in winter. Trillions of dollars, and a great deal of legislation and institutional change, intended to reduce those emissions, currently hang on the outcomes of simulations of the planet’s climate by the most powerful supercomputers, and on projections by economists about what those computations imply about the economy in the next century. In the light of the above discussion, we should notice several things about the controversy and about the underlying problem.

First, we have been lucky so far. Regardless of how accurate the prevailing climate models are, it is uncontroversial from the laws of physics, without any need for supercomputers or sophisticated modelling, that such emissions must, eventually, increase the temperature, which must, eventually, be harmful. Consider, therefore: what if the relevant parameters had been just slightly different and the moment of disaster had been in, say, 1902 - Veblen’s time - when carbon-dioxide emissions were already orders of magnitude above their pre-Enlightenment values? Then the disaster would have happened before anyone could have predicted it or known what was happening. Sea levels would have risen, agriculture would have been disrupted, millions would have begun to die, with worse to come. And the great issue of the day would have been not how to prevent it but what could be done about it.

They had no supercomputers then. Because of Babbage’s failures and the scientific community’s misjudgements - and, perhaps most importantly, their lack of wealth - they lacked the vital technology of automated computing altogether. Mechanical calculators and roomfuls of clerks would have been insufficient. But, much worse: they had almost no atmospheric physicists. In fact the total number of physicists of all kinds was a small fraction of the number who today work on climate change alone. From society’s point of view, physicists were a luxury in 1902, like colour televisions were in the 1970s. Yet, to recover from the disaster, society would have needed more scientific knowledge, and better technology, and more of it - that is to say, more wealth. For instance, in 1900, building a sea wall to protect the coast of a low-lying island would have required resources so enormous that the only islands that could have afforded it would have been those with either large concentrations of cheap labour or exceptional wealth, as in the Netherlands, much of whose population already lived below sea level thanks to the technology of dyke-building.

This is a challenge that is highly susceptible to automation. But people were in no position to address it in that way. All relevant machines were underpowered, unreliable, expensive, and impossible to produce in large numbers. An enormous effort to construct a Panama canal had just failed with the loss of thousands of lives and vast amounts of money, due to inadequate technology and scientific knowledge. And, to compound those problems, the world as a whole had very little wealth by today’s standards. Today, a coastal defence project would be well within the capabilities of almost any coastal nation - and would add decades to the time available to find other solutions to rising sea levels.

If none are found, what would we do then? That is a question of a wholly different kind, which brings me to my second observation on the climate-change controversy. It is that, while the supercomputer simulations make (conditional) predictions, the economic forecasts make almost pure prophecies. For we can expect the future of human responses to climate to depend heavily on how successful people are at creating new knowledge to address the problems that arise. So comparing predictions with prophecies is going to lead to that same old mistake.

Again, suppose that disaster had already been under way in 1902. Consider what it would have taken for scientists to forecast, say, carbon-dioxide emissions for the twentieth century. On the (shaky) assumption that energy use would continue to increase by roughly the same exponential factor as before, they could have estimated the resulting increase in emissions. But that estimate would not have included the effects of nuclear power. It could not have, because radioactivity itself had only just been discovered, and would not be harnessed for power until the middle of the century. But suppose that somehow they had been able to foresee that. Then they might have modified their carbon-dioxide forecast, and concluded that emissions could easily be restored to below the 1902 level by the end of the century. But, again, that would only be because they could not possibly foresee the campaign against nuclear power, which would put a stop to its expansion (ironically, on environmental grounds) before it ever became a significant factor in reducing emissions. And so on. Time and again, the unpredictable factor of new human ideas, both good and bad, would make the scientific prediction useless. The same is bound to be true - even more so - of forecasts today for the coming century. Which brings me to my third observation about the current controversy.

It is not yet accurately known how sensitive the atmosphere’s temperature is to the concentration of carbon dioxide - that is, how much a given increase in concentration increases the temperature. This number is important politically, because it affects how urgent the problem is: high sensitivity means high urgency; low sensitivity means the opposite. Unfortunately, this has led to the political debate being dominated by the side issue of how ‘anthropogenic’ (human-caused) the increase in temperature to date has been. It is as if people were arguing about how best to prepare for the next hurricane while all agreeing that the only hurricanes one should prepare for are human-induced ones. All sides seem to assume that if it turns out that a random fluctuation in the temperature is about to raise sea levels, disrupt agriculture, wipe out species and so on, our best plan would be simply to grin and bear it. Or if two-thirds of the increase is anthropogenic, we should not mitigate the effects of the other third.

Trying to predict what our net effect on the environment will be for the next century and then subordinating all policy decisions to optimizing that prediction cannot work. We cannot know how much to reduce emissions by, nor how much effect that will have, because we cannot know the future discoveries that will make some of our present actions seem wise, some counter-productive and some irrelevant, nor how much our efforts are going to be assisted or impeded by sheer luck. Tactics to delay the onset of foreseeable problems may help. But they cannot replace, and must be subordinate to, increasing our ability to intervene after events turn out as we did not foresee. If that does not happen in regard to carbon-dioxide-induced warming, it will happen with something else.

Indeed, we did not foresee the global-warming disaster. I call it a disaster because the prevailing theory is that our best option is to prevent carbon-dioxide emissions by spending vast sums and enforcing severe worldwide restrictions on behaviour, and that is already a disaster by any reasonable measure. I call it unforeseen because we now realize that it was already under way even in 1971, when I attended that lecture. Ehrlich did tell us that agriculture was soon going to be devastated by rapid climate change. But the change in question was going to be global cooling, caused by smog and the condensation trails of supersonic aircraft. The possibility of warming caused by gas emissions had already been mooted by some scientists, but Ehrlich did not consider it worth mentioning. He told us that the evidence was that a general cooling trend had already begun, and that it would continue with catastrophic effects, though it would be reversed in the very long term because of ‘heat pollution’ from industry (an effect that is currently at least a hundred times smaller than the global warming that preoccupies us).

There is a saying that an ounce of prevention equals a pound of cure. But that is only when one knows what to prevent. No precautions can avoid problems that we do not yet foresee. To prepare for those, there is nothing we can do but increase our ability to put things right if they go wrong. Trying to rely on the sheer good luck of avoiding bad outcomes indefinitely would simply guarantee that we would eventually fail without the means of recovering.

The world is currently buzzing with plans to force reductions in gas emissions at almost any cost. But it ought to be buzzing much more with plans to reduce the temperature, or for how to thrive at a higher temperature. And not at all costs, but efficiently and cheaply. Some such plans exist - for instance to remove carbon dioxide from the atmosphere by a variety of methods; and to generate clouds over the oceans to reflect sunlight; and to encourage aquatic organisms to absorb more carbon dioxide. But at the moment these are very minor research efforts. Neither supercomputers nor international treaties nor vast sums are devoted to them. They are not central to the human effort to face this problem, or problems like it.

This is dangerous. There is as yet no serious sign of retreat into a sustainable lifestyle (which would really mean achieving only the semblance of sustainability), but even the aspiration is dangerous. For what would we be aspiring to? To forcing the future world into our image, endlessly reproducing our lifestyle, our misconceptions and our mistakes. But if we choose instead to embark on an open-ended journey of creation and exploration whose every step is unsustainable until it is redeemed by the next - if this becomes the prevailing ethic and aspiration of our society - then the ascent of man, the beginning of infinity, will have become, if not secure, then at least sustainable.

TERMINOLOGY

The ascent of man - The beginning of infinity. Moreover, Jacob Bronowski’s The Ascent of Man was one of the inspirations for this book.

Sustain - The term has two almost opposite, but often confused, meanings: to provide someone with what they need, and to prevent things from changing.

MEANINGS OF ‘THE BEGINNING OF INFINITY’ ENCOUNTERED IN THIS CHAPTER

- Rejecting (the semblance of) sustainability as an aspiration or a constraint on planning.

SUMMARY

Static societies eventually fail because their characteristic inability to create knowledge rapidly must eventually turn some problem into a catastrophe. Analogies between such societies and the technological civilization of the West today are therefore fallacies. Marx, Engels and Diamond’s ‘ultimate explanation’ of the different histories of different societies is false: history is the history of ideas, not of the mechanical effects of biogeography. Strategies to prevent foreseeable disasters are bound to fail eventually, and cannot even address the unforeseeable. To prepare for those, we need rapid progress in science and technology and as much wealth as possible.