Complexity: A Guided Tour - Melanie Mitchell (2009)

Part V. Conclusion

I will put Chaos into fourteen lines

And keep him there; and let him thence escape

If he be lucky; let him twist, and ape

Flood, fire, and demon—his adroit designs

Will strain to nothing in the strict confines

Of this sweet order, where, in pious rape,

I hold his essence and amorphous shape,

Till he with Order mingles and combines.

Past are the hours, the years of our duress,

His arrogance, our awful servitude:

I have him. He is nothing more nor less

Than something simple not yet understood;

I shall not even force him to confess;

Or answer. I will only make him good.

—Edna St. Vincent Millay, Mine the Harvest: A Collection of New Poems

Chapter 19. The Past and Future of the Sciences of Complexity

IN 1995, THE SCIENCE JOURNALIST John Horgan published an article in Scientific American, arguably the world’s leading popular science magazine, attacking the field of complex systems in general and the Santa Fe Institute in particular. His article was advertised on the magazine’s cover under the label “Is Complexity a Sham?” (figure 19.1).

The article contained two main criticisms. First, in Horgan’s view, it was unlikely that the field of complex systems would uncover any useful general principles, and second, he believed that the predominance of computer modeling made complexity a “fact-free science.” In addition, the article made several minor jabs, calling complexity “pop science” and its researchers “complexologists.” Horgan speculated that the term “complexity” has little meaning but that we keep it for its “public-relations value.”

To add insult to injury, Horgan quoted me as saying, “At some level you can say all complex systems are aspects of the same underlying principles, but I don’t think that will be very useful.” Did I really say this? I wondered. What was the context? Do I believe it? Horgan had interviewed me on the phone for an hour or more and I had said a lot of things; he chose the single most negative comment to use in his article. I hadn’t had very much experience with science journalists at that point and I felt really burned.

I wrote an angry, heartfelt letter to the editor at Scientific American, listing all the things I thought were wrong and unfair in Horgan’s article. Of course a dozen or more of my colleagues did the same; the magazine published only one of these letters and it wasn’t mine.

FIGURE 19.1. Complexity is “dissed” on the cover of Scientific American. (Cover art by Rosemary Volpe, reprinted by permission.)

The whole incident taught me some lessons. Mostly, be careful what you say to journalists. But it did force me to think harder and more carefully about the notion of “general principles” and what this notion might mean.

Horgan’s article grew into an equally cantankerous book, called The End of Science, in which he proposed that all of the really important discoveries of science have already been made, and that humanity would make no more. His Scientific American article on complexity was expanded into a chapter, and included the following pessimistic prediction: “The fields of chaos, complexity, and artificial life will continue…. But they will not achieve any great insights into nature—certainly none comparable to Darwin’s theory of evolution or quantum mechanics.”

Is Horgan right in any sense? Is it futile to aim for the discovery of general principles or a “unified theory” covering all complex systems?

On Unified Theories and General Principles

The term unified theory (or Grand Unified Theory, quaintly abbreviated as GUT) usually refers to a goal of physics: to have a single theory that unifies the basic forces in the universe. String theory is one attempt at a GUT, but there is no consensus in physics that string theory works or even if a GUT exists.

Imagine that string theory turns out to be correct—physics’ long sought-after GUT. That would be an enormously important achievement, but it would not be the end of science, and in particular it would be far from the end of complex systems science. The behaviors of complex systems that interest us are not understandable at the level of elementary particles or ten-dimensional strings. Even if these elements make up all of reality, they are the wrong vocabulary for explaining complexity. It would be like answering the question, “Why is the logistic map chaotic?” with the answer, “Because $x_{t+1} = R x_t (1 - x_t)$.” The explanation for chaos is, in some sense, embedded in the equation, just as the behavior of the immune system would in some sense be embedded in a Grand Unified Theory of physics. But not in the sense that constitutes human understanding—the ultimate goal of science. Physicists Jim Crutchfield, Doyne Farmer, Norman Packard, and Robert Shaw voiced this view very well: “[T]he hope that physics could be complete with an increasingly detailed understanding of fundamental physical forces and constituents is unfounded. The interaction of components on one scale can lead to complex global behavior on a larger scale that in general cannot be deduced from knowledge of the individual components.” Or, as Albert Einstein supposedly quipped, “Gravitation is not responsible for people falling in love.”
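The point that the equation "contains" chaos without explaining it can be made concrete with a few lines of code. The following is only a minimal sketch: the function names, the choice R = 4 (a standard fully chaotic setting), the starting value 0.2, and the perturbation of 1e-10 are all illustrative assumptions rather than anything specified in this chapter. It iterates $x_{t+1} = R x_t (1 - x_t)$ from two nearly identical starting points and reports how far apart the trajectories drift.

```python
def logistic_trajectory(x0, r, steps):
    """Iterate the logistic map x_{t+1} = r * x_t * (1 - x_t) for `steps` steps."""
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1 - xs[-1]))
    return xs


def max_gap(a, b):
    """Largest pointwise distance between two trajectories of equal length."""
    return max(abs(p - q) for p, q in zip(a, b))


if __name__ == "__main__":
    # Assumed illustrative values: R = 4 (chaotic) versus R = 2.5 (settles to a fixed point).
    for r in (4.0, 2.5):
        a = logistic_trajectory(0.2, r, 100)
        b = logistic_trajectory(0.2 + 1e-10, r, 100)
        print(f"R = {r}: largest gap between the two runs = {max_gap(a, b):.3g}")
```

With R = 2.5 the two runs stay essentially indistinguishable, while with R = 4 the tiny initial difference grows until the trajectories are unrelated. Running the code shows that this happens, but, as the paragraph above argues, it does not by itself supply the kind of explanation that counts as understanding.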

So if fundamental physics is not to be a unified theory for complex systems, what, if anything, is? Most complex systems researchers would probably say that a unified theory of complexity is not a meaningful goal at this point. The science of physics, being over two thousand years old, is conceptually way ahead in that it has identified two main kinds of “stuff”—mass and energy—which Einstein unified with $E = mc^2$. It also has identified the four basic forces in nature, and has unified at least three of them. Mass, energy, and force, and the elementary particles that give rise to them, are the building blocks of theories in physics.

As for complex systems, we don’t even know what corresponds to the elemental “stuff” or to a basic “force”; a unified theory doesn’t mean much until you figure out what the conceptual components or building blocks of that theory should be.

Deborah Gordon, the ecologist and entomologist, voiced this opinion:

Recently, ideas about complexity, self-organization, and emergence—when the whole is greater than the sum of its parts—have come into fashion as alternatives for metaphors of control. But such explanations offer only smoke and mirrors, functioning merely to provide names for what we can’t explain; they elicit for me the same dissatisfaction I feel when a physicist says that a particle’s behavior is caused by the equivalence of two terms in an equation. Perhaps there can be a general theory of complex systems, but it is clear we don’t have one yet. A better route to understanding the dynamics of apparently self-organizing systems is to focus on the details of specific systems. This will reveal whether there are general laws… . The hope that general principles will explain the regulation of all the diverse complex dynamical systems that we find in nature can lead to ignoring anything that doesn’t fit a pre-existing model. When we learn more about the specifics of such systems, we will see where analogies between them are useful and where they break down.

Of course there are many general principles that are not very useful, for example, “all complex systems exhibit emergent properties,” because, as Gordon says, they “provide names for what we can’t explain.” This is, I think, what I was trying to say in the statement of mine that Horgan quoted. I think Gordon is correct in her implication that no single set of useful principles is going to apply to all complex systems.

It might be better to scale back and talk of common rather than general principles: those that provide new insight into—or new conceptualizations of—the workings of a set of systems or phenomena that would be very difficult to glean by studying these systems or phenomena separately, and trying to make analogies after the fact.

The discovery of common principles might be part of a feedback cycle in complexity research: knowledge about specific complex systems is synthesized into common principles, which then provide new ideas for understanding the specific systems. The specific details and common principles inform, constrain, and enrich one another.

This all sounds well and good, but where are examples of such principles? Of course proposals for common or universal principles abound in the literature, and we have seen several such proposals in this book: the universal properties of chaotic systems; John von Neumann’s principles of self-reproduction; John Holland’s principle of balancing exploitation and exploration; Robert Axelrod’s general conditions for the evolution of cooperation; Stephen Wolfram’s principle of computational equivalence; Albert-László Barabási and Réka Albert’s proposal that preferential attachment is a general mechanism for the development of real-world networks; West, Brown, and Enquist’s proposal that fractal circulation networks explain scaling relationships; et cetera. There are also many proposals that I have had to leave out because of limited time and space.

I stuck my neck out in chapter 12 by proposing a number of common principles of adaptive information processing in decentralized systems. I’m not sure Gordon would agree, but I believe those principles might actually be useful for people who study specific complex systems such as the ones I covered—the principles might give them new ideas about how to understand the systems they study. As one example, I proposed that “randomness and probabilities are essential.” When I gave a lecture recently and outlined those principles, a neuroscientist in the audience responded by speculating where randomness might come from in the brain and what its uses might be. Some people in the room had never thought about the brain in these terms, and this idea changed their view a bit, and perhaps gave them some new concepts to use in their own research.

On the other hand, feedback must come from the specific to the general. At that same lecture, several people pointed out examples of complex adaptive systems that they believed did not follow all of my principles. This forced me to rethink what I was saying and to question the generality of my assertions. As Gordon so rightly points out, we should be careful to not ignore “anything that doesn’t fit a pre-existing model.” Of course what are thought to be facts about nature are sometimes found to be wrong as well, and perhaps some common principles will help in directing our skepticism. Albert Einstein, a theorist par excellence, supposedly said, “If the facts don’t fit the theory, change the facts.” Of course this depends on the theory and the facts. The more established the theory or principles, the more skeptical you have to be of any contradicting facts, and conversely the more convincing the contradicting facts are, the more skeptical you need to be of your supposed principles. This is the nature of science—an endless cycle of proud proposing and disdainful doubting.

Roots of Complex Systems Research

The search for common principles governing complex systems has a long history, particularly in physics, but the quest for such principles became most prominent in the years after the invention of computers. As early as the 1940s, some scientists proposed that there are strong analogies between computers and living organisms.

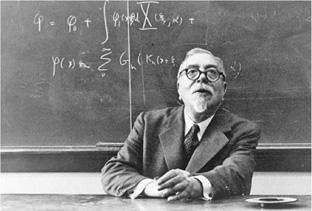

In the 1940s, the Josiah Macy, Jr. Foundation sponsored a series of interdisciplinary scientific meetings with intriguing titles, including “Feedback Mechanisms and Circular Causal Systems in Biological and Social Systems,” “Teleological Mechanisms in Society,” and “Teleological Mechanisms and Circular Causal Systems.” These meetings were organized by a small group of scientists and mathematicians who were exploring common principles of widely varying complex systems. A prime mover of this group was the mathematician Norbert Wiener, whose work on the control of anti-aircraft guns during World War II had convinced him that the science underlying complex systems in both biology and engineering should focus not on the mass, energy, and force concepts of physics, but rather on the concepts of feedback, control, information, communication, and purpose (or “teleology”).

Norbert Wiener, 1894-1964 (AIP Emilio Segre Visual Archives)

In addition to Norbert Wiener, the series of Macy Foundation conferences included several scientific luminaries of the time, such as John von Neumann, Warren McCulloch, Margaret Mead, Gregory Bateson, Claude Shannon, and W. Ross Ashby. The meetings led Wiener to christen a new discipline of cybernetics, from the Greek word for “steersman”—that is, one who controls a ship. Wiener summed up cybernetics as “the entire field of control and communication theory, whether in the machine or in the animal.”

The discussions and writings of this loose-knit cybernetics group focused on many of the issues that have come up in this book. They asked: What are information and computation? How are they manifested in living organisms? What analogies can be made between living systems and machines? What is the role of feedback in complex behavior? How do meaning and purpose arise from information processing?

There is no question that much important work on analogies between living systems and machines came out of the cybernetics group. This work includes von Neumann’s self-reproducing automaton, which linked notions of information and reproduction; W. Ross Ashby’s “Design for a Brain,” an influential proposal for how the ideas of dynamics, information, and feedback should inform neuroscience and psychology; Warren McCulloch and Walter Pitts’ model of neurons as logic devices, which was the impetus for the later field of neural networks; Margaret Mead and Gregory Bateson’s application of cybernetic ideas in psychology and anthropology; and Norbert Wiener’s books Cybernetics and The Human Use of Human Beings, which attempted to provide a unified overview of the field and its relevance in many disciplines. These are only a few examples of works that are still influential today.

In its own time, the research program of cybernetics elicited both enthusiasm and disparagement. Proponents saw it as the beginning of a new era of science. Critics argued that it was too broad, too vague, and too lacking in rigorous theoretical foundations to be useful. The anthropologist Gregory Bateson adopted the first view, writing, “The two most important historical events in my life were the Treaty of Versailles and the discovery of Cybernetics.” On the other side, the biophysicist and Nobel prize-winner Max Delbrück characterized the cybernetics meeting he attended as “vacuous in the extreme and positively inane.” Less harshly, the decision theorist Leonard Savage described one of the later Macy Foundation meetings as “bull sessions with a very elite group.”

In time, the enthusiasm of the cyberneticists for attending meetings faded, along with the prospects of the field itself. William Aspray, a historian of science who has studied the cybernetics movement, writes that “in the end Wiener’s hope for a unified science of control and communication was not fulfilled. As one participant in these events explained, cybernetics had ‘more extent than content.’ It ranged over too disparate an array of subjects, and its theoretical apparatus was too meager and cumbersome to achieve the unification Wiener desired.”

A similar effort toward finding common principles, under the name of General System Theory, was launched in the 1950s by the biologist Ludwig von Bertalanffy, who characterized the effort as “the formulation and deduction of those principles which are valid for ‘systems’ in general.” A system is defined in a very general sense: a collection of interacting elements that together produce, by virtue of their interactions, some form of system-wide behavior. This, of course, can describe just about anything. The general system theorists were particularly interested in general properties of living systems. System theorist Anatol Rapoport characterized the main themes of general system theory (as applied to living systems, social systems, and other complex systems) as preservation of identity amid changes, organized complexity, and goal-directedness. Biologists Humberto Maturana and Francisco Varela attempted to make sense of the first two themes in terms of their notion of autopoiesis, or “self-construction”—a self-maintaining process by which a system (e.g., a biological cell) functions as a whole to continually produce the components (e.g., parts of a cell) which themselves make up the system that produces them. To Maturana, Varela, and their many followers, autopoiesis was a key, if not the key, feature of life.

Like the research program of the cyberneticists, these ideas are very appealing, but attempts to construct a rigorous mathematical framework—one that explains and predicts the important common properties of such systems—were not generally successful. However, the central scientific questions posed by these efforts formed the roots of several modern areas of science and engineering. Artificial intelligence, artificial life, systems ecology, systems biology, neural networks, systems analysis, control theory, and the sciences of complexity have all emerged from seeds sown by the cyberneticists and general system theorists. Cybernetics and general system theory are still active areas of research in some quarters of the scientific community, but have been largely overshadowed by these offspring disciplines.

Several more recent approaches to general theories of complex systems have come from the physics community. For example, Hermann Haken’s Synergetics and Ilya Prigogine’s theories of dissipative structures and nonequilibrium systems both have attempted to integrate ideas from thermodynamics, dynamical systems theory, and the theory of “critical phenomena” to explain self-organization in physical systems such as turbulent fluids and complex chemical reactions, as well as in biological systems. In particular, Prigogine’s goal was to determine a “vocabulary of complexity”: in the words of Prigogine and his colleague, Grégoire Nicolis, “a number of concepts that deal with mechanisms that are encountered repeatedly throughout the different phenomena; they are nonequilibrium, stability, bifurcation and symmetry breaking, and long-range order … they become the basic elements of what we believe to be a new scientific vocabulary.” Work continues along these lines, but to date these efforts have not yet produced the coherent and general vocabulary of complexity envisioned by Prigogine, much less a general theory that unifies these disparate concepts in a way that explains complexity in nature.

Five Questions

As you can glean from the wide variety of topics I have covered in this book, what we might call modern complex systems science is, like its forebears, still not a unified whole but rather a collection of disparate parts with some overlapping concepts. What currently unifies different efforts under this rubric are common questions, methods, and the desire to make rigorous mathematical and experimental contributions that go beyond the less rigorous analogies characteristic of these earlier fields. There has been much debate about what, if anything, modern complex systems science is contributing that was lacking in previous efforts. To what extent is it succeeding?

There is a wide spectrum of opinions on this question. Recently, a researcher named Carlos Gershenson sent out a list of questions on complex systems to a set of his colleagues (including myself) and plans to publish the responses in a book called Complexity: 5 Questions. The questions are

1. Why did you begin working with complex systems?

2. How would you define complexity?

3. What is your favorite aspect/concept of complexity?

4. In your opinion, what is the most problematic aspect/concept of complexity?

5. How do you see the future of complexity?

I have so far seen fourteen of the responses. Although the views expressed are quite diverse, some common opinions emerge. Most of the respondents dismiss the possibility of “universal laws” of complexity as being too ambitious or too vague. Moreover, most respondents believe that defining complexity is one of the most problematic aspects of the field and is likely to be the wrong goal altogether. Many think the word complexity is not meaningful; some even avoid using it. Most do not believe that there is yet a “science of complexity,” at least not in the usual sense of the word science—complex systems often seems to be a fragmented subject rather than a unified whole.

Finally, a few of the respondents worry that the field of complex systems will share the fate of cybernetics and related earlier efforts—that is, it will pinpoint intriguing analogies among different systems without producing a coherent and rigorous mathematical theory that explains and predicts their behavior.

However, in spite of these pessimistic views of the limitations of current complex systems research, most of the respondents are actually highly enthusiastic about the field and the contributions it has made and probably will make to science. In the life sciences, brain science, and social sciences, the more carefully scientists look, the more complex the phenomena are. New technologies have enabled these discoveries, and what is being discovered is in dire need of new concepts and theories about how such complexity comes about and operates. Such discoveries will require science to change so as to grapple with the questions being asked in complex systems research. Indeed, as we have seen in examples in previous chapters, in recent years the themes and results of complexity science have touched almost every scientific field, and some areas of study, such as biology and social sciences, are being profoundly transformed by these ideas. Going further, several of the survey participants voiced opinions similar to that stated by one respondent: “I see some form of complexity science taking over the whole of scientific thinking.”

Apart from important individual discoveries such as Brown, Enquist, and West’s work on metabolic scaling or Axelrod’s work on the evolution of cooperation (among many other examples), perhaps the most significant contributions of complex systems research to date have been the questioning of many long-held scientific assumptions and the development of novel ways of conceptualizing complex problems. Chaos has shown us that intrinsic randomness is not necessary for a system’s behavior to look random; new discoveries in genetics have challenged the role of gene change in evolution; increasing appreciation of the role of chance and self-organization has challenged the centrality of natural selection as an evolutionary force. The importance of thinking in terms of nonlinearity, decentralized control, networks, hierarchies, distributed feedback, statistical representations of information, and essential randomness is gradually being realized in both the scientific community and the general population.

New conceptual frameworks often require the broadening of existing concepts. Throughout this book we have seen how the concepts of information and computation are being extended to encompass living systems and even complex social systems; how the notions of adaptation and evolution have been extended beyond the biological realm; and how the notions of life and intelligence are being expanded, perhaps even to include self-replicating machines and analogy-making computer programs.

This way of thinking is progressively moving into mainstream science. I could see this clearly when I interacted with young graduate students and postdocs at the SFI summer schools. In the early 1990s, the students were extremely excited about the new ideas and novel scientific worldview presented at the school. But by the early 2000s, largely as a result of the educational efforts of SFI and similar institutes, these ideas and worldview had already permeated the culture of many disciplines, and the students were much more blasé, and, in some cases, disappointed that complex systems science seemed so “mainstream.” This should be counted as a success, I suppose.

Finally, complex systems research has emphasized above all interdisciplinary collaboration, which is seen as essential for progress on the most important scientific problems of our day.

The Future of Complexity, or Waiting for Carnot

In my view complex systems science is branching off in two separate directions. Along one branch, ideas and tools from complexity research will be refined and applied in an increasingly wide variety of specific areas. In this book we’ve seen ways in which similar ideas and tools are being used in fields as disparate as physics, biology, epidemiology, sociology, political science, and computer science, among others. Some areas I didn’t cover in which these ideas are gaining increasing prominence include neuroscience, economics, ecology, climatology, and medicine—the seeds of complexity and interdisciplinary science are being widely sowed.

The second branch, more controversial, is to view all these fields from a higher level, so as to pursue explanatory and predictive mathematical theories that make commonalities among complex systems more rigorous, and that can describe and predict emergent phenomena.

At one complexity meeting I attended, a heated discussion took place about what direction the field should take. One of the participants, in a moment of frustration, said, “ ‘Complexity’ was once an exciting thing and is now a cliché. We should start over.”

What should we call it? It is probably clear by now that this is the crux of the problem—we don’t have the right vocabulary to precisely describe what we’re studying. We use words such as complexity, self-organization, and emergence to represent phenomena common to the systems in which we’re interested but we can’t yet characterize the commonalities in a more rigorous way. We need a new vocabulary that not only captures the conceptual building blocks of self-organization and emergence but that can also describe how these come to encompass what we call functionality, purpose, or meaning (cf. chapter 12). These ill-defined terms need to be replaced by new, better-defined terms that reflect increased understanding of the phenomena in question. As I have illustrated in this book, much work in complex systems involves the integration of concepts from dynamics, information, computation, and evolution. A new conceptual vocabulary and a new kind of mathematics will have to be forged from this integration. The mathematician Steven Strogatz puts it this way: “I think we may be missing the conceptual equivalent of calculus, a way of seeing the consequences of myriad interactions that define a complex system. It could be that this ultracalculus, if it were handed to us, would be forever beyond human comprehension. We just don’t know.”

Having the right conceptual vocabulary and the right mathematics is essential for being able to understand, predict, and in some cases, direct or control self-organizing systems with emergent properties. Developing such concepts and mathematical tools has been, and remains, the greatest challenge facing the sciences of complex systems.

Sadi Carnot, 1796-1832 (Boilly lithograph, Photographische Gesellschaft, Berlin, courtesy AIP Emilio Segre Visual Archives, Harvard University Collection.)

An in-joke in our field is that we’re “waiting for Carnot.” Sadi Carnot was a physicist of the early nineteenth century who originated some of the key concepts of thermodynamics. Similarly, we are waiting for the right concepts and mathematics to be formulated to describe the many forms of complexity we see in nature.

Accomplishing all of this will require something more like a modern Isaac Newton than a modern Carnot. Before the invention of calculus, Newton faced a conceptual problem similar to what we face today. In his biography of Newton, the science writer James Gleick describes it thus: “He was hampered by the chaos of language—words still vaguely defined and words not quite existing…. Newton believed he could marshal a complete science of motion, if only he could find the appropriate lexicon.…” By inventing calculus, Newton finally created this lexicon. Calculus provides a mathematical language to rigorously describe change and motion, in terms of such notions as infinitesimal, derivative, integral, and limit. These concepts already existed in mathematics but in a fragmented way; Newton was able to see how they are related and to construct a coherent edifice that unified them and made them completely general. This edifice is what allowed Newton to create the science of dynamics.

Can we similarly invent the calculus of complexity—a mathematical language that captures the origins and dynamics of self-organization, emergent behavior, and adaptation in complex systems? There are some people who have embarked on this monumental task. For example, as I described in chapter 10, Stephen Wolfram is using the building blocks of dynamics and computation in cellular automata to create what he thinks is a new, fundamental theory of nature. As I noted above, Ilya Prigogine and his followers have attempted to identify the building blocks and build a theory of complexity in terms of a small list of physical concepts. The physicist Per Bak introduced the notion of self-organized criticality, based on concepts from dynamical systems theory and phase transitions, which he presented as a general theory of self-organization and emergence. The physicist Jim Crutchfield has proposed a theory of computational mechanics, which integrates ideas from dynamical systems, computation theory, and the theory of statistical inference to explain the emergence and structure of complex and adaptive behavior.
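To give a sense of how small the "building blocks of dynamics and computation in cellular automata" can be, here is a minimal sketch of an elementary (one-dimensional, two-state, nearest-neighbor) cellular automaton. It is offered only as an illustration of that kind of building block, not as a rendering of Wolfram's theory or of any of the other approaches mentioned above. The function names, the choice of rule 30, the wrap-around boundary, and the grid size are all assumptions made for the example.

```python
def step(cells, rule):
    """Apply one update of an elementary cellular automaton.

    `rule` is the Wolfram rule number (0-255). Each cell's new state is the bit
    of `rule` indexed by its (left, center, right) neighborhood read as a
    3-bit number. The lattice wraps around at the edges (an assumption).
    """
    n = len(cells)
    return [
        (rule >> (cells[(i - 1) % n] * 4 + cells[i] * 2 + cells[(i + 1) % n])) & 1
        for i in range(n)
    ]


def run(rule, width, steps):
    """Start from a single 'on' cell in the middle and record every generation."""
    cells = [0] * width
    cells[width // 2] = 1
    history = [cells]
    for _ in range(steps):
        cells = step(cells, rule)
        history.append(cells)
    return history


if __name__ == "__main__":
    # Rule 30 is an assumed example; its output looks irregular despite the simple rule.
    for row in run(rule=30, width=31, steps=15):
        print("".join("#" if c else "." for c in row))
```

The entire "physics" of this system is an eight-entry lookup table, yet the printed pattern is hard to summarize in simpler terms. That gap between a compact rule and a compact description of its behavior is one way to see why a new descriptive vocabulary is being sought.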

While each of these approaches, along with several others I don’t describe here, is still far from being a comprehensive explanatory theory for complex systems, each contains important new ideas and is still an area of active research. Of course it’s still unclear if there even exists such a theory; it may be that complexity arises and operates by very different processes in different systems. In this book I’ve presented some of the likely pieces of a complex systems theory, if one exists, in the domains of information, computation, dynamics, and evolution. What’s needed is the ability to see their deep relationships and how they fit into a coherent whole—what might be referred to as “the simplicity on the other side of complexity.”

While much of the science I’ve described in this book is still in its early stages, to me, the prospect of fulfilling such ambitious goals is part of what makes complex systems a truly exciting area to work in. One thing is clear: pursuing these goals will require, as great science always does, an adventurous intellectual spirit and a willingness to risk failure and reproach by going beyond mainstream science into ill-defined and uncharted territory. In the words of the writer and adventurer André Gide, “One doesn’t discover new lands without consenting to lose sight of the shore.” Readers, I look forward to the day when we can together tour those new territories of complexity.