Complexity: A Guided Tour - Melanie Mitchell (2009)

Part IV. Network Thinking

Chapter 17. The Mystery of Scaling

The previous two chapters showed how network thinking is having profound effects on many areas of science, particularly biology. Quite recently, a kind of network thinking has led to a proposed solution for one of biology’s most puzzling mysteries: the way in which properties of living organisms scale with size.

Scaling in Biology

Scaling describes how one property of a system will change if a related property changes. The scaling mystery in biology concerns the question of how the average energy used by an organism while resting—the basal metabolic rate—scales with the organism’s body mass. Since metabolism, the conversion by cells of food, water, air, and light to usable energy, is the key process underlying all living systems, this relation is enormously important for understanding how life works.

It has long been known that the metabolism of smaller animals runs faster relative to their body size than that of larger animals. In 1883, German physiologist Max Rubner tried to determine the precise scaling relationship by using arguments from thermodynamics and geometry. Recall from chapter 3 that processes that convert energy from one form to another, such as metabolism, always give off heat. An organism’s metabolic rate can be defined as the rate at which its cells convert nutrients to energy, which is used for all the cell’s functions and for building new cells. The organism gives off heat at this same rate as a by-product. An organism’s metabolic rate can thus be inferred by measuring this heat production.

If you hadn’t already known that smaller animals have faster metabolisms relative to body size than large ones, a naïve guess might be that metabolic rate scales linearly with body mass—for example, that a hamster with eight times the body mass of a mouse would have eight times that mouse’s metabolic rate, or even more extreme, that a hippopotamus with 125,000 times the body mass of a mouse would have a metabolic rate 125,000 times higher.

The problem is that the hamster, say, would generate eight times as much heat as the mouse. However, the total surface area of the hamster’s body, from which the heat must radiate, would be only about four times the total surface area of the mouse’s. This is because as an animal gets larger, its surface area grows more slowly than its mass (or equivalently, its volume).

This is illustrated in figure 17.1, in which a mouse, hamster, and hippo are represented by spheres. You might recall from elementary geometry that the formula for the volume of a sphere is four-thirds pi times the radius cubed, where pi ≈ 3.14159. Similarly, the formula for the surface area of a sphere is four times pi times the radius squared. We can say that “volume scales as the cube of the radius” whereas “surface area scales as the square of the radius.” Here “scales as” just means “is proportional to”—that is, ignore the constants 4 / 3 × pi and 4 × pi. As illustrated in figure 17.1, the hamster sphere has twice the radius of the mouse sphere, and it has four times the surface area and eight times the volume of the mouse sphere. The radius of the hippo sphere (not drawn to scale) is fifty times the mouse sphere’s radius; the hippo sphere thus has 2,500 times the surface area and 125,000 times the volume of the mouse sphere. You can see that as the radius is increased, the surface area grows (or “scales”) much more slowly than the volume. Since the surface area scales as the radius squared and the volume scales as the radius cubed, we can say that “the surface area scales as the volume raised to the two-thirds power.” (See the notes for the derivation of this.)

FIGURE 17.1. Scaling properties of animals (represented as spheres). (Drawing by David Moser.)

Raising volume to the two-thirds power is shorthand for saying “square the volume, and then take its cube root.”
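The two-thirds relationship follows in two steps: since volume is proportional to the radius cubed, the radius is proportional to the cube root of the volume, and squaring that gives a surface area proportional to the volume raised to the two-thirds power. The short Python sketch below checks this arithmetic for the three spheres, using the radii quoted above (hamster 2, hippo 50, relative to a mouse radius of 1). The function names are illustrative only.

```python
import math

def sphere_surface_area(radius):
    """Surface area of a sphere: 4 * pi * r^2."""
    return 4.0 * math.pi * radius ** 2

def sphere_volume(radius):
    """Volume of a sphere: (4/3) * pi * r^3."""
    return (4.0 / 3.0) * math.pi * radius ** 3

# Radii relative to the mouse sphere, as given in the text.
for name, r in [("mouse", 1.0), ("hamster", 2.0), ("hippo", 50.0)]:
    area_ratio = sphere_surface_area(r) / sphere_surface_area(1.0)
    volume_ratio = sphere_volume(r) / sphere_volume(1.0)
    # If surface area scales as volume^(2/3), this should match area_ratio.
    predicted = volume_ratio ** (2.0 / 3.0)
    print(f"{name}: area x{area_ratio:.0f}, volume x{volume_ratio:.0f}, "
          f"volume^(2/3) = {predicted:.0f}")
```

The constants 4 × pi and 4 / 3 × pi cancel when taking ratios, which is why they can be ignored when talking about how one quantity "scales as" another.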

Generating eight times the heat with only four times the surface area to radiate it would result in one very hot hamster. Similarly, the hippo would generate 125,000 times the heat of the mouse but that heat would radiate over a surface area of only 2,500 times the mouse’s. Ouch! That hippo is seriously burning.

Nature has been very kind to animals by not using that naïve solution: our metabolisms thankfully do not scale linearly with our body mass. Max Rubner reasoned that nature had figured out that in order to safely radiate the heat we generate, our metabolic rate should scale with body mass in the same way as surface area. Namely, he proposed that metabolic rate scales with body mass to the two-thirds power. This was called the “surface hypothesis,” and it was accepted for the next fifty years. The only problem was that the actual data did not obey this rule.

This was discovered in the 1930s by a Swiss animal scientist, Max Kleiber, who performed a set of careful measurements of the metabolic rates of different animals. His data showed that metabolic rate scales with body mass to the three-fourths power: that is, metabolic rate is proportional to $(\text{body mass})^{3/4}$. You’ll no doubt recognize this as a power law with exponent 3/4. This result was surprising and counterintuitive. Having an exponent of 3/4 rather than 2/3 means that animals, particularly large ones, are able to maintain a higher metabolic rate than one would expect, given their surface area. This means that animals are more efficient than simple geometry predicts.
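To get a feel for how much the choice of exponent matters, here is a minimal Python sketch comparing the three candidates discussed so far: linear scaling (exponent 1), Rubner's surface hypothesis (2/3), and Kleiber's observed value (3/4). It uses the mass ratios from the mouse, hamster, and hippo example. The helper name `relative_metabolic_rate` is hypothetical, and the outputs follow from the exponents alone, not from any measured data.

```python
def relative_metabolic_rate(mass_ratio, exponent):
    """Metabolic rate relative to the mouse, if rate is proportional to mass**exponent."""
    return mass_ratio ** exponent

# Mass ratios relative to the mouse, taken from the example in the text.
animals = {"hamster": 8.0, "hippo": 125000.0}
hypotheses = {"linear": 1.0, "surface (2/3)": 2.0 / 3.0, "Kleiber (3/4)": 3.0 / 4.0}

for animal, mass_ratio in animals.items():
    for label, exponent in hypotheses.items():
        rate = relative_metabolic_rate(mass_ratio, exponent)
        print(f"{animal:8s} {label:14s} -> {rate:,.1f} x mouse rate")
```

For the hippo, the 3/4 exponent predicts a metabolic rate between the surface-hypothesis value of 2,500 times the mouse's rate and the linear value of 125,000 times.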

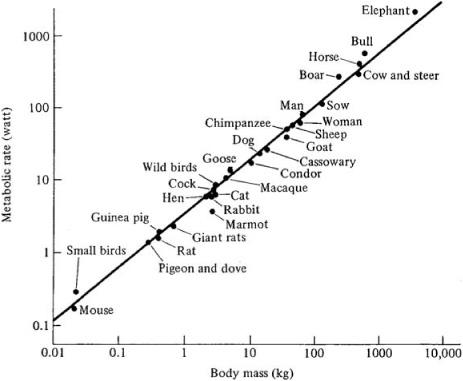

Figure 17.2 illustrates such scaling for a number of different animals. The horizontal axis gives the body mass in kilograms and the vertical axis gives the average basal metabolic rate measured in watts. The labeled dots are the actual measurements for different animals, and the straight line is a plot of metabolic rate scaling with body mass to exactly the three-fourths power. The data do not exactly fit this line, but they are pretty close. Figure 17.2 is a special kind of plot, technically called a double logarithmic (or log-log) plot, in which the numbers on both axes increase by a factor of ten with each tick on the axis. If you plot a power law on a double logarithmic plot, it will look like a straight line, and the slope of that line will be equal to the power law’s exponent. (See the notes for an explanation of this.)

FIGURE 17.2. Metabolic rate of various animals as a function of their body mass. (From K. Schmidt-Nielsen, Scaling: Why Is Animal Size So Important? Copyright © 1984 by Cambridge University Press. Reprinted with permission of Cambridge University Press.)
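The reason a power law looks like a straight line on a log-log plot is that taking logarithms of y = c × x^a gives log y = log c + a × log x, which is the equation of a line with slope a. The Python sketch below illustrates this on synthetic data; the constant 3.0 and the list of masses are made-up values chosen for illustration, not Kleiber's measurements.

```python
import math

def loglog_slope(xs, ys):
    """Least-squares slope of log10(y) plotted against log10(x)."""
    lx = [math.log10(x) for x in xs]
    ly = [math.log10(y) for y in ys]
    mean_x = sum(lx) / len(lx)
    mean_y = sum(ly) / len(ly)
    num = sum((a - mean_x) * (b - mean_y) for a, b in zip(lx, ly))
    den = sum((a - mean_x) ** 2 for a in lx)
    return num / den

# Synthetic power-law data: rate = 3.0 * mass^0.75 (hypothetical constant).
masses = [0.01, 0.1, 1.0, 10.0, 100.0, 1000.0]
rates = [3.0 * m ** 0.75 for m in masses]

print(f"fitted slope = {loglog_slope(masses, rates):.3f}")
```

On real measurements the points scatter around the line, so the fitted slope is only an estimate of the exponent; much of the later controversy over 2/3 versus 3/4 is about exactly this kind of fit.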

This power law relation is now called Kleiber’s law. Such 3/4-power scaling has more recently been claimed to hold not only for mammals and birds, but also for the metabolic rates of many other living beings, such as fish, plants, and even single-celled organisms.

Kleiber’s law is based only on observation of metabolic rates and body masses; Kleiber offered no explanation for why his law was true. In fact, Kleiber’s law was baffling to biologists for over fifty years. The mass of living systems has a huge range: from bacteria, which weigh less than one one-trillionth of a gram, to whales, which can weigh over 100 million grams. Not only does the law defy simple geometric reasoning; it is also surprising that such a law seems to hold so well for organisms over such a vast variety of sizes, species types, and habitat types. What common aspect of nearly all organisms could give rise to this simple, elegant law?

Several other related scaling relationships had also long puzzled biologists. For example, the larger a mammal is, the longer its life span. The life span for a mouse is typically two years or so; for a pig it is more like ten years, and for an elephant it is over fifty years. There are some exceptions to this general rule, notably humans, but it holds for most mammalian species. It turns out that if you plot average life span versus body mass for many different species, the relationship is a power law with exponent 1/4. If you plot average heart rate versus body mass, you get a power law with exponent −1/4 (the larger an animal, the slower its heart rate). In fact, biologists have identified a large collection of such power law relationships, all having fractional exponents with a 4 in the denominator. For that reason, all such relationships have been called quarter-power scaling laws. Many people suspected that these quarter-power scaling laws were a signature of something very important and common in all these organisms. But no one knew what that important and common property was.

An Interdisciplinary Collaboration

By the mid-1990s, James Brown, an ecologist and professor at the University of New Mexico, had been thinking about the quarter-power scaling problem for many years. He had long realized that solving this problem—understanding the reason for these ubiquitous scaling laws—would be a key step in developing any general theory of biology. A biology graduate student named Brian Enquist, also deeply interested in scaling issues, came to work with Brown, and they attempted to solve the problem together.

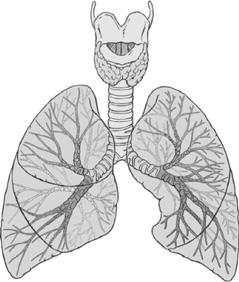

Brown and Enquist suspected that the answer lay somewhere in the structure of the systems in organisms that transport nutrients to cells. Blood constantly circulates in blood vessels, which form a branching network that carries nutrient chemicals to all cells in the body. Similarly, the branching structures in the lungs, called bronchi, carry oxygen from the lungs to the blood vessels that feed it into the blood (figure 17.3). Brown and Enquist believed that it is the universality of such branching structures in animals that gives rise to the quarter-power laws. In order to understand how such structures might give rise to quarter-power laws, they needed to figure out how to describe these structures mathematically and to show that the math leads directly to the observed scaling laws.

Most biologists, Brown and Enquist included, do not have the math background necessary to construct such a complex geometric and topological analysis. So Brown and Enquist went in search of a “math buddy”—a mathematician or theoretical physicist who could help them out with this problem but not simplify it so much that the biology would get lost in the process.

Left to right: Geoffrey West, Brian Enquist, and James Brown. (Photograph copyright © by Santa Fe Institute. Reprinted with permission.)

FIGURE 17.3. Illustration of bronchi, branching structures in the lungs. (Illustration by Patrick Lynch, licensed under Creative Commons [http://creativecommons.org/licenses/by/3.0/].)

Enter Geoffrey West, who fit the bill perfectly. West, a theoretical physicist then working at Los Alamos National Laboratory, had the ideal mathematical skills to address the scaling problem. Not only had he already worked on the topic of scaling, albeit in the domain of quantum physics, but he himself had been mulling over the biological scaling problem as well, without knowing very much about biology. Brown and Enquist encountered West at the Santa Fe Institute in the mid-1990s, and the three began to meet weekly at the institute to forge a collaboration. I remember seeing them there once a week, in a glass-walled conference room, talking intently while someone (usually Geoffrey) was scrawling reams of complex equations on the white board. (Brian Enquist later described the group’s math results as “pyrotechnics.”) I knew only vaguely what they were up to. But later, when I first heard Geoffrey West give a lecture on their theory, I was awed by its elegance and scope. It seemed to me that this work was at the apex of what the field of complex systems had accomplished.

Brown, Enquist, and West had developed a theory that not only explained Kleiber’s law and other observed biological scaling relationships but also predicted a number of new scaling relationships in living systems. Many of these have since been supported by data. The theory, called metabolic scaling theory (or simply metabolic theory), combines biology and physics in equal parts, and has ignited both fields with equal parts excitement and controversy.

Power Laws and Fractals

Metabolic scaling theory answers two questions: (1) why metabolic scaling follows a power law at all; and (2) why it follows the particular power law with exponent 3/4. Before I describe how it answers these questions, I need to take a brief diversion to describe the relationship between power laws and fractals.

Remember the Koch curve and our discussion of fractals from chapter 7? If so, you might recall the notion of “fractal dimension.” We saw that in the Koch curve, at each level the line segments were one-third the length of the previous level, and the structure at each level was made up of four copies of the structure at the previous level. In analogy with the traditional definition of dimension, we defined the fractal dimension of the Koch curve this way: $3^{\text{dimension}} = 4$, which yields dimension ≈ 1.26. More generally, if each level is scaled by a factor of $x$ from the previous level and is made up of $N$ copies of the previous level, then $x^{\text{dimension}} = N$. Now, after having read chapter 15, you can recognize that this is a power law, with dimension as the exponent. This illustrates the intimate relationship between power laws and fractals. Power law distributions, as we saw in chapter 15, figure 15.6, are fractals: they are self-similar at all scales of magnification, and a power law’s exponent gives the dimension of the corresponding fractal (cf. chapter 7), where the dimension quantifies precisely how the distribution’s self-similarity scales with level of magnification. Thus one could say, for example, that the degree distribution of the Web has a fractal structure, since it is self-similar. Similarly one could say that a fractal like the Koch curve gives rise to a power law, namely the one that describes precisely how the curve’s self-similarity scales with level of magnification.
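Solving the relation "scale factor raised to the dimension equals the number of copies" for the dimension just means taking logarithms of both sides: dimension = log N / log x. A minimal Python sketch of that calculation follows; the function name is illustrative.

```python
import math

def fractal_dimension(scale_factor, copies):
    """Solve scale_factor ** dimension == copies for dimension."""
    return math.log(copies) / math.log(scale_factor)

# Koch curve: segments shrink by a factor of 3, and each level has 4 copies.
print(f"Koch curve: {fractal_dimension(3, 4):.2f}")

# Sanity checks with ordinary shapes: halving the side of a square gives
# 4 smaller copies (dimension 2); halving the side of a cube gives 8 (dimension 3).
print(f"square: {fractal_dimension(2, 4):.2f}")
print(f"cube:   {fractal_dimension(2, 8):.2f}")
```

The ordinary shapes recover their familiar whole-number dimensions, while the Koch curve lands between a line and a plane.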

The take-home message is that fractal structure is one way to generate a power-law distribution; and if you happen to see that some quantity (such as metabolic rate) follows a power-law distribution, then you can hypothesize that there is something about the underlying system that is self-similar or “fractal-like.”

Metabolic Scaling Theory

Since metabolic rate is the rate at which the body’s cells turn fuel into energy, Brown, Enquist, and West reasoned that metabolic rate must be largely determined by how efficiently that fuel is delivered to cells. It is the job of the organism’s circulatory system to deliver this fuel.

Brown, Enquist, and West realized that the circulatory system is not just characterized in terms of its mass or length, but rather in terms of its network structure. As West pointed out, “You really have to think in terms of two separate scales—the length of the superficial you and the real you, which is made up of networks.”

In developing their theory, Brown, Enquist, and West assumed that evolution has produced circulatory and other fuel-transport networks that are maximally “space filling” in the body; that is, networks that can transport fuel to cells in every part of the body. They also assumed that evolution has designed these networks to minimize the energy and time that are required to distribute this fuel to cells. Finally, they assumed that the “terminal units” of the network, the sites where fuel is provided to body tissue, do not scale with body mass, but rather are approximately the same size in small and large organisms. This property has been observed, for example, with capillaries in the circulatory system, which are the same size in most animals. Big animals just have more of them. One reason for this is that cells themselves do not scale with body size: individual mouse and hippo cells are roughly the same size. The hippo just has more cells so needs more capillaries to fuel them.

The maximally space-filling geometric objects are indeed fractal branching structures—the self-similarity at all scales means that space is equally filled at all scales. What Brown, Enquist, and West were doing in the glass-walled conference room all those many weeks and months was developing an intricate mathematical model of the circulatory system as a space-filling fractal. They adopted the energy-and-time-minimization and constant-terminal-unit-size assumptions given above, and asked, What happens in the model when body mass is scaled up? Lo and behold, their calculations showed that in the model, the rate at which fuel is delivered to cells, which determines metabolic rate, scales with body mass to the 3/4 power.

The mathematical details of the model that lead to the 3/4 exponent are rather complicated. However, it is worth commenting on the group’s interpretation of the 3/4 exponent. Recall my discussion above of Rubner’s surface hypothesis: that metabolic rate must scale with body mass in the same way that surface area scales with volume, namely, to the 2/3 power. One way to look at the 3/4 exponent is that it would be the result of the surface hypothesis applied to four-dimensional creatures! We can see this via a simple dimensional analogy. A two-dimensional object such as a circle has a circumference and an area. In three dimensions, these correspond to surface area and volume, respectively. In four dimensions, surface area and volume correspond, respectively, to “surface” volume and what we might call hypervolume, a quantity that is hard to imagine since our brains are wired to think in three, not four, dimensions. Using arguments that are analogous to the discussion of how surface area scales with volume to the 2/3 power, one can show that in four dimensions surface volume scales with hypervolume to the 3/4 power.
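The dimensional analogy can be written out in a few lines. This is only a sketch of the geometric argument, not the group's model; the symbols $d$ (number of dimensions), $r$ (radius), $V_d$ (the $d$-dimensional "volume"), and $S_d$ (its boundary) are notation introduced here for illustration.

$$
V_d \propto r^{d}, \qquad S_d \propto r^{d-1}
\quad\Longrightarrow\quad
r \propto V_d^{1/d}
\quad\Longrightarrow\quad
S_d \propto V_d^{(d-1)/d}.
$$

Setting $d = 2$ gives circumference scaling as area to the $1/2$ power, $d = 3$ gives the familiar $2/3$ of the surface hypothesis, and $d = 4$ gives $3/4$.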

In short, what Brown, Enquist, and West are saying is that evolution structured our circulatory systems as fractal networks to approximate a “fourth dimension” so as to make our metabolisms more efficient. As West, Brown, and Enquist put it, “Although living things occupy a three-dimensional space, their internal physiology and anatomy operate as if they were four-dimensional … Fractal geometry has literally given life an added dimension.”

Scope of the Theory

In its original form, metabolic scaling theory was applied to explain metabolic scaling in many animal species, such as those plotted in figure 17.2. However, Brown, Enquist, West, and their increasing cadre of new collaborators did not stop there. Every few weeks, it seems, a new class of organisms or phenomena is added to the list covered by the theory. The group has claimed that their theory can also be used to explain other quarter-power scaling laws such as those governing heart rate, life span, gestation time, and time spent sleeping.

The group also believes that the theory explains metabolic scaling in plants, many of which use fractal-like vascular networks to transport water and other nutrients. They further claim that the theory explains the quarter-power scaling laws for tree trunk circumference, plant growth rates, and several other aspects of animal and plant organisms alike. A more general form of the metabolic scaling theory that includes body temperature was proposed to explain metabolic rates in reptiles and fish.

Moving to the microscopic realm, the group has postulated that their theory applies at the cellular level, asserting that 3/4 power metabolic scaling predicts the metabolic rate of single-celled organisms as well as of metabolic-like, molecule-sized distribution processes inside the cell itself, and even to metabolic-like processes inside components of cells such as mitochondria. The group also proposed that the theory explains the rate of DNA changes in organisms, and thus is highly relevant to both genetics and evolutionary biology. Others have reported that the theory explains the scaling of mass versus growth rate in cancerous tumors.

In the realm of the very large, metabolic scaling theory and its extensions have been applied to entire ecosystems. Brown, Enquist, and West believe that their theory explains the observed −3/4 scaling of species population density with body size in certain ecosystems.

In fact, because metabolism is so central to all aspects of life, it’s hard to find an area of biology that this theory doesn’t touch on. As you can imagine, this has got many scientists very excited and looking for new places to apply the theory. Metabolic scaling theory has been said to have “the potential to unify all of biology” and to be “as potentially important to biology as Newton’s contributions are to physics.” In one of their papers, the group themselves commented, “We see the prospects for the emergence of a general theory of metabolism that will play a role in biology similar to the theory of genetics.”

Controversy

As is to be expected for a relatively new, high-profile theory that claims to explain so much, while some scientists are bursting with enthusiasm for metabolic scaling theory, others are roiling with criticism. Here are the two main criticisms that are currently being published in some of the top scientific journals:

· Quarter-power scaling laws are not as universal as the theory claims. As a rule, given any proposed general property of living systems, biology exhibits exceptions to the rule. (And maybe even exceptions to this rule itself.) Metabolic scaling theory is no exception, so to speak. Although most biologists agree that a large number of species seem to follow the various quarter-power scaling laws, there are also many exceptions, and sometimes there is considerable variation in metabolic rate even within a single species. One familiar example is dogs, in which smaller breeds tend to live at least as long as larger breeds. It has been argued that, while Kleiber’s law represents a statistical average, the variations from this average can be quite large, and metabolic theory does not explain this because it takes into account only body mass and temperature. Others have argued that there are laws predicted by the theory that real-world data strongly contradict. Still others argue that Kleiber was wrong all along, and the best fit to the data is actually a power law with exponent 2/3, as proposed over one hundred years ago by Rubner in his surface hypothesis. In most cases, this is an argument about how to correctly interpret data on metabolic scaling and about what constitutes a “fit” to the theory. The metabolic scaling group stands by its theory, and has diligently replied to many of these arguments, which become increasingly technical and obscure as the authors discuss the intricacies of advanced statistics and biological functions.

· The Kleiber scaling law is valid but the metabolic scaling theory is wrong. Others have argued that metabolic scaling theory is oversimplified, that life is too complex and varied to be covered by one overreaching theory, and that positing fractal structure is by no means the only way to explain the observed power-law distributions. One ecologist put it this way: “The more detail that one knows about the particular physiology involved, the less plausible these explanations become.” Another warned, “It’s nice when things are simple, but the real world isn’t always so.” Finally, there have been arguments that the mathematics in metabolic scaling theory is incorrect. The authors of metabolic scaling theory have vehemently disagreed with these critiques and in some cases have pointed out what they believed to be fundamental mistakes in the critics’ mathematics.

The authors of metabolic scaling theory have strongly stood by their work and expressed frustration about criticisms of details. As West said, “Part of me doesn’t want to be cowered by these little dogs nipping at our heels.” However, the group also recognizes that a deluge of such criticisms is a good sign—whatever they end up believing, a very large number of people have sat up and taken notice of metabolic scaling theory. And of course, as I have mentioned, skepticism is one of the most important jobs of scientists, and the more prominent the theory and the more ambitious its claims are, the more skepticism is warranted.

The arguments will not end soon; after all, Newton’s theory of gravity was not widely accepted for more than sixty years after it first appeared, and many other of the most important scientific advances have faced similar fates. The main conclusion we can reach is that metabolic scaling theory is an exceptionally interesting idea with a huge scope and some experimental support. As ecologist Helene Müller-Landau predicts: “I suspect that West, Enquist et al. will continue repeating their central arguments and others will continue repeating the same central critiques, for years to come, until the weight of evidence finally leads one way or the other to win out.”

The Unresolved Mystery of Power Laws

We have seen a lot of power laws in this and the previous chapters. In addition to these, power-law distributions have been identified for the size of cities, people’s incomes, earthquakes, variability in heart rate, forest fires, and stock-market volatility, to name just a few phenomena.

As I described in chapter 15, scientists typically assume that most natural phenomena are distributed according to the bell curve or normal distribution. However, power laws are being discovered in such a great number and variety of phenomena that some scientists are calling them “more normal than ‘normal.’” In the words of mathematician Walter Willinger and his colleagues: “The presence of [power-law] distributions in data obtained from complex natural or engineered systems should be considered the norm rather than the exception.”

Scientists have a pretty good handle on what gives rise to bell curve distributions in nature, but power laws are something of a mystery. As we have seen, there are many different explanations for the power laws observed in nature (e.g., preferential attachment, fractal structure, self-organized criticality, highly optimized tolerance, among others), and little agreement on which observed power laws are caused by which mechanisms.

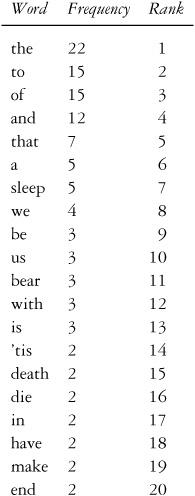

In the early 1930s, a Harvard professor of linguistics, George Kingsley Zipf, published a book in which he included an interesting property of language. First take any large text such as a novel or a newspaper, and list each word in the order of how many times it appears. For example, here is a partial list of words and frequencies from Shakespeare’s “To be or not to be” monologue from the play Hamlet:

Putting this list in order of decreasing frequencies, we can assign a rank of 1 to the most frequent word (here, “the”), a rank of 2 to the second most frequent word, and so on. Some words are tied for frequency (e.g., “a” and “sleep” both have five occurrences). Here, I have broken ties for ranking at random.

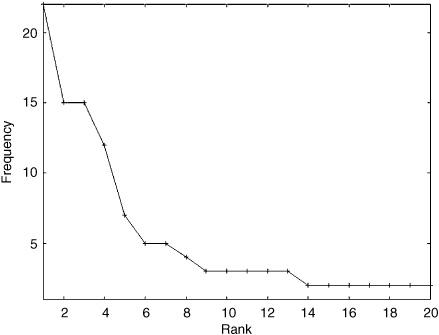

In figure 17.4, I have plotted the to-be-or-not-to-be word frequency as a function of rank. The shape of the plot indeed looks like a power law. If the text I had chosen had been larger, the graph would have looked even more power-law-ish.

Zipf analyzed large amounts of text in this way (without the help of computers!) and found that, given a large text, the frequency of a word is approximately proportional to the inverse of its rank (i.e., 1/rank). This is a power law, with exponent −1. The second highest ranked word will appear about half as often as the first, the third about one-third as often, and so forth. This relation is now called Zipf’s law, and is perhaps the most famous of known power laws.

FIGURE 17.4. An illustration of Zipf’s law using Shakespeare’s “To be or not to be” monologue.
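The counting-and-ranking procedure described above is easy to sketch in Python. The example below uses only the opening lines of the monologue as a small sample, so its counts differ from those of the full monologue discussed in the text. It also breaks ties by first appearance rather than at random. The function name `rank_frequency` and the simple tokenizing rule are assumptions made for illustration.

```python
import re
from collections import Counter

# Opening lines of the monologue, used only as a small sample text.
SAMPLE = """
To be, or not to be, that is the question:
Whether 'tis nobler in the mind to suffer
The slings and arrows of outrageous fortune,
Or to take arms against a sea of troubles
And by opposing end them.
"""

def rank_frequency(text):
    """Return (rank, word, count) tuples, most frequent word first."""
    words = re.findall(r"[a-z']+", text.lower())
    counts = Counter(words).most_common()  # ties keep first-seen order
    return [(rank, word, count)
            for rank, (word, count) in enumerate(counts, start=1)]

table = rank_frequency(SAMPLE)
top_count = table[0][2]
for rank, word, count in table[:5]:
    # Zipf's law guesses that frequency falls off as (top frequency) / rank.
    zipf_guess = top_count / rank
    print(f"{rank:>2} {word:<6} observed={count} zipf={zipf_guess:.1f}")
```

With so little text the fit is rough; as noted above, a larger text would be expected to follow the 1/rank pattern more closely.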

There have been many different explanations proposed for Zipf’s law. Zipf himself proposed that, on the one hand, people in general operate by a “Principle of Least Effort”: once a word has been used, it takes less effort to use it again for similar meanings than to come up with a different word. On the other hand, people want language to be unambiguous, which they can accomplish by using different words for similar but nonidentical meanings. Zipf showed mathematically that these two pressures working together could produce the observed power-law distribution.

In the 1950s, Benoit Mandelbrot, of fractal fame, had a somewhat different explanation, in terms of information content. Following Claude Shannon’s formulation of information theory (cf. chapter 3), Mandelbrot considered a word as a “message” being sent from a “source” who wants to maximize the amount of information while minimizing the cost of sending that information. For example, the words feline and cat mean the same thing, but the latter, being shorter, costs less (or takes less energy) to transmit. Mandelbrot showed that if the information content and transmission costs are simultaneously optimized, the result is Zipf’s law.

At about the same time, Herbert Simon proposed yet another explanation, presaging the notion of preferential attachment. Simon envisioned a person adding words one at a time to a text. He proposed that at any time, the probability of that person reusing a word is proportional to that word’s current frequency in the text. All words that have not yet appeared have the same, nonzero probability of being added. Simon showed that this process results in text that follows Zipf’s law.
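Simon's process lends itself to a short simulation. The Python sketch below is one simple reading of the description above, not Simon's own formulation: at each step a brand-new word is added with a fixed probability (the parameter `new_word_prob`, a value assumed here), and otherwise an earlier token is copied at random, which reuses each word with probability proportional to its current frequency. Words are represented by integer labels.

```python
import random
from collections import Counter

def simon_text(n_words, new_word_prob, seed=0):
    """Generate a 'text' of integer word labels by Simon-style reuse."""
    rng = random.Random(seed)
    text = [0]        # start with a single word, labelled 0
    next_label = 1
    while len(text) < n_words:
        if rng.random() < new_word_prob:
            # Add a word that has not appeared before.
            text.append(next_label)
            next_label += 1
        else:
            # Copying a uniformly random earlier token reuses each word
            # with probability proportional to its current frequency.
            text.append(rng.choice(text))
    return text

text = simon_text(20000, new_word_prob=0.05)
counts = sorted(Counter(text).values(), reverse=True)
print("distinct words:", len(counts))
print("ten highest frequencies:", counts[:10])
```

Plotting `counts` against rank on a log-log plot is one way to check how close the simulated text comes to a straight line.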

Evidently Mandelbrot and Simon had a rather heated argument (via dueling letters to the journal Information and Control) about whose explanation was correct.

Finally, also around the same time, to everyone’s amusement or chagrin, the psychologist George Miller showed, using simple probability theory, that the text generated by monkeys typing randomly on a keyboard, ending a word every time they (randomly) hit the space bar, will follow Zipf’s law as well.
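Miller's typing monkeys can be simulated in a few lines as well. In the Python sketch below, the four-letter alphabet, the space-bar probability of 0.2, and the number of keystrokes are arbitrary choices made for illustration; Miller's argument itself was analytical, not a simulation.

```python
import random
from collections import Counter

def monkey_words(n_keystrokes, alphabet="abcd", space_prob=0.2, seed=0):
    """Type random keys; a space ends the current word."""
    rng = random.Random(seed)
    keys = []
    for _ in range(n_keystrokes):
        if rng.random() < space_prob:
            keys.append(" ")
        else:
            keys.append(rng.choice(alphabet))
    # split() with no argument drops the empty "words" between repeated spaces.
    return "".join(keys).split()

words = monkey_words(200000)
ranked = Counter(words).most_common()
for rank, (word, count) in enumerate(ranked[:8], start=1):
    print(f"{rank:>2} {word:<4} {count}")
```

Short "words" are far more likely than long ones, and there are many more possible long words than short ones; together these two facts produce a frequency-versus-rank curve that falls off roughly like a power law, with no meaning or effort involved.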

The many explanations of Zipf’s law proposed in the 1930s through the 1950s epitomize the arguments going on at present concerning the physical or informational mechanisms giving rise to power laws in nature. Understanding power-law distributions, their origins, their significance, and their commonalities across disciplines is currently a very important open problem in many areas of complex systems research. It is an issue I’m sure you will hear more about as the science behind these laws becomes clearer.