Collider: The Search for the World's Smallest Particles - Paul Halpern (2009)

Chapter 3. Striking Gold: Rutherford’s Scattering Experiments

Now I know what the atom looks like!

—ERNEST RUTHERFORD, 1911

In a remote farming region of the country the Maoris call Aotearoa, the Land of the Long White Cloud, a young settler was digging potatoes. With mighty aim, the boy broke up the soil and shoveled the crop that would support his family in troubling times. Though he had little chance of striking gold—unlike other parts of New Zealand, his region didn’t have much—he was nevertheless destined for a golden future.

Ernest Rutherford, who would become the first to split open the atom, was born to a family of early New Zealand settlers. His grandfather, George Rutherford, a wheelwright from Dundee, Scotland, had come to the Nelson colony at the northern tip of the South Island to help assemble a sawmill. Once the mill was established, the elder Rutherford moved his family to the village of Spring Grove (now called Brightwater) south of Nelson in the Wairoa River valley. There, George’s son James, a flax maker, married an English settler named Martha, who gave birth to Ernest on August 30, 1871.

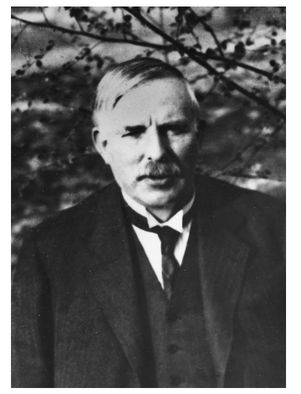

Ernest Rutherford (1871-1937), the father of nuclear physics.

Attending school in Nelson and university at Canterbury College in Christchurch, the largest and most English city on the South Island, Rutherford proved diligent and capable. A fellow student described him as a “boyish, frank, simple, and very likable youth, with no precocious genius, but once he saw his goal, he went straight to the central point.”1

Rutherford’s nimble hands could work wonders with any kind of mechanical device. His youthful pursuits would prepare him well for his deft manipulation of atoms and their nuclei. With surgical dexterity, he disassembled clocks, constructed working models of a water mill, and put together a homemade camera that he used to snap pictures. At Canterbury, he became fascinated by the electromagnetic discoveries taking place in Europe, and decided to build his own instruments. Following in the footsteps of Heinrich Hertz, he constructed a radio transmitter and receiver—research that would anticipate Guglielmo Marconi’s invention of the wireless telegraph. Rutherford showed how radio waves could travel long distances, penetrate walls, and magnetize iron. His clever undertakings would make him an attractive candidate for a new research program in Cambridge, England.

Coincidentally, in the year of Rutherford’s birth, a new physical laboratory had been established at Cambridge, with Maxwell its first director. Named after the esteemed physicist Henry Cavendish, who, among other accomplishments, was the first to isolate the element hydrogen, Cavendish Laboratory would become the world’s leading center for atomic physics. Its original location was near the center of the venerable university town on a narrow side street called Free School Lane. Maxwell had supervised its construction and planned out its equipment, making it the first laboratory in the world dedicated to physics research. Following Maxwell’s death in 1879, another well-known physicist, Lord Rayleigh, had assumed the directorship. Then, in 1884, that mantle passed to the extraordinary leadership of J. J. (Joseph John) Thomson.

An intense intellectual with long, dark hair, wire-framed glasses, and a scruffy mustache, Thomson adroitly presided over a revolution in scientific education that allowed students vastly more opportunities for research. For physics students of earlier times, experimental research was merely the dessert course of a long banquet of mathematical studies—a treat that their tutors would sometimes only grudgingly allow them to partake of. After satisfying their requirements with theoretical examinations of mechanics, heat, optics, and so forth, students would perhaps get a chance to sample some of the laboratory apparatus. At Cavendish, with its state-of-the-art equipment, these brief tastes would become a much richer meal in their own right. Thomson was pleased to take advantage of a new program allowing students from other universities to come to Cambridge, perform supervised laboratory research, write up their results in a thesis, and receive a postgraduate degree. Today we are accustomed to research Ph.D.s—it’s the bread and butter of academia—but back in the late nineteenth century the concept was novel. Such graduate student assistance would help spark the revolution in physics soon to follow.

The new program began in 1895, with Rutherford one of the first invitees. He was funded through an 1851 Exhibition scholarship offered to talented young inhabitants of the British Empire (now the Commonwealth). His move from rural New Zealand to academic Cambridge would prove extraordinary not only for his own career but also for the history of atomic physics.

The moment Rutherford encountered his fate is a matter of legend. Reportedly, his mother received a telegram bearing the good news and brought it out to the potato garden where he was digging. When she told him what he had won, at first he couldn’t believe his ears. After the realization sank in, he tossed his spade aside and exclaimed, “That’s the last potato I’ll dig.”2

Bringing his homemade radio detector along, Rutherford sailed to London, where he promptly slipped on a banana peel and injured his knee. Fortunately, the country lad had no more missteps as he made his way through the smoky, labyrinthine city. Journeying north, he left the smoke for the fresh air of the English countryside and arrived at the hallowed jumble of colleges and courtyards on the River Cam. There he took up residence at Trinity College, founded in 1546 by King Henry VIII, where the arched Great Gate and legends of Newton’s feats tower over nervous entering students. (Cambridge is organized into a number of residential colleges, of which Trinity is the largest.) From Trinity, Cavendish was just a short, pleasant walk away.

Along with Rutherford, the labs of Cambridge were soon filled with research students from around the world. Reveling in the cosmopolitan atmosphere, Thomson invited his young assistants for tea in his office every afternoon. As Thomson recalled, “We discussed almost every subject under the Sun except physics. I did not encourage talking about physics because the meeting was intended as a relaxation … and also because the habit of talking ‘shop’ is very easy to acquire but very hard to cure, and if it is not cured the power of taking part in a general conversation may become atrophied for want of use.”3

Despite Thomson’s efforts to help young researchers lighten up, the pressures at Cambridge must have been intense. “When I come home from researching, I can’t keep quiet for a minute and generally get in a rather nervous state,” Rutherford once wrote. His solution to his nervousness was to take up pipe smoking, a habit he would maintain for life. “If I took to smoking occasionally,” he continued, “it would keep me anchored a bit… . Every scientific man ought to smoke as he has to have the patience of a dozen Jobs in research work.”4

To make matters worse, many of the traditional students viewed the newcomers as interlopers. Taunted by some of his upper-crust colleagues as a yokel from the antipodes, Rutherford bore an extra burden. About one such mocker, he commented, “There is one demonstrator on whose chest I should like to dance a Maori war-dance.”5

Thomson was a meticulous experimentalist and had been engaged for a time in his own explorations of the properties of electricity. Constructing a clever apparatus, he investigated the combined effects of electric and magnetic fields on what were known as cathode rays: negatively charged beams of electricity passing between negatively and positively charged electrodes (terminals attached by wires to each end of a battery). The negative electrode produces the cathode rays and the positive electrode attracts them.

Electric and magnetic fields affect charges in different ways. Applying an electric field to a moving negative charge creates a force opposite to the field’s direction. In contrast, a magnetic field generates a force at right angles to both the field’s direction and the charge’s motion. Also, unlike electric forces, magnetic forces depend on the charges’ velocities. Thomson found a way of balancing the electric and magnetic forces in a manner that revealed the rays’ speed, which he used to determine the ratio of their charge to their mass. Assuming that these rays bear the same charge as ionized hydrogen, he found their mass to be roughly a thousand times smaller than hydrogen’s (modern measurements put the ratio at about 1,836). In other words, cathode rays consist of elementary particles much, much lighter than atoms. Repeating the experiment numerous times under a variety of conditions, he always got the same results. Thomson called these negatively charged particles corpuscles, but they were later dubbed electrons, a name that has stuck. They offered the first glimpse of an intricate world within the atom.
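The balancing trick can be sketched numerically. What follows is a simplified textbook version, not Thomson's actual procedure or data. It assumes crossed electric and magnetic fields tuned so the beam passes undeflected (so qE = qvB and v = E/B), followed by a second measurement with the electric field off, in which the magnetic field alone bends the beam into a circular arc of radius r. The field strengths and the radius are hypothetical values chosen to mimic a real electron beam.

```python
# Simplified sketch of a Thomson-style charge-to-mass measurement.
# All apparatus values below are hypothetical, not Thomson's data.

ELEMENTARY_CHARGE = 1.602176634e-19  # coulombs
PROTON_MASS = 1.67262192e-27         # kg (mass of ionized hydrogen)


def speed_from_balanced_fields(e_field, b_field):
    """Undeflected beam in crossed fields: qE = qvB, so v = E / B."""
    return e_field / b_field


def charge_to_mass(e_field, b_field, radius):
    """Magnetic field alone bends the beam: qvB = m v**2 / r, so q/m = v / (B r)."""
    v = speed_from_balanced_fields(e_field, b_field)
    return v / (b_field * radius)


# Hypothetical apparatus settings
E_FIELD = 1.0e4   # volts per metre
B_FIELD = 1.0e-3  # tesla
RADIUS = 0.0569   # metres, radius of the magnetically bent beam

speed = speed_from_balanced_fields(E_FIELD, B_FIELD)
ray_q_over_m = charge_to_mass(E_FIELD, B_FIELD, RADIUS)
hydrogen_q_over_m = ELEMENTARY_CHARGE / PROTON_MASS

# With equal charges, the mass ratio is the inverse ratio of the q/m values.
hydrogen_to_ray_mass = ray_q_over_m / hydrogen_q_over_m

print(f"speed = {speed:.2e} m/s")
print(f"q/m of rays = {ray_q_over_m:.3e} C/kg")
print(f"hydrogen ion is about {hydrogen_to_ray_mass:.0f} times heavier")
```

Because the hypothetical radius was picked to match a real electron beam, the printed ratio lands close to the modern value of about 1,836.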

Initially, Thomson’s phenomenal discovery was met with skepticism. As he recalled, “At first there were very few who believed in the existence of these bodies smaller than atoms. I was even told long afterwards by a distinguished physicist who had been present at my lecture at the Royal Institution that he thought I had been ‘pulling their legs.’ I was not surprised at this, as I had myself come to this explanation with great reluctance, and it was only after I was convinced that the experiment left no escape from it that I published my belief in the existence of bodies smaller than atoms.”6

Meanwhile, on the other side of the English Channel, the discovery of radioactive decay challenged the notion of atomic permanence. In 1896, Parisian physicist Henri Becquerel scattered uranium salts over a photographic plate wrapped in black paper, and was astonished to find that the plate darkened over time due to mysterious rays produced by the salts. Unlike the X-ray radiation found by Roentgen, Becquerel’s rays emerged spontaneously without the need for electrical apparatus. Becquerel found that any type of compound containing uranium gave off these rays, at a rate proportional to the amount of uranium, suggesting that the uranium atoms themselves were producing the radiation.

Similarly working in Paris, Polish-born physicist Marie Curie confirmed Becquerel’s findings and, along with her husband, Pierre, extended them to two new elements they discovered: radium and polonium. These elements emitted radiation at a higher rate than uranium and diminished in quantity over time. She coined the term radioactivity to describe the phenomenon of atoms spontaneously breaking down by giving off radiation. For their monumental discovery of the impermanence of atoms through radioactive processes, replacing Dalton’s century-old static concept with a more dynamic vision, Becquerel and the Curies would share the 1903 Nobel Prize.

Rutherford followed these developments with great interest. While his mentor Thomson was engaged in discovering the electron, Rutherford concentrated his attention on using radioactive materials as a source for ionizing gases. Somehow the emissions from uranium and other radioactive materials seemed to have the property of knocking the electrical neutrality out of surrounding gases, transforming them into electrically active conductors. The radiation seemed to perform the same function as rubbing dry sticks together and producing a spark.

Radioactivity ignited Rutherford’s curiosity as well and launched him on a rigorous investigation of its properties that would revolutionize physics. From a novice keen on developing radio detectors and other electromagnetic devices, he would emerge from his experience an extraordinary experimentalist adept at using radiation to decipher the world of the atom. Using the property that magnetic fields steer differently charged particles along distinct paths, he determined that radioactive materials produce positive and negative kinds of emissions, which he named, respectively, alpha and beta particles. (Beta particles turned out to be simply electrons. Villard discovered gamma radiation, a third, electrically neutral type, shortly after Rutherford’s classification.) Magnetic fields cause alpha particles to spiral in one direction and beta in the other—like horses racing in opposite directions around a circular track. Testing the ability of each kind of radiation to be stopped by barriers, he demonstrated that alpha particles are more easily blocked than beta. This suggested that alpha particles are larger in size than beta.
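Why opposite charges curve in opposite directions follows from the magnetic force law, F = qv × B. The sketch below is a modern illustration, not Rutherford's procedure. It assumes a particle moving along x through a 1 tesla field along z, and uses hypothetical speeds of 1.5 × 10^7 m/s for the alpha particle and 1.0 × 10^8 m/s for the beta particle. It also computes each orbit's radius, r = p/(|q|B), using relativistic momentum for the fast beta particle.

```python
import math

ELEMENTARY_CHARGE = 1.602176634e-19  # C
SPEED_OF_LIGHT = 2.99792458e8        # m/s
ALPHA_MASS = 6.6446573e-27           # kg (helium-4 nucleus)
ELECTRON_MASS = 9.1093837015e-31     # kg (beta particle)


def cross(u, v):
    """Cross product of two 3-vectors."""
    return (u[1] * v[2] - u[2] * v[1],
            u[2] * v[0] - u[0] * v[2],
            u[0] * v[1] - u[1] * v[0])


def magnetic_force(charge, velocity, field):
    """Force from a magnetic field alone: F = q (v x B)."""
    return tuple(charge * c for c in cross(velocity, field))


def orbit_radius(mass, charge, speed, field_strength):
    """Radius of circular motion, r = gamma m v / (|q| B)."""
    gamma = 1.0 / math.sqrt(1.0 - (speed / SPEED_OF_LIGHT) ** 2)
    return gamma * mass * speed / (abs(charge) * field_strength)


B = (0.0, 0.0, 1.0)             # 1 tesla along z (hypothetical)
alpha_v, beta_v = 1.5e7, 1.0e8  # hypothetical speeds along x, m/s

alpha_force = magnetic_force(2 * ELEMENTARY_CHARGE, (alpha_v, 0.0, 0.0), B)
beta_force = magnetic_force(-ELEMENTARY_CHARGE, (beta_v, 0.0, 0.0), B)

alpha_r = orbit_radius(ALPHA_MASS, 2 * ELEMENTARY_CHARGE, alpha_v, 1.0)
beta_r = orbit_radius(ELECTRON_MASS, ELEMENTARY_CHARGE, beta_v, 1.0)

print("alpha pushed toward", "+y" if alpha_force[1] > 0 else "-y")
print("beta pushed toward", "+y" if beta_force[1] > 0 else "-y")
print(f"radii: alpha {alpha_r:.3f} m, beta {beta_r:.5f} m")
```

The forces point in opposite directions, and the alpha particle's orbit is hundreds of times wider, one way to see why alpha particles are far harder to bend than beta particles in a field of the same strength.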

In 1898, in the midst of his studies of radioactive materials, Rutherford made a career move shaped in part by a matter of the heart. He was engaged to Mary Newton, his sweetheart from his student days in Christchurch, and married life required a decent salary, he reasoned. So rather than stay in England, he accepted an offer of a professorship at McGill University in Montreal, Canada, that paid five hundred British pounds per year—respectable at the time and equivalent to about fifty thousand dollars today. In 1900 he headed briefly back to New Zealand for the wedding, and the couple sailed to the colder clime, where Rutherford resumed his investigations.

At McGill, Rutherford focused on trying to uncloak alpha particles and reveal their true identities. Replicating Thomson’s charge-to-mass ratio experiment with alpha particles instead of electrons, he measured their charge-to-mass ratio—curiously finding it consistent with that of helium ions. He began to suspect that the most massive products of radioactive decay were just mild-mannered helium in disguise.

Just when Rutherford could use some help in unraveling atomic mysteries, a new sleuth arrived in town. In 1900, Frederick Soddy, a chemist from Sussex, England, was appointed to a position at McGill. Learning of Rutherford’s work, he offered his expertise, and together they set out to understand the process of radioactivity. They developed a hypothesis that radioactive atoms, such as uranium, radium, and thorium, disintegrated into simpler atoms associated with other chemical elements by releasing alpha particles. Interested in the history of alchemy, whose medieval practitioners tried to transform base metals into gold, Soddy recognized that radioactive transmutation could lead to the fulfillment of that dream.

In 1903, soon after Rutherford announced their theory of transmutation, Soddy decided to join forces with a noted expert in helium and other inert gases (neon and so forth), chemist William Ramsay of University College, London. Ramsay and Soddy conducted careful experiments in which they collected the alpha particles produced by decaying radium in a glass tube. Then, after the particles accumulated into a gas, they studied its spectral lines and found them identical to those of helium. Spectral lines are bands of specific frequencies (in the visible spectrum, particular colors) that make up the characteristic signature of an element when it either emits or absorbs light. For the emission spectrum of helium, certain violet, yellow, green, blue-green, and red lines always appear, along with two distinct shades of blue. Ramsay and Soddy found this fingerprint in what they observed, offering proof that alpha particles constitute ionized helium.

Soddy would later coin the term “isotope” to describe the two or more distinct forms in which an element can exist, each with a different atomic weight. For example, deuterium, or heavy hydrogen, is chemically identical to the standard form, but has approximately twice its atomic weight. Tritium, a radioactive isotope of hydrogen, has about three times the weight of the ordinary variety. It decays into helium-3, a lighter isotope of common helium. In what he called the Displacement Law, Soddy demonstrated how alpha decay causes elements to drop down two spaces on the periodic table, as if sliding backward during a game of snakes and ladders. Beta decay, in contrast, causes a move one space forward, to one of the isotopes of the element in the slot ahead. That’s exactly what happens when tritium turns into helium-3 and moves forward in the periodic table.
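Soddy's bookkeeping is simple enough to sketch as code. This is a minimal illustration in modern terms. Each nuclide is tracked by its atomic number Z (its periodic-table slot) and mass number A. An alpha particle carries away 2 units of charge and 4 of mass, while beta decay raises Z by one and leaves A unchanged. The `Nuclide` type and the short symbol table are hypothetical helpers that cover only the elements used here.

```python
from dataclasses import dataclass

# Hypothetical lookup table: only the elements used in the examples below.
SYMBOLS = {1: "H", 2: "He", 86: "Rn", 88: "Ra", 90: "Th", 91: "Pa", 92: "U"}


@dataclass(frozen=True)
class Nuclide:
    z: int  # atomic number: slot in the periodic table
    a: int  # mass number: protons plus neutrons

    @property
    def name(self) -> str:
        return f"{SYMBOLS.get(self.z, '?')}-{self.a}"


def alpha_decay(parent: Nuclide) -> Nuclide:
    """Alpha decay: two slots back in the table, four mass units lighter."""
    return Nuclide(parent.z - 2, parent.a - 4)


def beta_decay(parent: Nuclide) -> Nuclide:
    """Beta decay: one slot forward, mass number unchanged."""
    return Nuclide(parent.z + 1, parent.a)


tritium = Nuclide(1, 3)
radium = Nuclide(88, 226)
uranium = Nuclide(92, 238)

print(tritium.name, "->", beta_decay(tritium).name)  # H-3 -> He-3
print(radium.name, "->", alpha_decay(radium).name)   # Ra-226 -> Rn-222
thorium = alpha_decay(uranium)
print(uranium.name, "->", thorium.name, "->", beta_decay(thorium).name)  # U-238 -> Th-234 -> Pa-234
```

Chaining the two functions reproduces the start of a decay series: one step back for the alpha, then one step forward for the beta.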

Suppose you encounter a strange kind of marble dispenser with its contents shielded from view. Sometimes blue marbles pop out of the machine and it flashes once. Sometimes red marbles pop out and it flashes twice. What would you think is inside? You might guess that the interior is an even mixture of red and blue marbles, distributed hither and thither like plums in a pudding.

By 1904, physicists knew that atoms transmuted by producing emissions of different charges and masses, but they didn’t know how all of these fit together. Thomson decided to venture a guess that positive and negative particles were distributed evenly—with the latter being much smaller and thereby freer to move. He hoped that the proof of his “plum pudding model” would be in the testing, but alas it would turn out to be plumb wrong—disproven, as fate would have it, by his former protégé from New Zealand.

The next stage of Rutherford’s life was arguably his most productive. In 1907, the University of Manchester, the northern English setting of Dalton’s explorations, appointed him to a new position as chair of physics. Manchester’s gain was a huge loss for McGill. By then, Rutherford had become a commanding presence, “riding the crest of a wave” of his own making—as he once boasted to his biographer (and former student) Arthur Eve.7 As helmsman, he ran a tight ship—recruiting some of the best young researchers, setting them challenging problems, and dismissing those who fell short. With a booming voice and a propensity toward fits of temper and yelling at equipment during stressful moments, the mustached, pipe-smoking professor could be an intimidating commander indeed. Moments of stress and anger would quickly pass, however, like the blazing sun behind calming puffy clouds, and no one could be friendlier, warmer, or more supportive.

Chaim Weizmann, a Manchester biochemist who would later become the first president of Israel, befriended Rutherford at the time and described him as:

Youthful, energetic, boisterous; he suggested everything but the scientist. He talked readily and vigorously on every subject under the sun without knowing anything about it. Going down to the refectory for lunch I would hear the loud, friendly voice rolling up the corridor… . He was a kindly person, but he did not suffer fools gladly.8

Comparing Rutherford to Einstein, whom he also knew well, Weizmann recalled:

As scientists the two men were strongly contrasting types—Einstein all calculation, Rutherford all experiment. The personal contrast was not less remarkable: Einstein looks like an etherealized body, Rutherford looked like a big, healthy, boisterous New Zealander—which is exactly what he was. But there is no doubt that as an experimenter Rutherford was a genius, one of the greatest. He worked by intuition and whatever he touched turned to gold.9

At Manchester, Rutherford had meaty goals: using alpha particles to crack open the atom and reveal its contents. Alpha particles, he realized, would be large enough to make ideal probes of deep atomic structure. In particular, he wanted to test Thomson’s plum pudding model and see if each atom’s interior was an evenly distributed mix of large positive and small negative chunks. To carry out his project, he was lucky enough to snag two prize catches: a precious supply of radium (for which he had vied with Ramsay) and the valuable services of German physicist Hans Geiger, who had worked for the former physics chair. Rutherford assigned Geiger the task of developing a reliable way of detecting alpha particles.

The method Geiger pioneered—counting sparks that pass between electrodes on a metal tube when incoming alpha particles ionize a gas sealed inside, making it a conductor—became the prototype for what would become his most famous invention: the Geiger counter. Geiger counters rely on the principle that electricity travels around closed loops. Each time a sample emits an alpha particle, electricity rounds the circuit between the electrodes and the conducting gas—producing an audible click. Despite the utility of Geiger’s innovation, Rutherford usually relied on a second means of detection: using a screen coated with zinc sulfide, a material that lights up when alpha particles hit it through a process known as scintillation.

In 1908, Rutherford took a break from his research to collect the Nobel Prize in Chemistry, awarded for his investigations into the disintegration of the elements and the chemistry of radioactive substances. He didn’t stay away from the lab for long. Equipped with reliable detection techniques, he developed another project involving Geiger, in conjunction with an extraordinary undergraduate, Ernest Marsden.

Just twenty years old at the time (1909), Marsden had a background curiously parallel to Rutherford’s. Like Rutherford, Marsden came from humble roots, with a father in the textile industry. Marsden’s dad was a cotton weaver from Lancashire, the local English county. Rutherford started his life in New Zealand and ended up in England; for Marsden it would be the reverse. And each performed vital experimental research while still in their undergraduate years. In Marsden’s case, he was just completing his course of study when asked to contribute his talents.

Rutherford recalled the simple query that led to Geiger and Marsden’s monumental collaboration. “One day Geiger came to me and said, ‘Don’t you think that young Marsden … ought to begin a small research?’ Now I had thought that too, so I said, ‘Why not let him see if any alpha particles can be scattered through a large angle?’ ”10

Legendary for posing just the right questions at precisely the right time, Rutherford had a hunch that the possibility of alpha particles scattering backward from a metal would reveal something about the material. Although he was curious to see what would happen, he didn’t necessarily expect a positive outcome. But given even the slightest chance of the particles bouncing off a hidden something, he felt it would be a sin not to try.

For certain types of sensitive measurements, particle physicists have to be like nocturnal cats on the prowl; they need to see well in the dark to spot the subtle signs of their prey. That’s an area in which younger scientists can have an advantage—not just with better vision but also with patience. No wonder Rutherford and Geiger recruited a twenty-year-old for the alpha particle scattering experiment. Marsden was instructed to cover the windows, making the lab as dark as possible, and then to sit and wait until his pupils were dilated enough to sense every errant speck of light. Only then was he supposed to start taking readings.

Placing plates of various thicknesses and types of metal (lead, platinum, and so forth) near a glass tube filled with a radium compound, Marsden waited for alpha particles to emerge from the tube, hit the plates, and either pass through or bounce off. A zinc sulfide screen, acting as a scintillator, was positioned to record the rates and angles of any alpha particles that happened to reflect. After testing each kind of metal, recording the constellations of sparks his sensitive eyes could see, he shared the data with Geiger. They soon realized that thin sheets of gold offered the highest rate of bounces. Even then, the vast majority of alpha particles passed right through the foil as if it were the skin of a ghost. In the rare cases of reflection, the majority took place at very large angles (ninety degrees or higher), indicating that something hard and focused within the gold was bouncing the alpha particles back.

Glowing with excitement, Geiger ran up to Rutherford. “We have been able to get some of the alpha particles coming backwards!” he reported. Rutherford was absolutely delighted.

“It was quite the most incredible event that has ever happened to me in my life,” Rutherford recalled. “It was almost as incredible as if you fired a 15-inch shell at a piece of tissue paper and it came back and hit you.”11

If Thomson’s plum pudding model were correct, then alpha particles impacting a gold foil would be only modestly diverted by the gelatinous mixture of charges within the gold atoms, deflecting by small angles and almost never bouncing back. But that’s not what Geiger and Marsden found. Like champion sluggers in a baseball game, something within the atoms slammed back the projectiles at large angles only if they were within certain narrow strike zones; otherwise, they continued straight through.

In 1911, Rutherford decided to publish his own alternative to the Thomson model. “I think I can devise an atom much superior to J. J.’s,” he informed a colleague.12 His groundbreaking paper introduced the idea that each atom has a nucleus—a tiny center packed with positive charge and the bulk of the atom’s mass. When the alpha particles hit the gold, that’s what batted them back, but only in the unlikely event that they were right on target.
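The "narrow strike zone" can be made quantitative with the standard relation for one point charge scattered by another, the kind of analysis behind Rutherford's 1911 paper. A particle aimed to miss the nucleus by an impact parameter b is deflected by an angle θ with tan(θ/2) = d/(2b), where d is the distance of closest approach in a head-on collision. The sketch below assumes an alpha-particle energy of 7.7 MeV and the approximate constant ke² ≈ 1.44 MeV·fm. It also ignores the recoil of the much heavier gold nucleus.

```python
import math

COULOMB_MEV_FM = 1.44  # k e^2 in MeV * femtometres (approximate)


def head_on_distance_fm(z_projectile, z_target, kinetic_mev):
    """Closest approach in a head-on collision: kinetic energy = Coulomb energy."""
    return COULOMB_MEV_FM * z_projectile * z_target / kinetic_mev


def deflection_deg(impact_fm, d_fm):
    """Point-charge Coulomb scattering: tan(theta / 2) = d / (2 b)."""
    return math.degrees(2.0 * math.atan(d_fm / (2.0 * impact_fm)))


# Alpha particle (Z = 2) on gold (Z = 79); 7.7 MeV is an assumed energy.
d = head_on_distance_fm(2, 79, 7.7)
print(f"head-on closest approach: {d:.1f} fm")

for b in (10_000.0, 1_000.0, 100.0, d / 2, 5.0):
    print(f"impact parameter {b:8.1f} fm -> deflection {deflection_deg(b, d):6.1f} degrees")
```

Only impact parameters below d/2, about 15 fm here, give deflections of 90 degrees or more. A gold atom is roughly ten thousand times wider than that, so such bounces are rare.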

Atoms are almost completely empty space. The nuclei constitute but a minuscule portion of their volume—the rest is unfathomable nothingness. If an atom were the size of Earth, then a cross-section of its nucleus would be roughly the size of a football stadium. Rutherford colorfully described striking a nuclear target as akin to locating a gnat in the Albert Hall, a huge performance venue in London.
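The Earth-sized-atom comparison is easy to check with rough numbers. The sketch assumes a hydrogen atom, with its radius taken as the Bohr radius (about 5.29 × 10^-11 m), and a proton radius of roughly 0.84 × 10^-15 m. Other atoms give somewhat different figures.

```python
EARTH_DIAMETER_M = 12_742_000.0  # mean diameter of Earth
ATOM_RADIUS_M = 5.29e-11         # assumed: Bohr radius of hydrogen
NUCLEUS_RADIUS_M = 0.84e-15      # assumed: approximate proton radius

# Blow the atom up until its diameter matches Earth's.
scale = EARTH_DIAMETER_M / (2 * ATOM_RADIUS_M)
scaled_nucleus_diameter_m = 2 * NUCLEUS_RADIUS_M * scale

# Fraction of the atom's volume occupied by the nucleus.
volume_fraction = (NUCLEUS_RADIUS_M / ATOM_RADIUS_M) ** 3

print(f"scaled nucleus diameter: {scaled_nucleus_diameter_m:.0f} m")
print(f"nucleus volume fraction: {volume_fraction:.1e}")
```

The scaled nucleus comes out about 200 metres across, roughly stadium-sized. By volume it fills only a few parts in a quadrillion of the atom.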

Despite their minuteness, nuclei play a critical role in determining the properties of atoms. As Rutherford surmised, the amount of positive charge in the nucleus corresponds to its place in the periodic table—called its atomic number. Starting with one for hydrogen, each atom’s nucleus houses the positive equivalent of the charge of the electron multiplied by its atomic number. For example, gold, the seventy-ninth element, has a nucleus charged to the positive equivalent of seventy-nine electrons. Balancing out the central positive charge is the same number of negatively charged electrons—rendering the atom electrically neutral unless it is ionized. These electrons, Rutherford asserted, are scattered in a sphere uniformly distributed around the center.

Rutherford’s model was a great conceptual leap, but it left certain questions unanswered. Although it brilliantly explained the Geiger-Marsden scattering results, it didn’t address many aspects of what was known about the atom at the time. For example, it didn’t account for why the spectral lines of hydrogen, helium, and other atoms have distinct patterns. If electrons in the atom are evenly spaced, how come atomic light spectra are not? And how did Planck’s quantum concept and Einstein’s photoelectric effect, showing how electrons can exchange energy via discrete bundles of light, fit into the picture?

Fortunately, in the spring of 1912, Rutherford’s department welcomed a young visitor from Denmark who would help resolve these issues. Niels Bohr, a freshly minted Ph.D. from Copenhagen with an athletic build and a long face with prominent jowls, arrived at Manchester after half a year with Thomson in Cambridge. Bohr had written to Rutherford asking if he could spend some time learning about radioactivity. He had learned from Thomson about Rutherford’s nuclear model and was intrigued about exploring its implications. While performing some calculations about the impact of alpha particles on atoms, Bohr decided to introduce the notion that electrons vibrate with only particular values of energy, multiples of Planck’s constant times their frequency of vibration. In a stroke, he forever painted atoms with the variegated coating of quantum theory.

After returning to Copenhagen in the summer of that year, Bohr continued his studies of atomic structure, focusing on the question of why atoms don’t spontaneously collapse. Something must prevent the negative electrons from plunging toward the positive nucleus, like a meteorite hurtling toward Earth. In Newtonian physics, a conserved property called angular momentum characterizes the tendency of rotating objects to maintain the same rate of turning. Specifically, mass times velocity times orbital radius tends to remain constant—the reason why ballet dancers twirl faster when they tuck their arms closer to their bodies. Bohr noticed that by requiring an electron’s angular momentum to be a multiple of Planck’s constant divided by twice the number pi (3.14159…), he forced it to maintain specific orbits and energies. That is, electrons could reside only at particular distances from an atom’s nucleus, in discrete levels called quantum states.
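Bohr's rule can be turned into numbers with two ingredients. The first is the quantization condition m v r = n h/(2π) = nħ. The second is the requirement that the Coulomb attraction supply the centripetal force, m v²/r = k e²/r². The sketch below solves these for hydrogen, treating the nucleus as infinitely heavy, an assumption that shifts the results by about 0.05 percent.

```python
import math

HBAR = 1.054571817e-34               # reduced Planck constant h / (2 pi), J*s
EPSILON_0 = 8.8541878128e-12         # vacuum permittivity, F/m
ELECTRON_MASS = 9.1093837015e-31     # kg
ELEMENTARY_CHARGE = 1.602176634e-19  # C
K = 1 / (4 * math.pi * EPSILON_0)    # Coulomb constant


def bohr_orbit(n):
    """Radius (m), speed (m/s) and energy (eV) of hydrogen's n-th Bohr orbit.

    Combines m v r = n * hbar with m v**2 / r = K e**2 / r**2,
    assuming an infinitely heavy nucleus.
    """
    speed = K * ELEMENTARY_CHARGE ** 2 / (n * HBAR)
    radius = n * HBAR / (ELECTRON_MASS * speed)  # from m v r = n * hbar
    kinetic = 0.5 * ELECTRON_MASS * speed ** 2
    potential = -K * ELEMENTARY_CHARGE ** 2 / radius
    return radius, speed, (kinetic + potential) / ELEMENTARY_CHARGE


orbits = {n: bohr_orbit(n) for n in (1, 2, 3)}
for n, (r, v, e) in orbits.items():
    print(f"n={n}: r = {r:.3e} m, v = {v:.3e} m/s, E = {e:.2f} eV")
```

The radii grow as n² and the energies shrink in magnitude as 1/n². This is the pattern that underlies hydrogen's spectral lines.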

Bohr’s insight led to enormous strides in tackling the question of why atomic spectral lines are arranged in certain patterns. In his model of the atom, electrons neither gain nor lose energy if they maintain the same quantum state—a situation akin to an idealized, absolutely stable planetary orbit. Therefore, superficially, Bohr’s picture treats electrons like little “Mercurys,” “Venuses,” and so forth, revolving around a nuclear “Sun.” Instead of gravity acting as the central force, the electrostatic attraction between negative electrons and the positive nucleus does the job. At that point, however, the solar system analogy ends, and Bohr’s theory veers on a radically different course. Unlike planets, electrons sometimes “jump” from one quantum state to another, either toward or away from the nucleus. These jumps are instant and spontaneous, resulting in either the loss or gain of a quantum of energy, depending on whether the motion is to a lower or higher energy level. In line with the photoelectric effect, these energy quanta, later called photons or light particles, have frequencies equal to the energy transferred divided by Planck’s constant. Thus, the particular lines of color in the emission spectra of hydrogen and other atoms are due to the luminous ballast flung away when electrons enact specific dives—generally the longer the dive, the greater the frequency. Indeed, Bohr’s calculations matched up perfectly with the known formulas for the spacings of hydrogen spectral lines—a stunning success for his model.
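The link between jumps and colors can be checked using the energy levels of Bohr's hydrogen atom, E_n = -13.6 eV/n², together with the rule that a photon's frequency is the energy released divided by Planck's constant. The sketch below computes the visible lines produced by jumps that end on level 2 (the Balmer series). Like Bohr's simplest model, it ignores the motion of the nucleus, so the results differ from measured wavelengths in the fourth significant figure.

```python
PLANCK_EV_S = 4.135667696e-15  # Planck's constant in eV * s
SPEED_OF_LIGHT = 2.99792458e8  # m/s
RYDBERG_EV = 13.605693         # hydrogen binding energy, infinitely heavy nucleus


def level_ev(n):
    """Bohr energy of level n, in electron volts."""
    return -RYDBERG_EV / n ** 2


def photon_from_jump(n_upper, n_lower):
    """Frequency (Hz) and wavelength (nm) of the photon emitted in a downward jump."""
    energy = level_ev(n_upper) - level_ev(n_lower)  # energy released, eV
    frequency = energy / PLANCK_EV_S
    wavelength_nm = SPEED_OF_LIGHT / frequency * 1e9
    return frequency, wavelength_nm


balmer = {n: photon_from_jump(n, 2) for n in range(3, 7)}
for n, (freq, wl) in balmer.items():
    print(f"{n} -> 2: {freq:.3e} Hz, {wl:.1f} nm")
```

The printed wavelengths fall near 656, 486, 434, and 410 nm, the red, blue-green, and violet lines of hydrogen's visible spectrum. Longer dives (larger n) give higher frequencies.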

In the winter of 1913, Bohr wrote to Rutherford with his results and was disappointed to receive a mixed response. Ever the practical thinker, Rutherford found what he saw as a major flaw. He informed Bohr, “There appears to me one grave difficulty in your hypothesis, which I have no doubt you fully realize, namely how does an electron decide what frequency it is going to vibrate at when it passes from one stationary state to another? It seems to me that you have to assume that the electron knows beforehand where it is going to stop.”13

With his perceptive comment, Rutherford identified one of the principal quandaries involving Bohr’s atomic model. How can you predict when an electron will abandon the tranquillity of the state it is in and venture to a new one? How can you determine exactly in which state the electron ends up? Bohr’s model couldn’t say—and Rutherford was bothered.

Only in 1925 would Rutherford’s critique be addressed, and even then the answer was most perplexing. Bohr, by that time, had become the head of his own institute for theoretical physics (now the Niels Bohr Institute) in Copenhagen, where he hosted a stellar array of young researchers. One of the very brightest, German physicist Werner Heisenberg, who studied in Munich and Göttingen, developed a brilliant alternative description of electrons in the atom that, although it didn’t explain why electrons jumped, could accurately calculate the chances of their doing so.

Heisenberg’s “matrix mechanics” introduced a new abstraction to physics that confused many old-timers and revolted some of the prominent physicists who understood its implications—most famously Einstein, who argued vehemently against it. It draped a veil of uncertainty around the atom—and indeed all of nature on that scale or smaller—demonstrating that not all physical properties can be glimpsed at once.

Like many a rebellious youth, Heisenberg began his line of reasoning by abandoning many of the long-held suppositions of his elders. Instead of treating the electron as an actual orbiting particle, he reduced it to a mere abstraction: a mathematical state. To represent position, momentum (mass times velocity), and other measurable physical properties, he multiplied the state by different quantities. His mentor, Göttingen physicist Max Born, recognized that these quantities could be encoded in arrays called matrices—hence the term “matrix mechanics,” the first complete formulation of what became known as quantum mechanics. Equipped with powerful new mathematical tools, Heisenberg felt that he could explore the very depths of the atom. As he recalled, “I had the feeling that, through the surface of atomic phenomena, I was looking at a strangely beautiful interior, and felt almost giddy at the thought that I now had to probe this wealth of mathematical structures nature had so generously spread before me.”14

In classical Newtonian physics, both position and momentum can be measured at the same time. Not so in quantum mechanics, as Heisenberg cleverly demonstrated. If position and momentum matrices are both applied to a state, the order of their application makes a profound difference. Applying position first and then momentum generally produces a different result than applying momentum first and then position. The situation in which the order of operations matters is called noncommutative, in contrast with ordinary addition and multiplication, which are commutative. While four times two is the same as two times four, position times momentum is not the same as momentum times position. This noncommutativity renders it impossible to know both quantities simultaneously with perfect certainty, a state of affairs Heisenberg later formalized as the uncertainty principle.
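To see noncommutativity concretely, the sketch below borrows a standard textbook example (our choice, not a reconstruction of Heisenberg’s own calculation): the position and momentum matrices of a simple harmonic oscillator, such as a mass on a spring. It assumes units in which ħ, the mass, and the oscillation frequency all equal 1, and it truncates the infinite matrices to a 6 × 6 size so they fit in a computer. It then applies the two matrices to the oscillator’s lowest-energy state in both orders.

```python
import math


def matmul(A, B):
    """Product of two square matrices stored as lists of rows."""
    n = len(A)
    return [[sum(A[i][k] * B[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def matvec(A, v):
    """Apply matrix A to the column vector v."""
    return [sum(A[i][k] * v[k] for k in range(len(v))) for i in range(len(A))]


def oscillator_x_p(size):
    """Truncated position (X) and momentum (P) matrices of a harmonic
    oscillator, in units where hbar = mass = angular frequency = 1."""
    # Lowering operator a: a|n> = sqrt(n)|n-1>
    a = [[0.0] * size for _ in range(size)]
    for n in range(1, size):
        a[n - 1][n] = math.sqrt(n)
    # Raising operator: the transpose of a (a has only real entries)
    a_dag = [[a[j][i] for j in range(size)] for i in range(size)]
    s = 1 / math.sqrt(2)
    X = [[s * (a[i][j] + a_dag[i][j]) for j in range(size)] for i in range(size)]
    P = [[1j * s * (a_dag[i][j] - a[i][j]) for j in range(size)] for i in range(size)]
    return X, P


SIZE = 6
X, P = oscillator_x_p(SIZE)
ground = [1.0] + [0.0] * (SIZE - 1)          # lowest-energy state

pos_then_mom = matvec(P, matvec(X, ground))  # apply position first, then momentum
mom_then_pos = matvec(X, matvec(P, ground))  # apply momentum first, then position
difference = [u - v for u, v in zip(mom_then_pos, pos_then_mom)]
print("size of each component of the difference:", [round(abs(d), 9) for d in difference])

# Full commutator XP - PX, entry by entry
commutator = [[xp - px for xp, px in zip(row_xp, row_px)]
              for row_xp, row_px in zip(matmul(X, P), matmul(P, X))]
print("diagonal of XP - PX (imaginary parts):",
      [round(commutator[i][i].imag, 9) for i in range(SIZE)])
```

Worked out by hand, XP − PX equals i times the identity matrix (iħ in ordinary units), so the two orderings differ by exactly i in the first component and agree everywhere else. The one exception in the commutator is its last diagonal entry, which is an artifact of cutting the infinite matrices down to a finite size.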

In quantum mechanics, Heisenberg’s uncertainty principle mandates, for example, that when the position of an electron is ascertained, its momentum goes all fuzzy. Because momentum is proportional to speed, an electron can’t tell you where it is and how fast it’s going at the same time. Electrons are mercurial creatures indeed, not Mercurial—more like elusive quicksilver than an orbiting planet.

Despite the inherent uncertainty that Heisenberg revealed, quantum mechanics offers accurate predictions of probabilities. So while it doesn’t guarantee that a bet will pay off, at least it tells you the odds. For example, it tells you the chances that an electron will plunge from any given state to another. If the chances are zero, then you know that such a transition is forbidden. Otherwise, it is allowed and you can expect a line in the atom’s spectrum with corresponding frequency.

In 1926, Austrian physicist Erwin Schrödinger proposed a more tangible alternative version of quantum mechanics, called wave mechanics. In line with a theory proposed by French physicist Louis de Broglie, Schrödinger’s version imagines electrons as “matter waves”—akin to light waves, but representing material particles rather than electromagnetic radiation. These wave functions respond to physical forces in a manner described by a relationship called Schrödinger’s equation. Subject to the electrostatic attraction of an atomic nucleus, for example, wave functions representing electrons form “clouds” of various shapes, energies, and average distances from the center of the atom. These clouds are not actual arrangements of material, but rather distributions of the likelihood of an electron’s being at different points in space.

We can think of these wave formations as akin to the vibrations of a guitar string. Because it is attached at both ends, a plucked guitar string produces what is called a standing wave. Unlike a rolling ocean wave heading toward a beach, a standing wave is constrained to move only up and down. Within such restrictions it can have a number of peaks—one, two, or more—as long as that number is a whole number, not a fraction. Wave mechanics identifies the principal quantum number of an electron with the number of peaks of its wave function, offering a natural explanation for why certain states exist and not others.
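The guitar-string analogy can be checked numerically. The sketch below assumes the simplest model of a string of length L fixed at both ends, whose standing waves have the shape sin(nπx/L), and counts crests and troughs alike as peaks. It confirms that whole-number values of n fit between the fixed ends and produce exactly n peaks, while a fractional value does not fit. It models only the one-dimensional string of the analogy, not an actual three-dimensional electron cloud, and the function names are hypothetical.

```python
import math


def standing_wave(n, x, length=1.0):
    """Displacement shape sin(n*pi*x/L) of a string fixed at x = 0 and x = L."""
    return math.sin(n * math.pi * x / length)


def fits_string(n, length=1.0, tol=1e-9):
    """True if the wave is (numerically) zero at both fixed ends."""
    return (abs(standing_wave(n, 0.0, length)) < tol
            and abs(standing_wave(n, length, length)) < tol)


def count_peaks(n, length=1.0, samples=10_000):
    """Count the peaks (points of largest displacement, up or down) along the string."""
    ys = [abs(standing_wave(n, length * i / samples, length)) for i in range(samples + 1)]
    # A peak is a sample larger than its left neighbour and at least as large as its right one
    return sum(1 for i in range(1, samples) if ys[i - 1] < ys[i] >= ys[i + 1])


for n in (1, 2, 3, 4):
    print(f"n = {n}: fits between fixed ends? {fits_string(n)}; peaks: {count_peaks(n)}")
print("n = 2.5: fits between fixed ends?", fits_string(2.5))
```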

Much to Heisenberg’s chagrin, many of his colleagues favored Schrödinger’s depiction over his—perhaps because they were accustomed to models of sound waves, light waves, and kindred phenomena. Matrices seemed too abstract. Fortunately, as the sharp-witted Viennese physicist Wolfgang Pauli proved, Heisenberg’s and Schrödinger’s descriptions are completely equivalent. Like digital and analog clocks, they’re equally trustworthy instruments and can be relied on according to taste.

Pauli offered his own critical contribution to quantum mechanics: the concept that no two electrons can be in exactly the same quantum state. His “exclusion principle” inspired two young Dutch researchers, Samuel Goudsmit and George Uhlenbeck, to propose that electrons can exist in two orientations, called spin. Contrary to its name, spin has nothing to do with actual spinning, but rather with an electron’s magnetic properties. If we imagine placing an electron in a vertical magnetic field (for instance, directly above a magnetized coil of wire), then the electron’s own minimagnet could be aligned in the same direction as the external field, called “spin-up,” or in the opposite direction, called “spin-down.”

Ambidextrous by nature, an electron normally consists of an equal mixture of spin-up and spin-down states. How can a single particle have two opposite qualities at once? In mundane experience, compass needles can’t point north and south at the same time, but the quantum world defies conventional explanation. Until an electron’s spin is measured, quantum uncertainty dictates that it remains ambiguous. Only after a researcher switches on an external magnetic field does the electron settle into a spin-up or spin-down orientation—a process known as wave function collapse.
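The “equal mixture” and its collapse can be simulated. This sketch assumes the standard quantum rule (the Born rule, named for Max Born) that the probability of each measurement outcome is the squared magnitude of that outcome’s amplitude. The amplitudes of 1/√2 for an equal mixture, the function names, and the random seed are illustrative choices. Random numbers here stand in for nature’s unpredictability; they are not a model of how collapse physically happens.

```python
import math
import random


def spin_probabilities(amp_up, amp_down):
    """Probabilities of measuring spin-up or spin-down: squared magnitudes, normalized."""
    norm = abs(amp_up) ** 2 + abs(amp_down) ** 2
    return abs(amp_up) ** 2 / norm, abs(amp_down) ** 2 / norm


def measure_spin(amp_up, amp_down, rng):
    """Simulate one measurement: the mixed state 'collapses' to up or down at random."""
    p_up, _ = spin_probabilities(amp_up, amp_down)
    return "up" if rng.random() < p_up else "down"


equal_mix = (1 / math.sqrt(2), 1 / math.sqrt(2))  # equal parts spin-up and spin-down
rng = random.Random(1911)                         # fixed seed so the run is repeatable
results = [measure_spin(*equal_mix, rng) for _ in range(10_000)]
print("fraction spin-up:", results.count("up") / len(results))
```

Each individual outcome is unpredictable, yet over many electrons roughly half come out spin-up and half spin-down, just as the probabilities dictate.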

If two electrons are paired and one is determined to be spin-up, the other is automatically found to be spin-down. This correlation holds even if the electrons are widely separated—an intuition-defying effect Einstein called “spooky action at a distance.” Because of such unintuitive connections, Einstein thought a deeper, more straightforward theory would someday replace quantum mechanics.

Bohr, on the other hand, embraced contradictions. He reveled in unions of opposites—such as the notion that electrons are waves and particles at the same time, which he called the principle of complementarity. Prone to enigmatic statements, he once said, “A great truth is a truth whose opposite is also a great truth.” Appropriately, right in the center of his coat of arms he placed the yin-yang symbol of Taoist contrasts.15

Despite their philosophical differences, Einstein shared with Bohr the realization that quantum mechanics matches up incredibly well with experimental data. One sign of Einstein’s recognition would be his nomination of Heisenberg and Schrödinger for the Nobel Prize in Physics, which Heisenberg was awarded in 1932 and Schrödinger shared with British quantum physicist Paul Dirac in 1933. (Einstein and Bohr were awardees in 1921 and 1922, respectively.)

Rutherford would remain cautious about quantum theory, continuing to focus his attention mainly on experimental explorations of the atomic nucleus. In 1919, Thomson stepped down as Cavendish professor and director of Cavendish Laboratory, and Rutherford was appointed to that venerable position. During his final year at Manchester and his initial years at Cambridge, he focused on bombarding various nuclei with fast-moving alpha particles. Marsden had noticed that where alpha particles collide with hydrogen gas, even faster, more penetrating particles emerge. These were the nuclei of hydrogen atoms. Rutherford repeated Marsden’s experiment, replacing the hydrogen with nitrogen, and much to his astonishment, hydrogen nuclei emerged from that gas too. When these hydrogen nuclei struck a fluorescent screen, the scintillations they produced were so faint and tiny that they could be seen only through a microscope. Yet they offered important evidence that the nitrogen atoms were releasing particles from their cores. Just as the discovery of radioactivity showed that atoms could transmute on their own, Rutherford’s bombardment experiments demonstrated that atoms could be altered artificially as well.
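The bookkeeping behind this artificial transmutation can be written out explicitly. The sketch assumes, as modern nuclear physics confirms, that electric charge (counted in protons) and the total number of nuclear particles are both conserved in the collision; subtracting the ejected hydrogen nucleus from the alpha particle plus the nitrogen nucleus then identifies what is left behind. The tuple layout and the small element table are hypothetical conveniences for illustration.

```python
# Each nucleus is a tuple: (label, protons Z, total protons + neutrons A)
ALPHA = ("He-4", 2, 4)
NITROGEN_14 = ("N-14", 7, 14)
HYDROGEN_NUCLEUS = ("H-1", 1, 1)

# Hypothetical lookup table covering only the light elements used here
ELEMENT_SYMBOLS = {1: "H", 2: "He", 6: "C", 7: "N", 8: "O", 9: "F"}


def residual_nucleus(projectile, target, ejected):
    """Nucleus left behind when `projectile` strikes `target` and `ejected` flies off,
    assuming proton number and total particle number are both conserved."""
    z = projectile[1] + target[1] - ejected[1]
    a = projectile[2] + target[2] - ejected[2]
    return (f"{ELEMENT_SYMBOLS[z]}-{a}", z, a)


print(residual_nucleus(ALPHA, NITROGEN_14, HYDROGEN_NUCLEUS))  # ('O-17', 8, 17)
```

The leftover nucleus works out to oxygen-17: in this reaction, nitrogen is transmuted into oxygen.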

Rutherford coined a name for the positively charged particles found in all nuclei: protons. Some researchers wanted to call these “positive electrons,” but he objected, arguing that protons are far more massive than electrons and have little in common with them. When an actual positive electron was discovered, following predictions by Dirac, it ended up being called the positron. Positrons provided the first example of what is known as “antimatter”: similar to ordinary matter but oppositely charged. Protons, on the other hand, are a key constituent of conventional matter.

A new type of particle detector, called the cloud chamber, aided Rutherford and his group in understanding the paths of particles, such as protons, after they are emitted from target nuclei. While scintillators and Geiger counters could measure the rate of emitted particles, cloud chambers could also capture their behavior as they move through space, leading to an improved understanding of their properties.

Cloud chambers were invented by Scottish physicist Charles Wilson, who noticed during a hike up the mountain Ben Nevis that moist air tends to condense into water droplets in the presence of charged particles such as ions. The charges attract the water molecules and pull them out of the air, offering a vapor trail of electrically active regions. Realizing that the same principle could be used to detect unseen particles, Wilson designed a closed chamber filled with cold, humid air that displayed visible streaks of condensation whenever charged particles passed through—similar to the jet trails etched by airplanes in the sky. These patterns can be photographed, providing a valuable record of what transpires during an experiment.

Although Wilson completed his first working model in 1911, it wasn’t until 1924 that cloud chambers came into use in nuclear physics. That year Patrick Blackett, a graduate student in Rutherford’s group, used such a device to record the release of protons in the transmutation of nitrogen. His data wonderfully complemented Rutherford’s scintillation experiments, presenting irrefutable evidence of artificial nuclear transmutation.

Protons are not the only inhabitants of nuclei. In another of his legendary prognostications, in 1920 Rutherford predicted that nuclei harbor neutral particles along with protons. Twelve years later, Rutherford’s student James Chadwick would discover the neutron, similar in mass to the proton but electrically neutral. In a key paper written right after Chadwick’s discovery, “On the Structure of Atomic Nuclei,” Heisenberg introduced the modern picture of protons and neutrons constituting the cores of all atoms.

The nuclear picture helps explain the different types of radioactivity. Alpha decay occurs when nuclei emit two protons and two neutrons at once—an exceptionally stable combination. Beta decay, on the other hand, derives from neutrons decaying into protons and electrons, with the beta particles being the released electrons. As Pauli realized, this couldn’t be the whole story, because some energy and momentum seemed to go missing. He predicted the existence of an unseen neutral particle that came to be known as the neutrino. Finally, gamma decay represents the release of energy when a nucleus transforms from a higher- to a lower-energy quantum state. In alpha and beta decay, the numbers of protons and neutrons in the nucleus change, resulting in a different element; in gamma decay, those numbers stay the same.
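The proton and neutron bookkeeping for the three decay types can be sketched in a few lines. The function and its mode names are hypothetical, and the sketch tracks only the counts described in the paragraph. In beta decay, the electron and the neutral particle Pauli proposed (today identified as an antineutrino) carry off energy but leave no protons or neutrons behind.

```python
# Element symbols for the few atomic numbers used in the example below
SYMBOLS = {90: "Th", 91: "Pa", 92: "U"}


def decay(protons, neutrons, mode):
    """Return (protons, neutrons) of the nucleus after one decay of the given mode."""
    if mode == "alpha":   # two protons and two neutrons leave together
        return protons - 2, neutrons - 2
    if mode == "beta":    # one neutron becomes a proton; an electron is emitted
        return protons + 1, neutrons - 1
    if mode == "gamma":   # only energy is released; composition is unchanged
        return protons, neutrons
    raise ValueError(f"unknown decay mode: {mode!r}")


def label(protons, neutrons):
    """Element symbol plus total particle count, e.g. 'U-238'."""
    return f"{SYMBOLS[protons]}-{protons + neutrons}"


# Uranium-238 has 92 protons and 146 neutrons
nucleus = (92, 146)
for mode in ("alpha", "beta", "gamma"):
    before = label(*nucleus)
    nucleus = decay(*nucleus, mode)
    print(f"{before} --{mode}--> {label(*nucleus)}")
```

The first two steps match the beginning of the real uranium-238 decay chain (uranium to thorium to protactinium). The final gamma step is included only to show that the element does not change.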

From Rutherford’s historic techniques and discoveries, an idea was forged: using elementary particles to probe the natural world on its tiniest scale. Radioactive materials pumping out alpha particles offered a reliable source for early investigations of the nucleus. They were perfect for Geiger and Marsden’s scattering experiments that proved that atoms have tiny cores. Yet, as Rutherford came to realize, exploring nuclear properties in a fuller and deeper way would require much higher-energy probes. Breaking through the nuclear fortress would take a sturdy battering ram—particles propelled through artificial means to fantastically high velocities. He decided that the Cavendish would build a particle accelerator—a project that he recognized would entail a certain amount of theoretical know-how. Fortunately, direct from Stalin’s own fortress nation, a whiz kid would slip away and bring his cache of quantum knowledge to Free School Lane.