An Introduction to Applied Cognitive Psychology - David Groome, Anthony Esgate, Michael W. Eysenck (2016)

Chapter 6. Memory improvement

David Groome and Robin Law

6.1 INTRODUCTION

Most of us would like to have a better memory. The ability to remember things accurately and effortlessly would make us more efficient in our daily lives, and it would make us more successful in our work. It might also help us socially, by helping us to remember appointments and to avoid the embarrassment of forgetting the name of an acquaintance. Perhaps the most important advantage, however, would be the ability to study more effectively, for example when revising for an examination or learning a new language. While there are no magical ways of improving the memory dramatically overnight, a number of strategies have been developed that can help us to make worthwhile improvements in our memory performance, and for a few individuals the improvements have been quite spectacular. In this chapter, some of the main principles and techniques of memory improvement will be reviewed and evaluated in the light of scientific research. The chapter begins with a review of the most efficient ways to learn new material and the use of mnemonic strategies, and then moves on to consider the main factors influencing retrieval.

6.2 LEARNING AND INPUT PROCESSING

MEANING AND SEMANTIC PROCESSING

One of the most important principles of effective learning is that we will remember material far better if we concentrate on its meaning, rather than just trying to learn it by heart. Mere repetition or mindless chanting is not very efficient. To create an effective memory trace we need to extract as much meaning from the material as we can, by connecting it with our store of previous knowledge. In fact this principle has been used for centuries in the memory enhancement strategies known as mnemonics, which mostly involve techniques to increase the meaningfulness of the material to be learnt. These will be considered in the next section.

Bartlett (1932) was the first to demonstrate that people are better at learning material that is meaningful to them. He used story-learning experiments to show that individuals tend to remember the parts of a story that make sense to them, and they forget the less meaningful sections. Subsequent research has confirmed that meaningful processing (also known as semantic processing) creates a stronger and more retrievable memory trace than more superficial forms of processing, by making use of previous experience and knowledge.

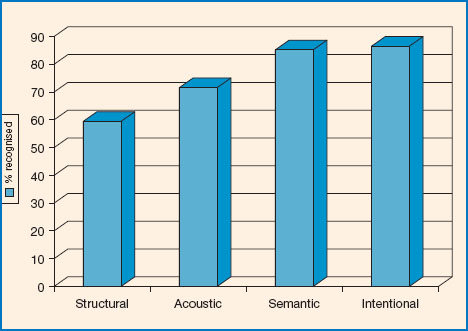

Bartlett’s story-learning experiments were unable to identify the actual type of processing used by his participants, but more recent studies have controlled the type of processing employed by making use of orienting tasks (Craik and Tulving, 1975; Craik, 1977). Orienting tasks are activities designed to direct the subject’s attention to certain features of the test items, in order to control the type of processing carried out on them. For example, Craik (1977) presented the same list of words visually to four different groups of subjects, three of which were each required to carry out a different orienting task on every one of the words. The first group carried out a very superficial structural task on each of the words (e.g. is the word written in capitals or small letters?), while the second group carried out an acoustic task (e.g. does the word rhyme with ‘cat’?) and the third group carried out a semantic task (e.g. is it the name of a living thing?). The fourth group was given no specific orienting task, but was simply instructed to try to learn the words as best they could. The subjects were subsequently tested for their ability to recognise the words, and the results obtained are shown in Figure 6.2.

Figure 6.1 How can you make sure you will remember all this in the exam?

Source: copyright Aaron Amat / Shutterstock.com.

It is clear that the group carrying out the semantic orienting task achieved far higher retrieval scores than those who carried out the acoustic task, and they in turn performed far better than the structural processing group. Craik concluded that semantic processing is more effective than shallower, more superficial types of processing (e.g. acoustic and structural processing). In fact the semantic group performed as well as the group that had carried out intentional learning, even though the intentional learning group were the only participants in this experiment who had been making a deliberate effort to learn the words. It seems, then, that even when we are trying our best to learn, we cannot improve on a semantic processing strategy, and indeed it is likely that the intentional learning group were making use of some kind of semantic strategy of their own. The basic lesson we can learn from these orienting task experiments is that we should focus our attention on the meaning of items we wish to learn, since semantic processing is far more effective than the non-semantic forms of processing tested.

Figure 6.2 The effect of different types of input processing on word recognition (Craik, 1977).

ORGANISATION AND ELABORATION

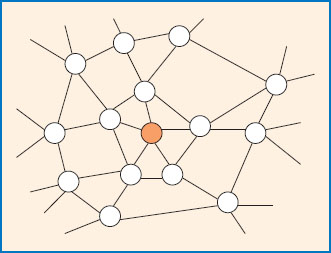

A number of theories have been proposed to explain the superiority of semantic processing, most notably the ‘levels of processing’ theory (Craik and Lockhart, 1972). The levels of processing theory states that the more deeply we process an item at the input stage the better it will be remembered, and semantic processing is seen as being the deepest of the various types of processing to which new input is subjected. Later versions of this theory (Lockhart and Craik, 1990; Craik, 2002) suggest that semantic processing is more effective because it involves more ‘elaboration’ of the memory trace, which means that a large number of associative connections are made between the new trace and other traces already stored in the memory (see Figure 6.3). The result of such elaborative encoding is that the new trace becomes embedded in a rich network of interconnected memory traces, each one of which has the potential to activate all of those connected to it. Elaborative encoding thus creates a trace that can be more easily retrieved in the future because there are more potential retrieval routes leading back to it.

One way of elaborating memory traces and increasing their connections with other traces is to organise them into groups or categories of similar items. Tulving (1962) found that many of the participants who were asked to learn a list of words would organise the words into categories spontaneously, and those who did so achieved far higher retrieval scores than subjects who had not organised the list of words. Dunlosky et al. (2013) concluded from a meta-analysis of previous studies that students who deliberately tried to relate new test items to their existing body of previous knowledge achieved better memory scores, probably because of the elaborative integration of the new items into an extensive network of interconnected traces.

Figure 6.3 Elaborative connections between memory traces.

Eysenck and Eysenck (1980) demonstrated that deep processing offers benefits not only through increased elaboration but also through increasing the distinctiveness of the trace. They pointed out that a more distinct trace will be easier to discriminate from other rival traces at the retrieval stage, and will provide a more exact match with the appropriate retrieval cue. MacLeod (2010) has shown that learning can be improved by merely speaking test items out loud, which he suggests may help to increase their distinctiveness.

VISUAL IMAGERY

Visual imagery is another strategy known to assist memory. Paivio (1965) found that participants were far better at retrieving concrete words such as ‘piano’ (i.e. names of objects for which a visual image could be readily formed) than they were at retrieving abstract words such as ‘hope’ (i.e. concepts that could not be directly imaged). Paivio found that concrete words retained their advantage over abstract words even when the words were carefully controlled for their meaningfulness, so the difference could be clearly attributed to the effect of imagery. Paivio (1971) explained his findings by proposing the dual coding hypothesis, which suggests that concrete words have the advantage of being encoded twice, once as a verbal code and then again as a visual image. Abstract words, on the other hand, are encoded only once, since they can only be stored in verbal form. Dual coding may offer an advantage over single coding because it can make use of two different loops in the working memory (i.e. the visuospatial and phonological loops), thus increasing the total information storage and processing capacity for use in the encoding process.

It is generally found that under most conditions people remember pictures better than they remember words (Haber and Myers, 1982; Paivio, 1991). This may suggest that pictures offer more scope for dual coding than do words, though pictures may also be more memorable because they tend to contain more information than words (e.g. a picture of a dog contains more information than the three letters ‘DOG’).

Bower (1970) demonstrated that dual encoding could be made even more effective if the images were interactive. In his experiment, three groups of subjects were each required to learn a list of thirty pairs of concrete words (e.g. frog-piano). The first group was instructed to simply repeat the word pairs after they were presented, while the second group was asked to form separate visual images of each of the items. A third group was asked to form visual images in which the two items represented by each pair of words were interacting with one another in some way, for example a frog playing a piano (see Figure 6.4). A test of recall for the word pairs revealed that the use of visual imagery increased memory scores, but the group using interactive images produced the highest scores of all.

Figure 6.4 An interactive image.

A recent study by Schmeck et al. (2014) demonstrated that the recall of a written passage can be improved by drawing some aspect of the content, which clearly adds a visual image to the verbal material. De Beni and Moe (2003) confirmed the value of imagery in assisting memory, but they found that visual imagery is more effective when applied to orally presented items than to visually presented items. A possible explanation of this finding is that visual imagery and visually presented items will be in competition for the same storage resources (i.e. the visuospatial loop of the working memory), whereas visual imagery and orally presented items will be held in different loops of the working memory (the visuospatial loop and the phonological loop, respectively) and will thus not be competing for storage space.

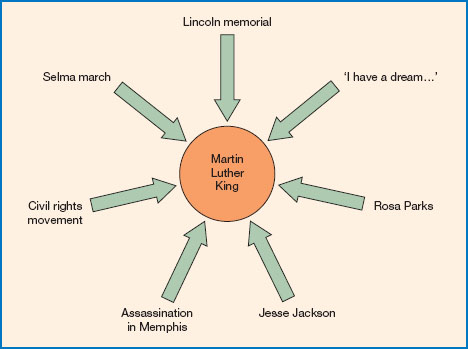

MIND MAPS

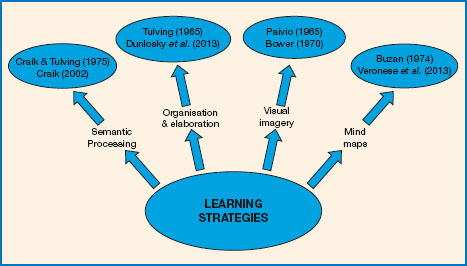

Another useful revision strategy is to draw a diagram that organises your notes so that items with something in common are grouped together, for example by drawing a ‘mind map’ (Buzan, 1974) of the sort shown in Figure 6.5. Such strategies can help you to organise facts or ideas in a way that strengthens the associative connections between them, and they can also add an element of visual imagery.

One particularly effective form of mind map is known as a ‘concept map’, because it is organised as a hierarchy of concepts, with major concepts at the top and subsidiary concepts below.

The use of mind maps has been shown to have benefits for students in real-life settings. Lahtinen et al. (1997) reported that medical students who made use of memory improvement strategies during their revision were more successful in their exams than those who did not, and the most effective strategies were found to be the use of mind maps and organised summaries of lecture notes. McDougal and Gruneberg (2002) found that students preparing for a psychology exam were more successful if their revision included the use of mind maps and name lists. Memory strategies involving first-letter cues or concepts were less effective, though they still had some benefit, while those students who did not use a memory strategy of any kind performed worst of all. In a more recent study, Veronese et al. (2013) reported that medical students who made use of mind maps in their revision gained better marks than other students.

Figure 6.5 A mind map.

The experimental studies described above have established several important principles which are known to help students to learn more effectively, notably the use of semantic and elaborative encoding, organisation of input, and imagery. These are all principles that we can apply to everyday learning or indeed to exam revision. These same general principles of memory facilitation have also been used in more specialised memory improvement strategies known as mnemonics, which are techniques that add meaning, organisation and imagery to material that might otherwise possess none of these qualities.

6.3 MNEMONICS

MNEMONIC STRATEGIES

It is relatively easy to learn material that is intrinsically meaningful, but we are sometimes required to learn items that contain virtually no meaning of their own, such as a string of digits making up a telephone number. This normally requires rote learning (also referred to as ‘learning by heart’ or ‘learning parrot-fashion’), and the human brain does not seem to be very good at it.

People have known for centuries that memory for meaningless items can be greatly improved by strategies that artificially add meaning to an item that otherwise has little intrinsic meaningful content of its own, and such strategies can be even more helpful when they also add a strong visual image to the item. Various techniques have been devised for this purpose, and they are known as mnemonics.

First-letter mnemonics

You probably used first-letter mnemonics when you were at school, for example when you were learning the sequential order of the colours of the spectrum. The colours of the spectrum (which are: red, orange, yellow, green, blue, indigo, violet) are usually learnt by using a mnemonic sentence that provides a reminder of the first letter of each colour, such as ‘Richard of York Gave Battle In Vain’ or alternatively that colourful character ‘RoyGBiv’. It is important to note that in this example it is not the actual colour names that are meaningless, but their sequential order. The mnemonic is therefore used primarily for remembering the sequential order of the colours. Other popular first-letter mnemonics include notes of the musical scale (e.g. ‘Every Good Boy Deserves Favours’), the cranial nerves (e.g. ‘On Old Olympus Towering Top A Fin And German Viewed A Hop’) and the ‘big five’ factors of personality (e.g. ‘OCEAN’). All of these mnemonics work by creating a sequence that is meaningful and incorporates potential retrieval cues.

Rhyme mnemonics

For other purposes a simple rhyme mnemonic may help. For example, we can remember the number of days in each month by recalling the rhyme ‘Thirty days hath September, April, June, and November. All the rest have thirty-one, except for February all alone (etc.)’. As before, these mnemonics work essentially by adding meaning and structure to material that is not otherwise intrinsically meaningful, and a further benefit is that the mnemonic also provides retrieval cues which can later be used to retrieve the original items.

Chunking

A good general strategy for learning long lists of digits is the method of chunking (Wickelgren, 1964), in which groups of digits are linked together by some meaningful connection. This technique not only adds meaning to the list, but also reduces the number of items to be remembered, because several digits have been combined to form a single memorable chunk. For example, try reading the following list of digits just once, then cover it up and see if you can write them down correctly from memory:

1984747365

It is unlikely that you will have recalled all of the digits correctly, as there were ten of them and for most people the maximum digit span is about seven items. However, if you try to organise the list of digits into some meaningful sequence, you will find that remembering it becomes far easier. For example, the sequence of digits above happens to contain three sub-sequences which are familiar to most people. Try reading the list of digits once again, but this time trying to relate each group of digits to the author George Orwell, a jumbo jet and the number of days in a year. You should now find it easy to remember the list of digits, because it has been transformed into three meaningful chunks of information. What you have actually done is to add meaning to the digits by making use of your previous knowledge, and this is the principle underlying most mnemonic systems. Memory can be further enhanced by creating a visual image to represent the mnemonic, for example a mental picture of George Orwell sitting in a jumbo jet for a year.

In real life, not all digit sequences will lend themselves to chunking as obviously as in this example, but if you use your imagination and make use of all of the number sequences that you already know (such as birthdays, house numbers or some bizarre hobby you may have), you should be able to find familiar sequences in most digit strings. This technique has in fact been used with remarkable success by expert mnemonists such as SF (an avid athletics enthusiast who made use of his knowledge of running times), as explained later in the section on expert mnemonists.

The method of loci

The method of loci involves a general strategy for associating an item we wish to remember with a location that can be incorporated into a visual image. For example, if you are trying to memorise the items on a shopping list, you might try visualising your living room with a tomato on a chair, a block of cheese on the radiator and a bunch of grapes hanging from the ceiling. You can further enhance the mnemonic by using your imagination to find some way of linking each item with its location, such as imagining someone sitting on the chair and squashing the tomato, or the cheese melting when the radiator gets hot. When you need to retrieve the items on your list, you do so by taking an imaginary walk around your living room and observing all of the imaginary squashed tomatoes and cascades of melting cheese.

It is said that the method of loci was first devised by the Greek poet Simonides in about 500 BC, when he achieved fame by remembering the exact seating positions of all of the guests at a feast after the building had collapsed and crushed everyone inside. Simonides was apparently able to identify each of the bodies even though they had been crushed beyond recognition, though cynics among you may wonder how they checked his accuracy. Presumably they just took his word for it.

Massen et al. (2009) found that the method of loci works best when based on an imaginary journey which is very familiar to the individual and thus easily reconstructed. For example, the journey to work, which you do every day, provides a better framework than a walk around your house or your neighbourhood.

The face-name system

Lorayne and Lucas (1974) devised a mnemonic technique to make it easier to learn people’s names. This is known as the face-name system, and it involves thinking of a meaningful word or image that is similar to the person’s name (for example, ‘Groome’ might remind you of horses, or maybe a bridegroom). The next stage is to select a prominent feature of the person’s face, and then to create an interactive image linking that feature with the image you have related to their name (e.g. you might have to think about some aspect of my face and somehow relate it to a horse or a wedding ceremony, preferably the latter). This may seem a rather cumbersome system, and it certainly requires a lot of practice to make it work properly. However, it seems to be very effective for those who persevere with it. For example, former world memory champion Dominic O’Brien has used this method to memorise accurately the names of all 100 strangers in an audience, despite being introduced only briefly to each one of them (O’Brien, 1993).

The keyword system

A variant of the face-name system has been used to help people to learn new languages. It is known as the keyword system (Atkinson, 1975; Gruneberg, 1987), and it involves thinking of an English word which in some way resembles a foreign word that is to be learnt. For example, the French word ‘hérisson’ means ‘hedgehog’. Gruneberg suggests forming an image of a hedgehog with a ‘hairy son’ (see Figure 6.6).

Alternatively, you might prefer to imagine the actor Harrison Ford with a hedgehog. You could then try to conjure up that image when you next need to remember the French word for hedgehog (which admittedly is not a frequent occurrence). Gruneberg further suggests that the gender of a French noun can be attached to the image, by imagining a boxer in the scene in the case of a masculine noun, or perfume in the case of a feminine noun. Thus for ‘le hérisson’ you might want to imagine Harrison Ford boxing against a hedgehog. This may seem like an improbable image (though arguably no more improbable than his exploits in the Indiana Jones movies), but in fact the more bizarre and distinctive the image the more memorable it is likely to be.

Figure 6.6 A hedgehog with its ‘hairy son’ (le hérisson).

The keyword method has proved to be a very effective way to learn a foreign language. Raugh and Atkinson (1975) reported that students making use of the keyword method to learn a list of Spanish words scored 88 per cent on a vocabulary test, compared with just 28 per cent for a group using more traditional study methods. Gruneberg and Jacobs (1991) studied a group of executives learning Spanish, and found that by using the keyword method they were able to learn no fewer than 400 Spanish words, plus some basic grammar, in only 12 hours of teaching. This was far superior to traditional teaching methods, and as a bonus the executives also found the keyword method more enjoyable. Thomas and Wang (1996) also reported very good results for the keyword method of language learning, noting that it was particularly effective when followed by an immediate test to strengthen learning.

The peg-word system

Another popular mnemonic system is the peg-word system (Higbee, 1977, 2001), which is used for memorising meaningless lists of digits. In this system each digit is represented by the name of an object that rhymes with it. For example, in one popular system you learn that ‘ONE is a bun, TWO is a shoe, THREE is a tree’, and so on (see Figure 6.7). Having once learnt these associations, any sequence of numbers can be woven into a story involving shoes, trees, buns etc.

Like many mnemonic techniques, the peg-word system has a narrow range of usefulness. It can help with memorising a list of digits, but it is of little help with most other memory tasks.

Figure 6.7 An example of the peg-word system.

GENERAL PRINCIPLES OF MNEMONICS

Chase and Ericsson (1982) concluded that memory improvement requires three main strategies:

1 Meaningful encoding (relating the items to previous knowledge);

2 Structured retrieval (adding potential cues to the items for later use);

3 Practice (to make the processing automatic and very rapid).

In summary, most mnemonic techniques involve finding a way to add meaning to material that is not otherwise intrinsically meaningful, by somehow connecting it to one’s previous knowledge. Memorisation can often be made even more effective by creating a visual image to represent the mnemonic. And finally, retrieval cues must be attached to the items to help access them later. These are the basic principles underlying most mnemonic techniques, and they are used regularly by many of us without any great expertise or special training. However, some individuals have developed their mnemonic skills by extensive practice, and have become expert mnemonists. Their techniques and achievements will be considered in the next section.

EXPERT MNEMONISTS

The mnemonic techniques described above can be used by anyone, but a number of individuals have been studied who have achieved remarkable memory performance, far exceeding that of the average person. They are sometimes referred to as ‘memory athletes’, because they have developed exceptional memory powers by extensive practice of certain memory techniques.

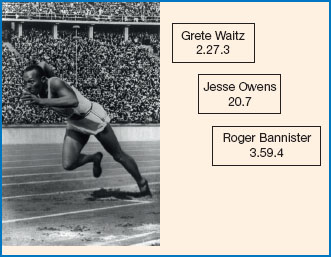

Chase and Ericsson (1981) studied the memory performance of an undergraduate student (‘SF’), who trained himself to memorise long sequences of digits simply by searching for familiar number sequences. It so happened that SF was a running enthusiast, and he already knew the times recorded in a large number of races, such as world records, and his own best performances over various distances. For example, Roger Bannister’s time for the first mile run in under four minutes was 3 minutes 59.4 seconds, so for SF the sequence 3594 would represent Bannister’s record time. SF was thus able to make use of his prior knowledge of running times to develop a strategy for chunking digits (see Figure 6.8).

In fact SF had to work very hard to achieve these memory skills. He was eventually able to memorise lists of up to eighty digits, but only after he had practised his mnemonic techniques for one hour a day over a period of two years. It appears that SF was not innately gifted or superior in his memory ability, but he achieved his remarkable feats of memory by sheer hard work. Despite his amazing digit span, his performance in other tests of memory was no better than average. For example, his memory span for letters and words was unremarkable and showed no benefit from his mnemonic skills, even though these tasks are very similar to the digit span task. In fact, even his digit span performance fell to normal levels when he was presented with digit lists that could not be readily organised into running times.

Dominic O’Brien trained his memory to such a level that he became the world memory champion in 1992, and he was able to make a living by performing memory feats in front of audiences and by gambling at blackjack. He wrote a book about his memory improvement techniques (O’Brien, 1993), in which he explained that he had been born with an average memory, and he had only achieved his remarkable skills by devoting six years of his life to practising mnemonic strategies.

Another expert mnemonist, Rajan Mahadevan, successfully memorised the constant pi (used for calculating the area or circumference of a circle from its radius) to no fewer than 31,811 figures, though again his memory in most other respects was unexceptional (Ericsson et al., 2004). More recently, another expert mnemonist, known as ‘PI’, has improved on this record by reciting pi to 64,000 figures, using mainly the method of loci. However, ‘PI’ is reported as having a poor memory for most other tasks (Raz et al., 2009).

Figure 6.8 Running times as used as a mnemonic strategy by SF (Chase and Ericsson, 1981).

Source: photo of Jesse Owens at the start of his record-breaking 200-metre race, 1936. Courtesy of Library of Congress.

Figure 6.9 The judge (L) holds up a card for Nelson Dellis who recites from memory the order of two decks of cards, 12 March 2011, during the 14th annual USA Memory Championships in New York. Dellis won the competition.

Source: copyright: Don Emmert/Getty Images.

Maguire et al. (2003) studied a number of recent memory champions and concluded that the mnemonic technique most frequently used was the method of loci. Roediger (2014) reports that the USA memory champion Nelson Dellis mainly relies on the method of loci and the peg-word system.

From these studies it would appear that any reasonably intelligent person can achieve outstanding feats of memory if they are prepared to put in the practice, without needing any special gift or innate superiority (Ericsson et al., 2004). However, for most of us it is probably not worth devoting several years of our lives to practising a set of memory skills which are of fairly limited use. Mnemonics tend to be very specific, so that each mnemonic facilitates only one particular task. Even the most skilled mnemonists tend to excel on only one or two specialised tasks, and their skills do not transfer to other types of memory performance (Groeger, 1997). Memory training therefore seems to improve performance only on the particular task that has been practised, rather than improving memory function in general.

Another important limitation is that mnemonic skills tend to be most effective when used for learning meaningless material such as lists of digits, but this is quite a rare requirement in real-life settings. Most of us are not prepared to devote several years of our lives to becoming memory champions, just as most of us will never consider it worth the effort and sacrifice required to become a tennis champion or an expert juggler. However, just as the average tennis player can learn something of value from watching the top players, we can also learn something from studying the memory champions. We may not aspire to equal their achievements, but they do offer us an insight into what the human brain is capable of.

GIFTED INDIVIDUALS

While most memory experts have achieved their skills by sheer hard work, a few very rare individuals have been studied who seem to have been born with special memory powers. The Russian psychologist Luria (1975) studied a man called V.S. Shereshevskii (referred to as ‘S’), who not only employed a range of memory organisation strategies but also seemed to have been born with an exceptional memory. S was able to memorise lengthy mathematical formulae and vast tables of numbers very rapidly, and with totally accurate recall. He was able to do this because he could retrieve an array of figures with the same vividness as an actual perceived image, and he could project these images onto a wall and simply ‘read off’ figures from them like a person reading a book. This phenomenon is known as eidetic imagery, and it is an ability that occurs extremely rarely. On one occasion S was shown an array of fifty digits, and he was still able to recall them with perfect accuracy several years later. S used his exceptional memory to make a living, by performing tricks of memory on stage.

However, his eidetic imagery turned out to have certain drawbacks in everyday life. In the first place, his eidetic images provided a purely visual representation of memorised items, without any clear meaning or understanding. A further problem for S was his inability to forget his eidetic images, which tended to trouble him and often prevented him from directing his attention to other items. In the later part of his life S developed a psychiatric illness, possibly as a consequence of the stress and overload of his mental faculties resulting from his almost infallible memory. On the whole, S found that his unusual memory powers produced more drawbacks than advantages, and this may provide a clue to the reason for the extreme rarity of eidetic imagery. It is possible that eidetic imagery was more common among our ancient ancestors but has mostly died out through natural selection. Presumably it would be far more common today if it conveyed a general advantage in normal life.

Wilding and Valentine (1994) make a clear distinction between those who achieve outstanding memory performance through some natural gift (as in the case of Shereshevskii) and those who achieve it by practising some kind of memory improvement strategy (such as Dominic O’Brien). They found some interesting differences between the two groups, notably that those with a natural gift tended to make less use of mnemonic strategies, and that they frequently had close relatives who shared a similar gifted memory.

Another form of exceptional memory, which has been reported in a few rare individuals, is the ability to recognise any face they have previously encountered (Russell et al., 2009). These people are known as ‘super-recognisers’, and their exceptional ability appears to be largely genetic in origin (Wilmer et al., 2010).

A few individuals have been studied who seem to have clear recall of almost every event or experience of their lives, an ability known as Highly Superior Autobiographical Memory (HSAM for short). For example, LePort et al. (2012) studied a woman called Jill Price who was able to run through her entire life in vivid detail. However, she describes this ability as a burden rather than a gift, as her past memories tend to dominate her thoughts in a way that resembles obsessive-compulsive disorder. It is also notable that her performance on standard memory tests is no better than average.

WORKING MEMORY TRAINING

The preceding sections about memory improvement and mnemonic techniques are concerned mainly with long-term memory (LTM). However, in recent years there has been a substantial growth in the popularity of working memory (WM) training, and a range of studies have claimed to provide evidence for the effectiveness of this training (Shipstead et al., 2012). This research was introduced in Chapter 5, but only in relation to WM improvement in the elderly.

The particular interest in WM as opposed to other aspects of memory arises from the finding that WM plays an important role in many other aspects of cognition (Oberauer et al., 2005), so that an improvement of WM might offer enhancement of a wide range of cognitive abilities and even general intelligence (Jaeggi et al., 2008).

WM training involves computer-based tasks that push the individual to their maximum WM capacity. The process is repeated many times with constant reinforcement and feedback, and this typically leads to improved performance on the training task. The assumption behind such training is that improvements in task performance reflect improved WM performance (Shipstead et al., 2012). However, the studies claiming to provide evidence for the effectiveness of such training have several shortcomings. Most notably, observed improvements in task performance do not necessarily reflect improvements in working memory, but may simply reflect improvement in performing that particular task. It is therefore of great importance to demonstrate that the effects of training transfer to untrained tasks, but numerous studies have suggested that this is not the case (Melby-Lervåg and Hulme, 2012; Shipstead et al., 2012).

There are further methodological issues with studies of WM training. Many of these studies have not included a control group, which would be crucial to control for confounding factors such as test-retest effects (Shipstead et al., 2012), and studies in this field have generally not controlled for participant expectation effects. When Redick et al. (2013) controlled for these factors, they found no improvement in WM (or general intelligence) after training. In those cases where improvements in WM have been found, the effects appear to be short-lived, being present immediately after training but disappearing thereafter (Melby-Lervåg and Hulme, 2012).

In summary, the results of WM training studies are inconsistent, and despite the interest in WM training within the lay population there is little evidence for its effectiveness, at least in younger adults, due to the absence of appropriately controlled research studies (Melby-Lervåg and Hulme, 2012; Shipstead et al., 2012). However, some rather more promising results have been reported recently in older adults (e.g. Karbach and Verhaeghen, 2014), and these studies are reviewed in Chapter 5.

6.4 RETRIEVAL AND RETRIEVAL CUES

THE IMPORTANCE OF RETRIEVAL CUES

Learning a piece of information does not automatically guarantee that we will be able to retrieve it whenever we want to. Sometimes we cannot find a memory trace even though it remains stored in the brain somewhere. In this case the trace is said to be ‘available’ (i.e. in storage) but not ‘accessible’ (i.e. retrievable). In fact, most forgetting is probably caused by retrieval failure rather than the actual loss of a memory trace from storage, meaning that the item is available but not accessible.

Successful retrieval has been found to depend largely on the availability of suitable retrieval cues (Tulving and Pearlstone, 1966; Tulving, 1976; Mantyla, 1986). Retrieval cues are items of information that jog our memory for a specific item, by somehow activating the relevant memory trace. For example, Tulving and Pearlstone (1966) showed that participants who had learnt lists of words belonging to different categories (e.g. fruit) were able to recall far more of the test items when they were reminded of the original category names. Mantyla (1986) found that effective retrieval cues (in this case self-generated) produced a dramatic improvement in cued recall scores, with participants recalling over 90 per cent of the words correctly from a list of 600 words.

In fact the main reason for the effectiveness of elaborative semantic processing (see Section 6.2) is thought to be that it creates associative links with other memory traces and thus increases the number of potential retrieval cues that can reactivate the target item (Craik and Tulving, 1975; Craik, 2002). This is illustrated in Figure 6.10.

These findings suggest that when you are trying to remember something in a real-life setting, you should deliberately seek as many cues as you can find to jog your memory. However, in many situations there are not many retrieval cues to go on, so you have to generate your own. For example, in an exam the only overtly presented cues are the actual exam questions, and these do not usually provide much information. You therefore have to use the questions as a starting point from which to generate further memories of material learnt during revision sessions. If your recall of relevant information is patchy or incomplete, you may find that focusing your attention closely on one item which you think may be correct (e.g. the findings of an experimental study) helps to cue the retrieval of other information associated with it (e.g. the design of the study, its authors, and maybe even a few other related studies if you are lucky). Try asking as many different questions as you can about the topic (Who? When? Why? etc.). The main guiding principle here is to make the most of any snippets of information that you have, by using them to generate more information through activation of related memory traces.

Figure 6.10 Retrieval cues leading to a memory trace.

Occasionally you may find that you cannot remember any relevant information at all. This is called a retrieval block, and it is probably caused by a combination of the lack of suitable cues (and perhaps inadequate revision) and an excess of exam nerves. If you do have the misfortune to suffer a retrieval block during an exam, there are several strategies you can try. In the first place, it may be helpful to simply spend a few minutes relaxing, by thinking about something pleasant and unrelated to the exam. It may help to practise relaxation techniques in advance for use in such circumstances. In addition, there are several established methods of generating possible retrieval cues, which may help to unlock the information you have memorised. One approach is the ‘scribbling’ technique (Reder, 1987), which involves writing on a piece of scrap paper everything you can remember that relates even distantly to the topic, regardless of whether it is relevant to the question. You could try writing a list of the names of all of the psychologists you can remember. If you cannot remember any, you could just try writing down all the names of people you know, starting with yourself. (If you cannot even remember your own name, then things are not looking so good.) Even if the items you write down are not directly related to the question, there is a strong possibility that some of them will cue something more relevant.

When you are revising for an exam it can often be helpful to create potential retrieval cues in advance, which you can use when you get into the exam room. For example, you could learn lists of names or key theories, which will later jog your memory for more specific information. You could even try creating simple mnemonics for this purpose. Some of the mnemonic techniques described in Section 6.3 include the creation of potential retrieval cues (e.g. first-letter mnemonics). Gruneberg (1978) found that students taking an examination were greatly helped by using first-letter mnemonics of this kind, especially those students who had suffered a retrieval block.

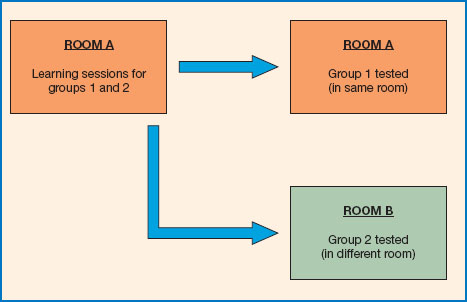

CONTEXT CUES AND THE ENCODING SPECIFICITY PRINCIPLE

The encoding specificity principle (Tulving and Thomson, 1973) suggests that, in order to be effective, a retrieval cue needs to contain some aspects of the original memory trace. According to this view, the probability of retrieving a trace depends on the match between features present in the retrieval cues and features encoded with the trace at the input stage. This is known as ‘feature overlap’, and it has been found to apply not only to features of the actual trace, but also to the context and surroundings in which the trace was initially encoded. There is now considerable evidence that reinstatement of context can be a powerful method of jogging the memory. For example, experiments have shown that recall is more successful when subjects are tested in the same room used for the original learning session, whereas moving to a different room leads to poorer retrieval (Smith et al., 1978). The design used in these experiments is illustrated in Figure 6.11.

Figure 6.11 The context reinstatement experiment of Smith et al. (1978).

Even imagining the original learning context can help significantly (Jerabek and Standing, 1992). It may therefore help you to remember details of some previous experience if you return to the actual scene, or simply try to visualise the place where you carried out your learning. Remembering other aspects of the learning context (such as the people you were with, the clothes you were wearing or the music you were listening to) could also be of some benefit. Context reinstatement has been used with great success by the police, as a means of enhancing the recall performance of eyewitnesses to crime as a part of the so-called ‘cognitive interview’ (Geiselman et al., 1985; Larsson et al., 2003). These techniques will be considered in more detail in Chapter 8. For a recent review of research on the encoding specificity principle, see Nairne (2015).

6.5 RETRIEVAL PRACTICE AND TESTING

RETRIEVAL PRACTICE AND THE TESTING EFFECT

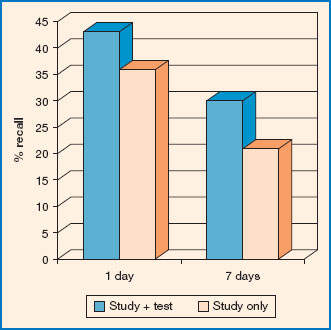

Learning is far more effective if it involves testing and retrieval of the material you are trying to learn. This is known as the testing effect, and it has been demonstrated by many research studies using a wide range of different materials. The testing effect has been found with the learning of wordlists (Allen et al., 1969), general knowledge (McDaniel and Fisher, 1991), map learning (Carpenter and Pashler, 2007) and foreign language learning (Carpenter et al., 2008). Figure 6.12 shows the results of one typical experiment.

McDaniel et al. (2007) confirmed the occurrence of the testing effect in a real-life setting, showing that students achieved higher exam marks if their revision focused on retrieval testing rather than mere re-reading of the exam material. Dunlosky et al. (2013) compared ten widely used learning techniques and rated practice testing as one of the two most effective (the other being distributed practice, discussed in Section 6.6). Practice testing was also found to be easy to use and applicable to a wide range of learning situations and materials.

Potts and Shanks (2012) found evidence that the benefits of testing are probably related to the fact that testing leads to reactivation of the test items, which makes them more easily strengthened. It has also been shown that learning benefits from a retrieval test even when the participant gives a wrong answer to the test (Hays et al., 2013; Potts and Shanks, 2014), provided that immediate feedback is given with the correct answer. Again, this rather unexpected effect is probably related to the fact that the correct answer is activated, even though the participant guessed wrongly.

Figure 6.12 The testing effect. The effect of testing on retrieval of Swahili-English word pairs (based on data from Carpenter et al., 2008).

The testing effect has important implications for anyone who is revising for an exam, or indeed anyone who is trying to learn information for any purpose. The research shows that revision will be far more effective if it involves testing and active retrieval of the material you are trying to learn, rather than merely reading it over and over again.

DECAY WITH DISUSE

Research on the testing effect has led to a reconsideration of the reasons why memories tend to fade away with time. It has been known since the earliest memory studies that memory traces grow weaker with the passage of time (Ebbinghaus, 1885). Ebbinghaus assumed that memories decay spontaneously as time goes by, but Thorndike (1914) argued that decay only occurs when a memory is left unused. Thorndike’s ‘Decay with Disuse’ theory has subsequently been updated by Bjork and Bjork (1992), who argued that a memory trace becomes inaccessible if it is not retrieved periodically. Bjork and Bjork’s ‘New Theory of Disuse’ proposes that frequent retrieval strengthens the retrieval route leading to a memory trace, which makes it easier to retrieve in the future. This theory is consistent with research findings on the testing effect.

Bjork and Bjork suggest that retrieval increases future retrieval strength, so each new act of retrieval constitutes a learning event. The new theory of disuse also proposes that retrieval strengthens the retrieved trace at the expense of rival traces. This effect was assumed to reflect the activity of some kind of inhibitory mechanism, and recent experiments have provided evidence for such an effect, which is known as retrieval-induced forgetting.

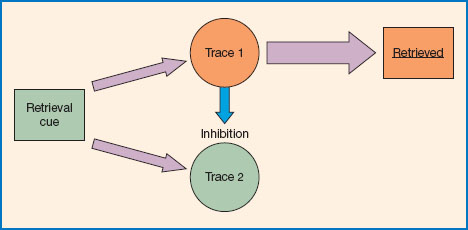

RETRIEVAL-INDUCED FORGETTING

Retrieval-induced forgetting (RIF) was first demonstrated by Anderson et al. (1994), who discovered that the retrieval of an item from memory made other items from the same category more difficult to retrieve. For example, when participants were presented with a list of items that included several types of fruit, retrieving the word ‘apple’ made other fruits such as ‘orange’ or ‘pear’ more difficult to retrieve subsequently, but it had no effect on the retrievability of test items from other categories (e.g. ‘shirt’ or ‘socks’). Anderson et al. concluded that the retrieval of an item from a particular category had somehow inhibited the retrieval of other items in the same category (see Figure 6.13).

The phenomenon of retrieval-induced forgetting has now been confirmed by a number of studies (e.g. Anderson et al., 2000; MacLeod and Macrae, 2001; Law et al., 2012). Anderson et al. (2000) established that the inhibition of an item only occurs when there are two or more items competing for retrieval, and when a rival item has actually been retrieved. This supported their theory that RIF is not merely some accidental event, but involves the active suppression of related but unwanted items in order to assist the retrieval of the target item. The inhibition account of RIF receives support from a number of studies (Anderson et al., 2000; Storm and Levy, 2012), but has been questioned by some recent findings (Raaijmakers and Jakab, 2013; Bäuml and Dobler, 2015).

Figure 6.13 Retrieval-induced forgetting (the retrieval of trace 1 is thought to inhibit retrieval of trace 2).

It is easy to see how such an inhibitory mechanism might have evolved (assuming that this is in fact the mechanism underlying RIF), because it would offer considerable benefits in helping people to retrieve items selectively. For example, remembering where you left your car in a large multi-storey car park would be extremely difficult if you had equally strong memories for every previous occasion on which you had ever parked your car. A mechanism that activated the most recent memory of parking your car, while inhibiting the memories of all previous occasions, would be extremely helpful (Anderson and Neely, 1996).

The discovery of retrieval-induced forgetting in laboratory experiments led researchers to wonder whether this phenomenon affected memory in real-life settings, such as the performance of a student revising for examinations or the testimony of an eyewitness in a court room. Macrae and MacLeod (1999) showed that retrieval-induced forgetting does indeed seem to affect students revising for examinations. Their participants were required to sit a mock geography exam, for which they were presented with twenty facts about two fictitious islands. The participants were then divided into two groups, one of which practised half of the twenty facts intensively while the other group did not. Subsequent testing revealed that the first group achieved good recall for the ten facts they had practised (as you would expect), but showed very poor recall of the ten un-practised facts, which were far better recalled by the group who had not carried out the additional practice. These findings suggest that last-minute cramming before an examination may sometimes do more harm than good, because it assists the recall of a few items at the cost of inhibiting the recall of related items that were not practised.

Shaw et al. (1995) demonstrated the effects of retrieval-induced forgetting in an experiment on eyewitness testimony. Their participants were required to recall information about a crime presented in the form of a slide show, and it was found that the recall of some details of the crime was inhibited by the retrieval of other related items. From these findings Shaw et al. concluded that in real crime investigations there was a risk that police questioning of a witness could lead to the subsequent inhibition of any information not retrieved during the initial interview. There is some recent evidence that retrieval-induced forgetting can increase the likelihood of eyewitnesses falling victim to the misinformation effect. The misinformation effect is dealt with in Chapter 7, and it refers to the contamination of eyewitness testimony by information acquired subsequent to the event witnessed, for example information heard in conversation with other witnesses or imparted in the wording of questions during police interrogation. MacLeod and Saunders (2005) confirmed that eyewitnesses to a simulated crime became more susceptible to the misinformation effect (i.e. their retrieval was more likely to be contaminated by post-event information) following guided retrieval practice of related items. However, they found that both retrieval-induced inhibition and the associated misinformation effect tended to disappear about 24 hours after the retrieval practice, suggesting that a 24-hour interval should be placed between two successive interviews with the same witness.

Recent studies have shown that the RIF effect is greatly reduced, or even absent, in individuals who are suffering from depression (Groome and Sterkaj, 2010) or anxiety (Law et al., 2010). This may help to explain why selective recall performance tends to deteriorate when people become depressed or anxious, as for example when students experience a retrieval block caused by exam nerves.

6.6 THE SPACING OF LEARNING SESSIONS

MASSED VERSUS SPACED STUDY SESSIONS

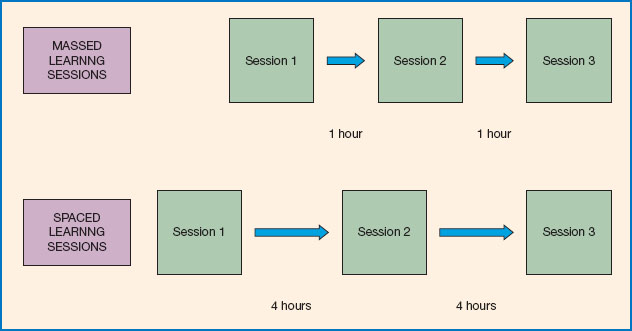

The most obvious requirement for learning something new is practice. We need to practise if we want to learn a new skill such as playing the piano, and we need practice when we are trying to learn new information, for example when revising for an examination. One basic question, which applies to most learning situations, is how best to organise our learning sessions: is it better to do the learning in one large ‘cramming’ session, or to spread it out over a number of separate learning sessions? These two approaches are known as ‘massed’ and ‘spaced’ learning, respectively, and they are illustrated in Figure 6.14.

It has generally been found that spaced learning is more efficient than massed learning. This was first demonstrated more than a century ago by Ebbinghaus (1885), who found that spaced learning sessions produced higher retrieval scores than massed learning sessions, when the total time spent learning was kept constant for both learning conditions. Ebbinghaus used lists of nonsense syllables as his test material, but the general superiority of spaced over massed learning has been confirmed by many subsequent studies using various different types of test material, such as learning lists of words (Dempster, 1987) and text passages (Reder and Anderson, 1982). Spaced learning has also generally proved to be better than massed when learning motor skills, such as keyboard skills (Baddeley and Longman, 1978). More recently, Pashler et al. (2007) reported that spaced learning offers significant benefits for students who are learning a new language, learning to solve maths problems or learning information from maps.

SPACING OVER LONGER RETENTION INTERVALS

Most of the earlier studies of spacing involved fairly short retention intervals, typically a few minutes or hours. However, Cepeda et al. (2008) found that spaced learning also offers major benefits when information has to be retained for several months. They carried out a systematic study of the precise amount of spacing required to achieve optimal learning at any given retention interval. For example, for a student who is preparing for an exam in one year’s time, Cepeda et al. found that the best retrieval will be achieved with study sessions about 21 days apart. However, if preparing for an exam in 7 days’ time, revision sessions should take place at 1-day intervals. In fact, students who adopted the optimal spacing strategy achieved a 64 per cent higher recall score than those who studied for the same total time but with a massed learning strategy. The benefits of spaced learning are therefore very substantial, and Cepeda et al. recommend that students and educators should make use of these findings whenever possible.
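The two examples quoted above can be compared with a little arithmetic. The short Python sketch below uses only those two figures from Cepeda et al. (2008) and expresses each recommended gap as a proportion of its retention interval; the function name `gap_ratio` is a hypothetical helper introduced for illustration, not part of the original study.

```python
def gap_ratio(gap_days: float, retention_days: float) -> float:
    """Return the gap between study sessions as a proportion of the retention interval."""
    return gap_days / retention_days

# The two examples quoted in the text (Cepeda et al., 2008):
# (gap between sessions in days, time until the exam in days)
examples = {
    "exam in 7 days": (1, 7),
    "exam in 1 year": (21, 365),
}

for label, (gap, retention) in examples.items():
    print(f"{label}: gap = {gap} day(s), ratio = {gap_ratio(gap, retention):.1%}")
```

On these two figures the optimal gap grows in absolute terms as the retention interval lengthens (1 day versus 21 days) but shrinks as a proportion of it (about 14 per cent versus about 6 per cent). Two data points are not enough to derive a general rule, so the ratios should not be applied to other intervals without consulting the original study.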

Of course, in real-life settings it may not always be possible or even advisable to employ spaced learning. For example, if you have only 20 minutes left before an exam, it might be better to use the entire period for revision rather than to take rest breaks, which will waste some of your precious time. Again, a very busy person might have difficulty fitting a large number of separate learning sessions into their daily schedule. But it is clear that spaced learning is the best strategy for most learning tasks, so it is worth adopting so long as you can fit the study sessions around your other activities.

Although spaced learning has been consistently found to be superior to massed learning over the long term, it has been found that during the actual study session (and for a short time afterwards) massed learning can show a temporary advantage (Glenberg and Lehman, 1980). The superiority of spaced learning only really becomes apparent with longer retention intervals. Most learning in real life involves fairly long retention intervals (e.g. revising the night before an exam), so in practice spaced learning will usually be the best strategy.

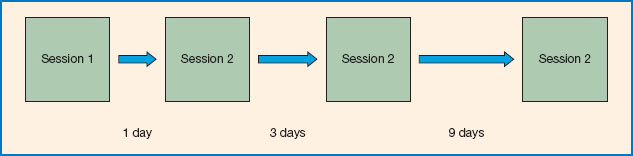

EXPANDING RETRIEVAL PRACTICE

Most early studies of spaced learning involved the use of uniformly spaced learning sessions. However, Landauer and Bjork (1978) found that learning is often more efficient if the time interval between retrieval sessions is steadily increased for successive sessions. This strategy is known as ‘expanding retrieval practice’, and it is illustrated in Figure 6.15. For example, the first retrieval attempt might be made after a 1-day interval, the second retrieval after 4 days, the third after 9 days and so on. Subsequent research has confirmed that expanding retrieval practice not only improves retrieval in healthy individuals (Vlach et al., 2014), but can also help the retrieval performance of amnesic patients such as those with Alzheimer’s disease (Broman, 2001).
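The expanding schedule in this example can be made concrete with a short Python sketch. It assumes that the gaps between retrieval attempts continue the squared-number pattern of the example (1, 4, 9 days, and so on); this is an illustrative choice, not a schedule prescribed by Landauer and Bjork (1978), and the function names are hypothetical. For comparison, it also builds a uniform schedule whose final attempt falls on the same day.

```python
from itertools import accumulate

def expanding_intervals(n_sessions: int) -> list[int]:
    """Gaps (in days) between successive retrieval attempts: 1, 4, 9, ...
    The squared-number pattern only extends the example in the text;
    it is an illustrative assumption, not a prescribed rule."""
    return [k * k for k in range(1, n_sessions + 1)]

def cumulative_days(intervals: list[int]) -> list[int]:
    """Convert gaps between attempts into days elapsed since initial learning."""
    return list(accumulate(intervals))

def uniform_intervals(n_sessions: int, total_days: int) -> list[float]:
    """Equal gaps whose final attempt falls on the same day as the expanding schedule."""
    return [total_days / n_sessions] * n_sessions

expanding = expanding_intervals(4)
print("expanding gaps:", expanding)                        # [1, 4, 9, 16]
print("expanding test days:", cumulative_days(expanding))  # [1, 5, 14, 30]
print("uniform gaps:", uniform_intervals(4, sum(expanding)))  # [7.5, 7.5, 7.5, 7.5]
```

Both schedules end on day 30, so the only difference lies in how the attempts are distributed: the expanding schedule places early attempts close together, while the item is still weakly learnt, and spreads later attempts further apart as the item becomes more thoroughly learnt.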

Figure 6.14 Massed and spaced learning sessions.

Vlach et al. (2014) found that an expanding practice schedule was the most effective strategy for a group of children learning to assign category names to a set of novel shapes. However, they reported that expanding practice only began to show an advantage at retrieval intervals of 24 hours or more. When the children were tested immediately after the final study session, the expanding practice schedule showed no advantage over an equally spaced schedule.

A number of possible explanations have been proposed for the superiority of spaced learning over massed learning. Ebbinghaus (1885) suggested that with massed learning there will be more interference between successive items, whereas frequent rest breaks will help to separate items from one another. A more recent theory, known as the ‘encoding variability hypothesis’ (Underwood, 1969), suggests that when we return to a recently practised item which is still fresh in the memory, we tend to just reactivate the same processing as we used last time. However, if we delay returning to the item until it has been partly forgotten, the previous processing will also have been forgotten, so we are more likely to process the item in a new and different way. According to this view, spaced learning provides more varied forms of encoding and thus a more elaborated trace, with more potential retrieval routes leading to it. The encoding variability hypothesis can also help to explain the effectiveness of expanding retrieval intervals, since the time interval required for the previous processing event to be forgotten will increase as the item becomes more thoroughly learnt. Schmidt and Bjork (1992) suggest that, for optimum learning, each retrieval interval should be long enough to make retrieval as difficult as possible without quite rendering the item irretrievable. They argue that the partial forgetting that occurs in between successive learning trials creates additional opportunities for learning.

Figure 6.15 Expanding retrieval practice.

Having considered the best strategies for organising and spacing our study sessions, we will now go on to consider whether people understand the value of these strategies.

RETRIEVAL STRENGTH, STORAGE STRENGTH AND METAMEMORY

In their original paper introducing the new theory of disuse, Bjork and Bjork (1992) make an important distinction between storage strength and retrieval strength. Storage strength reflects how strongly a memory trace has been encoded, and once established it is assumed to be fairly lasting and permanent. Retrieval strength, on the other hand, reflects the ease with which a memory trace can be retrieved at any particular moment in time, and this varies from moment to moment as a consequence of factors such as disuse and retrieval-induced inhibition. It is therefore possible that a trace with high storage strength may still be difficult to retrieve. In other words, a trace can be available without necessarily being accessible.

There is some evidence that when individuals attempt to estimate the strength of their own memories (a form of subjective judgement known as ‘metamemory’), they tend to base their assessment on memory performance during learning (i.e. retrieval strength) rather than lasting changes to the memory trace (storage strength). However, retrieval performance actually provides a very misleading guide to storage strength, because retrieval strength is extremely variable and is influenced by many different factors (Simon and Bjork, 2001; Soderstrom and Bjork, 2015). For example, it has been established that spaced learning trials produce more effective long-term retrieval than massed learning trials, but during the actual learning session massed learning tends to hold a temporary advantage in retrieval strength (Glenberg and Lehman, 1980). Simon and Bjork (2001) found that participants learning keyboard motor skills by a mixture of massed and spaced learning trials tended to judge massed trials as being more effective in the long term than spaced trials, because massed trials produced better retrieval performance at the learning stage. They appear to have been misled by focusing on transient retrieval strength rather than on long-term storage strength, which actually benefits more from spaced learning trials.

It turns out that in a variety of different learning situations, subjects tend to make errors in judging the strength of their own memories as a consequence of basing their subjective judgements on retrieval strength rather than storage strength (Soderstrom and Bjork, 2015). One possible reason for this inaccuracy is the fact that storage strength is not available to direct experience and estimation, whereas people are more aware of the retrieval strength of a particular trace because they can check it by making retrieval attempts. In some ways the same general principle applies to experimental studies of memory, where retrieval strength is relatively easy to measure by using a simple recall test, whereas the measurement of storage strength requires more complex techniques such as tests of implicit memory, familiarity or recognition.

Most people are very poor at judging the effectiveness of their own learning, or deciding which strategies will yield the best retrieval, unless of course they have studied the psychology of learning and memory. Bjork (1999) notes that in most cases individuals tend to overestimate their ability to retrieve information, and he suggests that such misjudgements could cause serious problems in many work settings, and even severe danger in some occupations. For example, air traffic controllers and operators of nuclear plants who hold an over-optimistic view of their own retrieval capabilities may take on responsibilities that are beyond their competence, or may fail to spend sufficient time on preparation or study.

In summary, it seems that most subjects are very poor at estimating their own learning capabilities, because they base their judgements on retrieval strength rather than storage strength. Consequently, most people mismanage their own learning efforts because they hold a faulty mental model of learning processes (Soderstrom and Bjork, 2015). In any learning situation it is important to base judgements of progress on more objective measures of long-term learning rather than depending on the subjective opinion of the learner. This finding obviously has important implications for anyone involved in learning and study, and most particularly for instructors and teachers.

6.7 SLEEP AND MEMORY CONSOLIDATION

Recent research has shown that sleep plays an important role in memory consolidation. When trying to learn large amounts of new information, it is sometimes tempting to sacrifice sleep in exchange for a few extra hours of time in which to work. Such behaviour is known to be quite common among students preparing for examinations. However, if your performance depends on effective memory function, cutting back on sleep might not be a good idea.

Until relatively recently, sleep was widely considered to serve little function other than recovery from fatigue. However, our understanding of its role has since changed dramatically. Within the past quarter of a century there has been a rapid growth in sleep research, and it has now been firmly established that sleep plays a crucial role in a range of physical and psychological functions, including the regulation of memory function (Stickgold, 2005).

To understand the importance of sleep for memory, it helps to think of memory as involving three stages:

1 Initial learning or storage of an item of memory;

2 Consolidation of the previously stored item for future retrieval;

3 Successful retrieval of the memory from storage.

Figure 6.16 Why not sleep on it?

Source: copyright R. Ashrafov / Shutterstock.com.

While evidence suggests that we are not capable of learning new information during sleep (Druckman and Bjork, 1994), sleep appears to be particularly important for the second of these stages, consolidation. Much of the evidence for the role of sleep in memory consolidation comes from sleep restriction studies, which show that restricting sleep significantly impairs the consolidation of information learnt during the preceding day. Such effects have been demonstrated for a range of different forms of memory, including word learning (Plihal and Born, 1997), motor skills (Fischer et al., 2002), procedural memory (Plihal and Born, 1997), emotional memory (Wagner et al., 2002) and spatial memory (Nguyen et al., 2013).

A very neat demonstration of this was provided by Gaskell and Dumay (2003), who had their participants learn a set of novel words that resembled familiar words (e.g. Cathedruke, Shrapnidge) and then measured how quickly they responded to the familiar words (e.g. Cathedral, Shrapnel). Learning the new words slowed participants' recognition of the familiar words, but crucially this interference effect was present only after a night's sleep.

Several studies have demonstrated similar effects of post-learning sleep on memory consolidation. Importantly, a study by Gais et al. (2007) showed that these effects are not restricted to performance on the following day, but remain evident long after the initial learning session. Moreover, this study used MRI scans to show that sleep-dependent consolidation is reflected in changes to the brain regions associated with the memory. This suggests that consolidation during sleep is not merely a strengthening of the memory, but a physical process of reorganisation in which memories are relocated to appropriate regions of the brain.

Sleep consists of cycles of different stages, which are characterised primarily by the different frequencies of brain waves that can be recorded with an electroencephalogram (EEG) during each stage (Dement and Kleitman, 1957). Consolidation of declarative memory appears to benefit mainly from 'slow wave sleep', which tends to dominate the early part of sleep, while consolidation of non-declarative memory (such as emotional and procedural memory) benefits mostly from 'rapid eye movement' (REM) sleep, which typically occurs later in the sleep period (Smith, 2001; Gais and Born, 2004).

The precise mechanisms underlying the consolidation of memory during sleep are still not fully understood. EEG studies show that intensive declarative learning during the day is followed, in the early part of sleep, by an increase in bursts of brain activity known as 'sleep spindles'. Sleep spindles are thought to reflect the reprocessing of previously learnt information (Gais and Born, 2004), and increased spindle activity has also been shown to predict subsequent improvement in memory performance (Schabus et al., 2004).

Thus far we have considered the role of sleep in memory consolidation, but sleep's influence on memory does not end there. Returning to the three stages of memory outlined above, the third stage, retrieval, can also be influenced by sleep. Even if post-learning sleep is normal and memories are well stored and consolidated, sleep deprivation prior to retrieval can significantly reduce one's ability to retrieve items from memory accurately (Durmer and Dinges, 2005). In fact, sleep deprivation not only reduces the ability to recall the right information, but can also increase the generation of false memories (Frenda et al., 2014) and impair working memory performance (Durmer and Dinges, 2005; Banks and Dinges, 2007). These findings suggest that cutting back on sleep the night before an exam or other memory task would be ill-advised.

Given all of the points discussed above, the obvious question is how we might better regulate our sleep. First, it should be noted that for some people, poor sleep quality may be the product of a health condition. For many of us, however, sleep quality and sleep duration are influenced by habit and lifestyle. Sleep research points to a range of behaviours that are conducive to good sleep, broadly labelled by sleep scientists as 'sleep hygiene'. Some examples of good sleep hygiene are listed below:

✵ Set a regular bedtime and awakening time.

✵ Aim for a suitable duration of sleep (around 8 hours) per night.

✵ Try to ensure exposure to natural light during the daytime.

✵ Before sleep, try to avoid the use of bright-light-emitting screens.

✵ Avoid large meals, caffeine, nicotine and alcohol close to bedtime.

✵ If possible, do regular daytime exercise (ideally during the morning or afternoon).

Maintaining these habits may improve sleep, and in turn memory performance.

In summary, while the mechanisms by which sleep influences memory are not yet fully understood, it is clear that sleep plays a particularly important role in the consolidation of previously stored memories, and that sleep deprivation can also impair their retrieval. So no matter how well one plans one's learning, getting an appropriate amount of sleep is also crucial to memory function.

SUMMARY

✵ Learning can be made more effective by semantic processing (i.e. focusing on the meaning of the material to be learnt), and by the use of imagery.

✵ Mnemonic strategies, often making use of semantic processing and imagery, can assist learning and memory, especially for material that has little intrinsic meaning.

✵ Retrieval can be enhanced by strategies to increase the effectiveness of retrieval cues, including contextual cues.

✵ Retrieving and testing a memory makes it more retrievable in the future, whereas disused memories become increasingly inaccessible.

✵ Retrieving an item from memory tends to inhibit the retrieval of other related items.

✵ Learning is usually more efficient when trials are ‘spaced’ rather than ‘massed’, especially if expanding retrieval practice is employed.

✵ Attempts to predict our own future retrieval performance are frequently inaccurate, because we tend to base such assessments on estimates of retrieval strength rather than storage strength.

✵ Sleep appears to be particularly important for consolidation of previously acquired memories. Avoiding sleep deprivation and ensuring regular, good-quality sleep may be one quite simple way to improve memory performance.

FURTHER READING

✵ Cohen, G. and Conway, M. (2008). Memory in the real world. Hove: Psychology Press. A book that focuses only on memory, but provides a very wide-ranging account of memory performance in real-life settings.

✵ Groome, D.H. et al. (2014). An introduction to cognitive psychology: Processes and disorders (3rd edn). Hove: Psychology Press. This book includes chapters on memory covering the main theories and lab findings, which provide a background for the applied cognitive studies in the present chapter.

✵ Worthen, J.B. and Hunt, R.R. (2011). Mnemonology: Mnemonics for the 21st century. Hove: Psychology Press. A good source of mnemonic techniques.